Introduction

You do not need weeks of product discovery to learn the obvious.

Most teams waste time because they start discovery without scope clarity, without a decision owner, and without a plan to turn findings into a build ready backlog.

This guide is a practical system for running a discovery sprint that produces:

- A clear problem statement and success metrics

- A ranked list of assumptions and risks

- A prototype that answers the biggest unknowns

- A backlog you can actually ship from

Insight: If discovery does not change a decision, it is just meetings with notes.

You will see templates, workshop agendas, and a plan to get ship ready in 10 days. Use it as a discovery sprint template, or steal parts of it for your next kickoff.

What this guide optimizes for

This is built for teams that need to validate fast and then ship.

It assumes:

- You have stakeholders who want answers this month, not this quarter

- Engineering time is expensive and limited

- You want evidence, not opinions

It does not assume:

- Perfect access to users

- A mature research function

- A greenfield product team

Discovery sprint template

One page you can paste into your doc

Use this as your discovery sprint template header:

- Problem statement:

- Target user:

- Context:

- Non goals:

- Success metrics:

- Top 10 assumptions:

- Top 5 risks:

- Validation plan:

- Decision owner:

- Day 10 decision meeting time:

Tip: keep it to one page. Add links, not paragraphs.

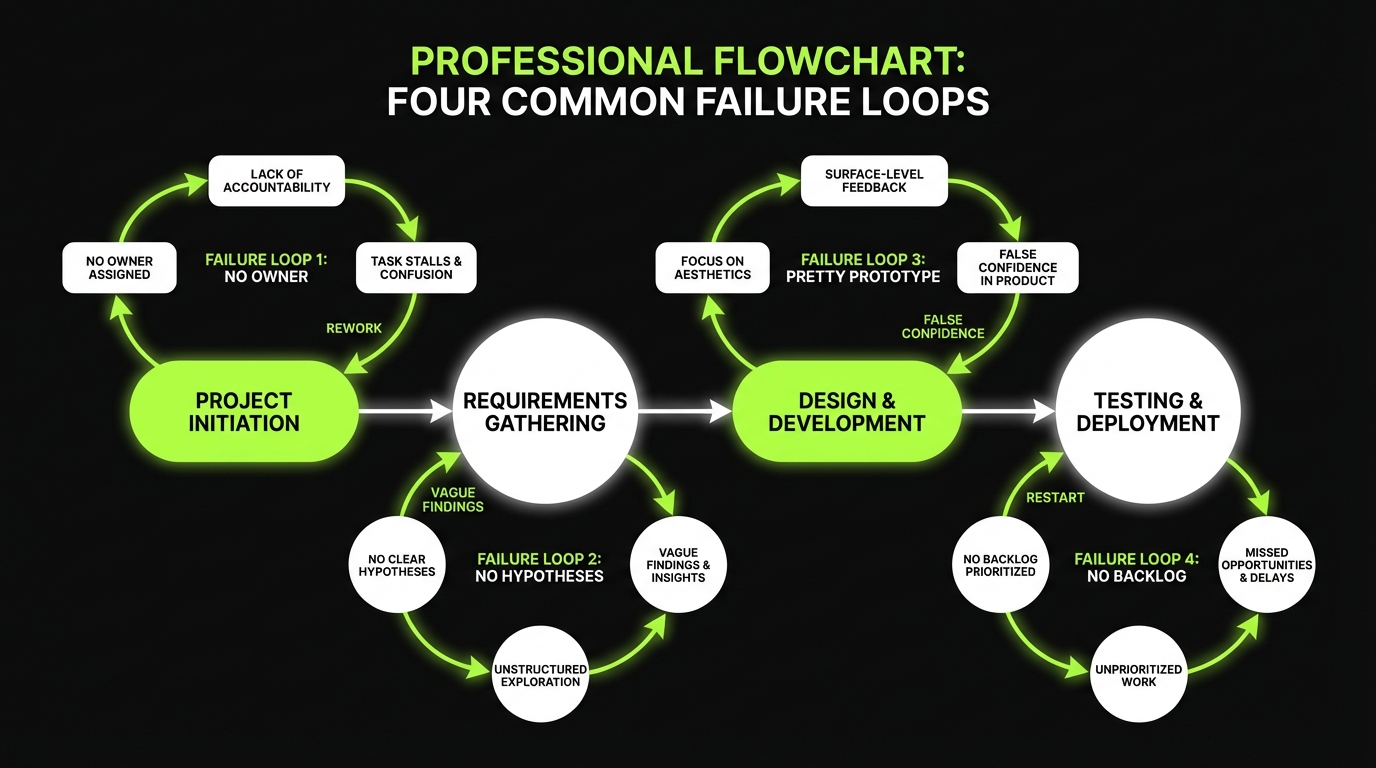

Why discovery drags on

Discovery runs long for predictable reasons. Fix those and the sprint gets shorter.

Common failure modes:

- No decision maker. Everyone can veto, nobody can commit.

- Research without a hypothesis. You collect quotes, not signal.

- Prototypes that look good but test nothing important.

- Outputs that are not buildable. Lots of slides, no backlog.

Key Stat: In our delivery work across 360+ projects, the fastest teams are not the ones with more meetings. They are the ones with tighter decisions and smaller test loops.

Here is the trade off to accept: speed comes from constraints. You will not explore every idea. You will answer the few questions that unblock build.

What scope clarity looks like

You want scope clarity at three levels:

- User scope: which user segment and context you are designing for

- Problem scope: which job to be done, and what is out of scope

- Solution scope: what you will and will not build in the first release

A quick test:

- If you cannot write a one sentence problem statement, you are not ready to workshop.

- If you cannot define a success metric, you are not ready to prototype.

Insight: The goal is not to be right. The goal is to be specific enough to be wrong quickly.

Discovery sprint proof points

Numbers we use to keep discovery honest

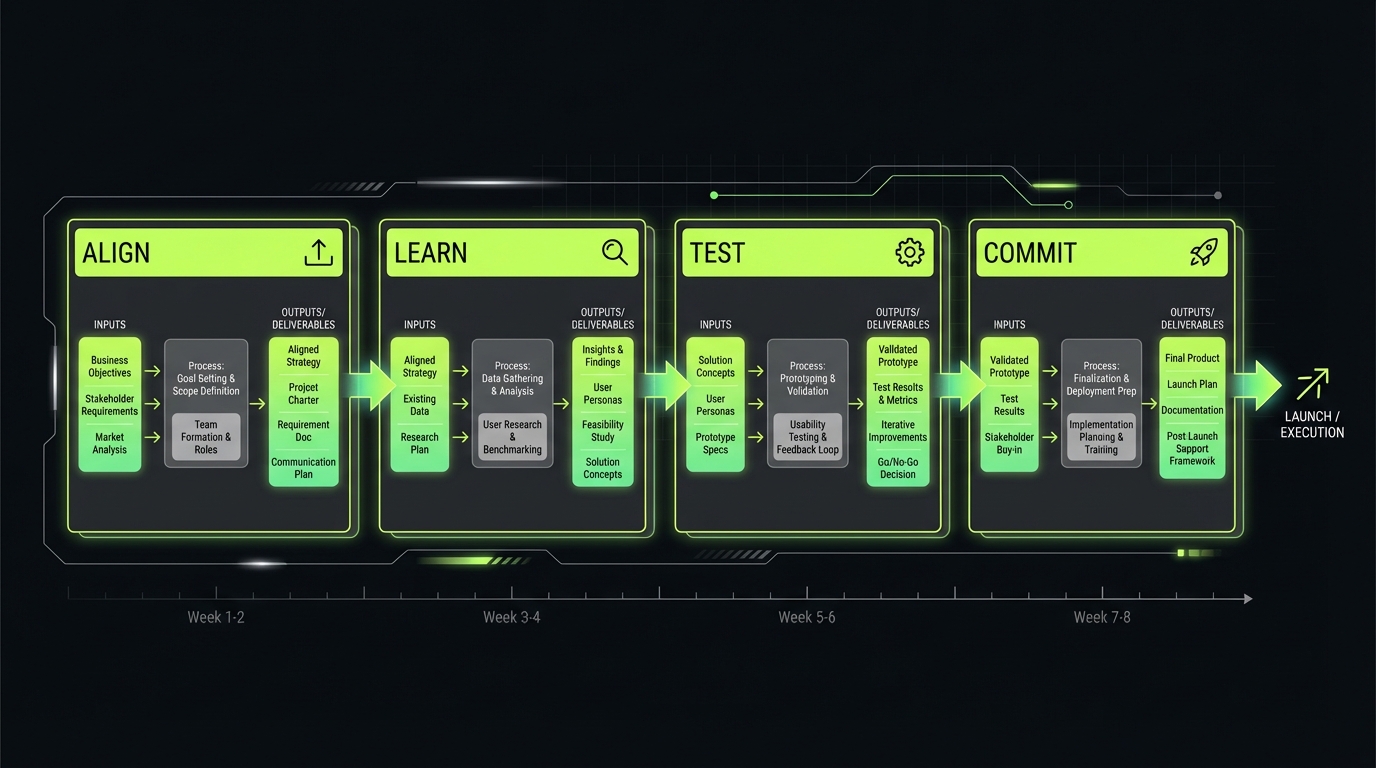

Discovery sprint structure and outcomes

A discovery sprint should be short, opinionated, and output driven. Below is a structure we use when the goal is fast validation and a build ready plan.

Core outcomes

You are done when you have:

- Decision: go, no go, or pivot with a clear reason

- Assumptions: ranked by risk and test cost

- Prototype: good enough to test the top 3 assumptions

- Backlog: epics and acceptance criteria for the first release

- Decision log: what you decided, what you deferred, and why

Insight: If the sprint does not end with a decision, it will leak into delivery as churn.

Product discovery workshop agenda

Use this product discovery workshop agenda as a default. It is designed for a 2 day workshop, followed by research and validation.

Day 1 (2.5 to 3.5 hours)

- Context and constraints (15 min)

  - Budget, timeline, compliance constraints

- Problem framing (30 min)

  - Who, what job, what pain, why now

- Current journey mapping (45 min)

  - Steps, drop offs, workarounds

- Assumptions dump (30 min)

  - Value, usability, feasibility, viability

- Risk ranking (30 min)

  - Impact vs uncertainty

- Success metrics (20 min)

  - One primary metric, two supporting metrics

Day 2 (2.5 to 3.5 hours)

- Solution sketching (45 min)

  - 2 to 3 competing approaches

- Prototype scope (30 min)

  - What to test, what to fake

- Validation plan (45 min)

  - Participants, scripts, tasks, pass fail criteria

- Backlog outline (45 min)

  - Epics, dependencies, definition of done

- Decision gates (20 min)

  - What would make us stop

Checklist before you invite people:

- Named decision owner

- Written problem statement draft

- Known user segment

- Access plan for 5 to 8 participants

- Prototype tool and owner

Workshop roles

Keep the room small

A good discovery room has clear roles:

- Decision owner: final call

- Facilitator: keeps time and resolves ambiguity

- Product lead: owns problem and backlog

- Tech lead: feasibility and spikes

- Designer: prototype and journey mapping

- Researcher or PM: interviews and synthesis

Rule: if someone cannot contribute to a decision, they do not need to be in every session.

User research shortcuts with high signal

You do not need 30 interviews to find the first 3 deal breakers.

Test Assumptions, Not UI

Pass or fail criteria

Start by writing assumptions in four buckets: value, usability, feasibility, viability. Then pick the top 3 by impact and uncertainty. A test plan that does not waste days:

- Define pass fail evidence for each assumption (if you cannot, you are not ready to test)

- Build the smallest prototype that can fail

- Run 5 to 8 sessions with realistic tasks

- Decide and log it the same day

Prototype choice is a trade:

- Clickable wireframes: fast for flow, misses edge cases

- Concierge / Wizard of Oz: good for value and AI like behavior, hides scale and ops cost

- Thin vertical slice: best for integration risk, slower to build

AI specific checks (treat as product requirements): correctness (answers match sources) and safety (no policy breaking outputs). In one AI avatar build we saw that latency and stream handling were not “details”; they were the product. Measure response delay and task completion, not UI polish.

The point of research in a sprint is to reduce uncertainty fast. That means you pick methods with high signal per hour.

High signal shortcuts:

- 5 to 8 interviews with a tight screener

- Support ticket review for recurring pain and language

- Sales call shadowing for objections and buying triggers

- Session replays for where users hesitate or rage click

- Competitor teardown focused on flows, not features

Insight: A pattern we see often is usage spiking after a demo, then support tickets climbing. That gap shows up when teams measure output (features shipped) instead of outcomes (tasks completed, fewer tickets, lower churn).

When AI is involved, add one more shortcut: review failure cases. Wrong answers, missing sources, latency spikes. Those are product problems, not just model problems.

In our work on AI heavy products, we treat AI behavior as a product surface with its own acceptance criteria and QA gates. If you skip that in discovery, you pay for it later in production.

Interview script that stays focused

Keep the script short. You are hunting for behavior, not opinions.

Use these prompts:

- Walk me through the last time you tried to do X.

- What did you try first?

- Where did you get stuck?

- What did you do instead?

- What would make you stop using this?

Avoid:

- Would you use this?

- How much would you pay?

- Do you like this design?

Add one quant question at the end:

- If this solved X, how often would you do it per week?

That number helps you estimate value and prioritize.

Assumptions scoring rubric

Score each assumption from 1 to 5.

- Impact: if wrong, how bad is it?

- Uncertainty: how likely are we wrong?

- Test cost: how hard is it to test?

Prioritize:

- High impact, high uncertainty, low test cost

Deprioritize:

- Low impact assumptions, even if uncertain

- High cost tests unless they unblock the whole product
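
The rubric can be turned into a sortable list. Below is a minimal Python sketch, not a prescribed method. Risk as impact times uncertainty matches the risk score used later in this guide; dividing by test cost to get a priority is an assumption made here for illustration, and the `Assumption` class, the `rank` function, and the sample statements are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumption:
    statement: str
    impact: int       # 1 to 5: if wrong, how bad is it?
    uncertainty: int  # 1 to 5: how likely are we wrong?
    test_cost: int    # 1 to 5: how hard is it to test?

    def __post_init__(self) -> None:
        # Reject scores outside the 1 to 5 scale used by the rubric.
        for name in ("impact", "uncertainty", "test_cost"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def risk(self) -> int:
        # Risk score: impact x uncertainty.
        return self.impact * self.uncertainty

    @property
    def priority(self) -> float:
        # Assumed formula: cheap tests of risky assumptions float to the top.
        return self.risk / self.test_cost


def rank(assumptions: list[Assumption]) -> list[Assumption]:
    """Return assumptions ordered from highest to lowest priority."""
    return sorted(assumptions, key=lambda a: a.priority, reverse=True)


# Hypothetical sample data, for illustration only.
backlog = [
    Assumption("Users want a dark theme", impact=1, uncertainty=4, test_cost=2),
    Assumption("Users trust AI answers enough to act", impact=5, uncertainty=4, test_cost=2),
    Assumption("Billing API can handle real time sync", impact=5, uncertainty=3, test_cost=5),
]

for item in rank(backlog):
    print(f"{item.priority:>5.1f}  risk={item.risk:<3} {item.statement}")
```

With these sample scores, the low impact assumption lands last even though it is uncertain, and the expensive test ranks below the cheap one. If a costly test unblocks the whole product, override the ranking by hand rather than tuning the formula.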

Artifacts that prevent churn

Keep them lightweight and linkable

Journey snapshot

A 6 to 10 step view of the current workflow with friction points and evidence.

Assumptions list

Ranked by impact and uncertainty, with test method and owner.

Risk map

A simple impact vs uncertainty grid that drives what you test first.

Decision log

One source of truth for what was decided, why, and what would change it.

Prototype link

A single prototype that maps to assumptions, not a gallery of screens.

Build ready backlog

Epics, stories, acceptance criteria, and time boxed spikes for unknowns.

Prototype validation and assumption testing

Prototypes fail when they are built to impress stakeholders.

Why Discovery Drags

Four predictable loops

Discovery runs long for boring reasons. Fix the system, not the calendar. Common loops and fixes:

- No decision maker → rework. Fix: name an owner who can commit. Everyone else advises.

- No hypothesis → vague findings. Fix: write what you believe and what would change your mind.

- Pretty prototype → false confidence. Fix: prototype to break assumptions, not to impress.

- No buildable output → restart. Fix: end with backlog items and acceptance criteria.

Based on delivery work across 360+ projects, the fastest teams are not the ones with more meetings. They run tighter decisions and smaller test loops. The trade off: you will not explore everything. You answer the few questions that unblock build.

A sprint prototype has one job: test assumptions. Not all assumptions are equal, so start with a clear list.

Assumption types to write down

- Value: users care enough to switch behavior

- Usability: users can complete the key task without help

- Feasibility: engineering can build it within constraints

- Viability: it works for pricing, legal, security, support

Insight: The fastest way to slow down delivery is to validate the wrong thing.

How to validate product idea fast

Run tests that produce pass fail evidence.

- Pick the top 3 assumptions by impact and uncertainty

- Define what success looks like for each

- Build the smallest prototype that can fail

- Run 5 to 8 sessions with realistic tasks

- Decide and log it the same day

A useful rule: if you cannot write a pass fail criterion, you are not ready to test.
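
Writing the criterion down before testing can be as simple as a small record with a threshold. Here is a rough Python sketch under stated assumptions: the `PassFailCriterion` class and its fields are hypothetical names, and the 4 of 6 threshold is only an example value that you should set per assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PassFailCriterion:
    assumption: str
    min_passes: int      # sessions that must succeed for the assumption to hold
    total_sessions: int  # sessions planned before anyone sees results

    def evaluate(self, session_results: list[bool]) -> str:
        """Return 'incomplete', 'pass', or 'fail' for the recorded sessions."""
        # Do not call it early: wait until the planned sessions are done.
        if len(session_results) < self.total_sessions:
            return "incomplete"
        passes = sum(session_results)  # True counts as 1, False as 0
        return "pass" if passes >= self.min_passes else "fail"


# Hypothetical example: one entry per session, True if the user
# completed the task without help.
criterion = PassFailCriterion(
    assumption="Users can complete the key task without help",
    min_passes=4,
    total_sessions=6,
)
print(criterion.evaluate([True, True, False, True, True, False]))
```

Fixing the threshold and session count up front is the point. It stops the team from moving the bar after the sessions are in.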

Prototype fidelity guide

| Prototype type | Best for | What it can miss | Typical build time |

|---|---|---|---|

| Clickable wireframes | Flow comprehension, information order | Performance, edge cases | 0.5 to 2 days |

| Concierge prototype | Value and willingness to pay | Scale, automation complexity | 1 to 3 days |

| Wizard of Oz | AI like behavior without full model | Operational load, failure modes | 2 to 5 days |

| Thin vertical slice | Feasibility and integration risk | Broad usability | 3 to 8 days |

Example: When we built a real time conversational AI avatar in 4 weeks, latency and stream handling were not details. They were the product. Early tests focused on response delay and conversation feel, not UI polish.

If your product includes LLM features, add two more assumption checks:

- Correctness: how often answers match sources

- Safety: how often the system produces unsafe or policy breaking outputs

Treat those as backlog items with measurable gates, not vague goals.
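
A measurable gate could look like the Python sketch below. It assumes a reviewer has labeled each test answer by hand. The `EvalResult` and `quality_gate` names are hypothetical, and the default thresholds (90% correct, zero violations) are placeholders, not recommendations from this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    matches_sources: bool   # reviewer judged the answer consistent with its sources
    policy_violation: bool  # reviewer flagged the output as unsafe or policy breaking


def quality_gate(
    results: list[EvalResult],
    min_correct_rate: float = 0.9,    # placeholder threshold, set your own
    max_violation_rate: float = 0.0,  # placeholder threshold, set your own
) -> dict:
    """Compute correctness and safety rates and check them against the gate."""
    if not results:
        raise ValueError("need at least one labeled result to evaluate the gate")
    total = len(results)
    correct_rate = sum(r.matches_sources for r in results) / total
    violation_rate = sum(r.policy_violation for r in results) / total
    return {
        "correct_rate": correct_rate,
        "violation_rate": violation_rate,
        "passed": correct_rate >= min_correct_rate
        and violation_rate <= max_violation_rate,
    }


# Hypothetical labeled sample: 9 correct answers, 1 incorrect, no violations.
sample = [EvalResult(True, False)] * 9 + [EvalResult(False, False)]
print(quality_gate(sample))
```

The gate then goes into the backlog as an acceptance criterion for the AI feature, next to the UI stories.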

- Assumption: Users will trust AI answers enough to act.

- Pass: 4/6 users complete the task using AI output without manual verification.

- Fail: More than 2 users ask for sources or switch to manual flow.

- Next: Add citations, constrain scope, or change UX to require confirmation.

Artifact examples you can copy

You need artifacts that are easy to update and hard to misread.

- Journey snapshot

  - Actor: who is doing the work

  - Trigger: what starts the journey

  - Steps: 6 to 10 steps max

  - Friction: where time or errors happen

  - Evidence: quotes, tickets, analytics

- Assumptions and risks list

  - Assumption statement

  - Type: value, usability, feasibility, viability

  - Risk score: impact x uncertainty

  - Test method: interview, prototype, spike

  - Owner and date

- Risk map

  - High risk, low test cost first

  - Low risk, high cost later

Keep it simple. The artifact is a tool for decisions, not a report.

What you get when discovery is tight

Fewer reopened decisions

Teams stop relitigating scope because decisions and trade offs are documented.

Faster onboarding for engineers

A clear backlog and artifacts reduce ramp time and handoff confusion.

Better prototype signal

Tests target the biggest risks, so results change real decisions.

Production ready AI thinking

Correctness, safety, and latency become requirements early, not surprises later.

Turning discovery into a build ready backlog

This is where most discovery work dies. Teams stop at insights.

Discovery That Ends

A sprint is a decision

If discovery does not change a decision, it is just meetings with notes. Treat the sprint as a short path to a go, no go, or pivot call. Minimum outputs to ship from:

- Decision + reason (write it down the same day)

- Ranked assumptions (risk vs test cost)

- Prototype that tests the top 3 unknowns (not stakeholder polish)

- Backlog with epics + acceptance criteria for release 1

- Decision log (decided, deferred, why)

Failure mode to watch: a “successful” sprint that ends with slides. Mitigation: schedule a final checkpoint up front and make one person the decision owner.

Your goal is a backlog that a self directed engineering team can ship from without reopening every decision.

Backlog conversion rules

- Every epic ties to a user outcome and a metric

- Every story has acceptance criteria and a test note

- Unknowns become spikes with a time box

- AI features get quality gates, not just UI tasks

Insight: If you cannot write acceptance criteria, you do not have a requirement. You have a wish.

Decision log template

Use a decision log to prevent scope drift and stakeholder rewind.

- Decision ID: D-014

- Date:

- Decision owner:

- Context:

- Options considered:

- Decision:

- Why:

- What we are not doing:

- Risks accepted:

- Revisit trigger (metric or event):

- Links (prototype, tickets, docs):

Ship ready in 10 days plan

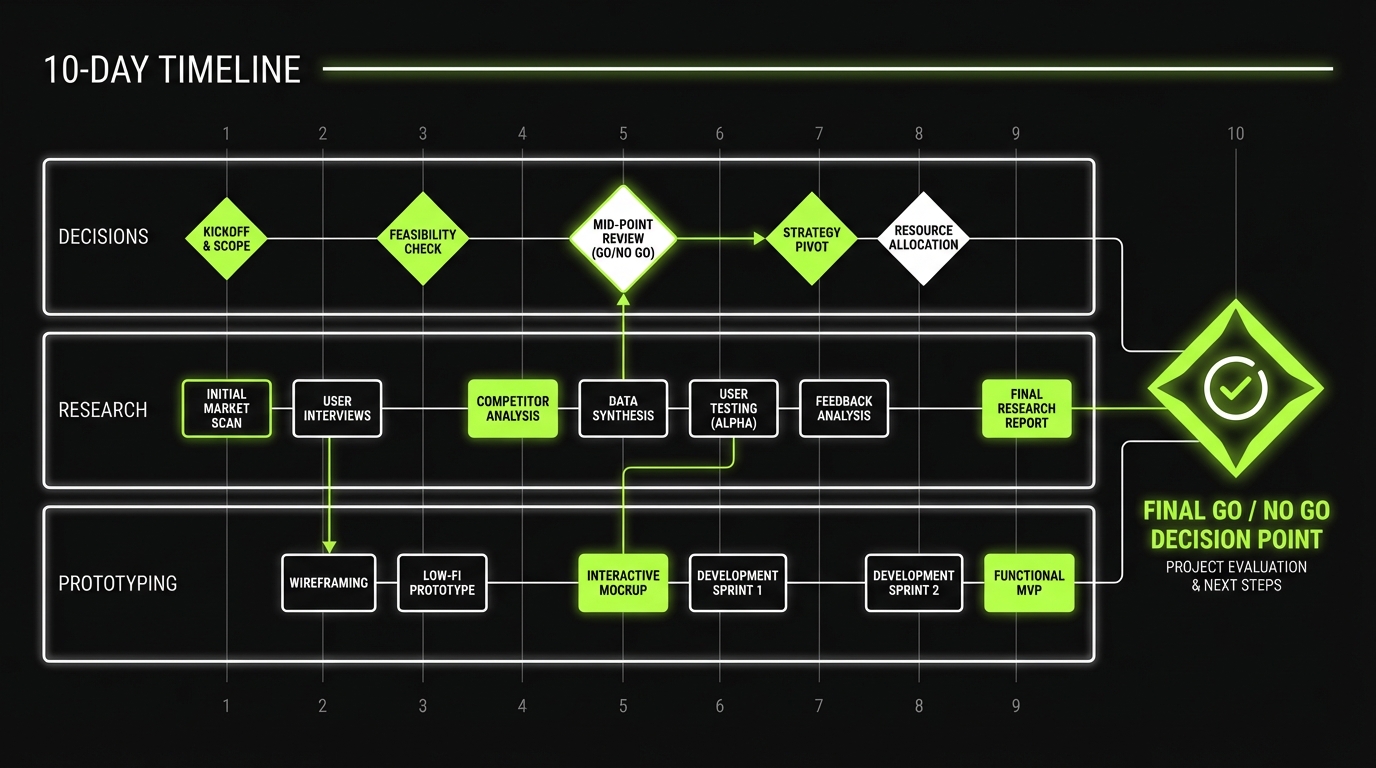

This is a practical plan when you need momentum.

- Day 1: Problem statement, constraints, success metrics

- Day 2: Journey snapshot, assumptions list, risk ranking

- Day 3: Research prep, screener, recruiting, scripts

- Day 4: Interviews 1 to 3, daily synthesis

- Day 5: Interviews 4 to 6, update assumptions

- Day 6: Prototype build, define pass fail criteria

- Day 7: Prototype tests 1 to 3, capture clips

- Day 8: Prototype tests 4 to 6, decide changes

- Day 9: Backlog writing, acceptance criteria, spikes

- Day 10: Decision review, go no go, delivery plan

What can break this plan:

- Recruiting delays

- Stakeholders missing key sessions

- Prototype scope creep

Mitigations:

- Recruit from support and sales lists first

- On day 1, schedule the day 10 decision review

- Time box prototype work and cut polish

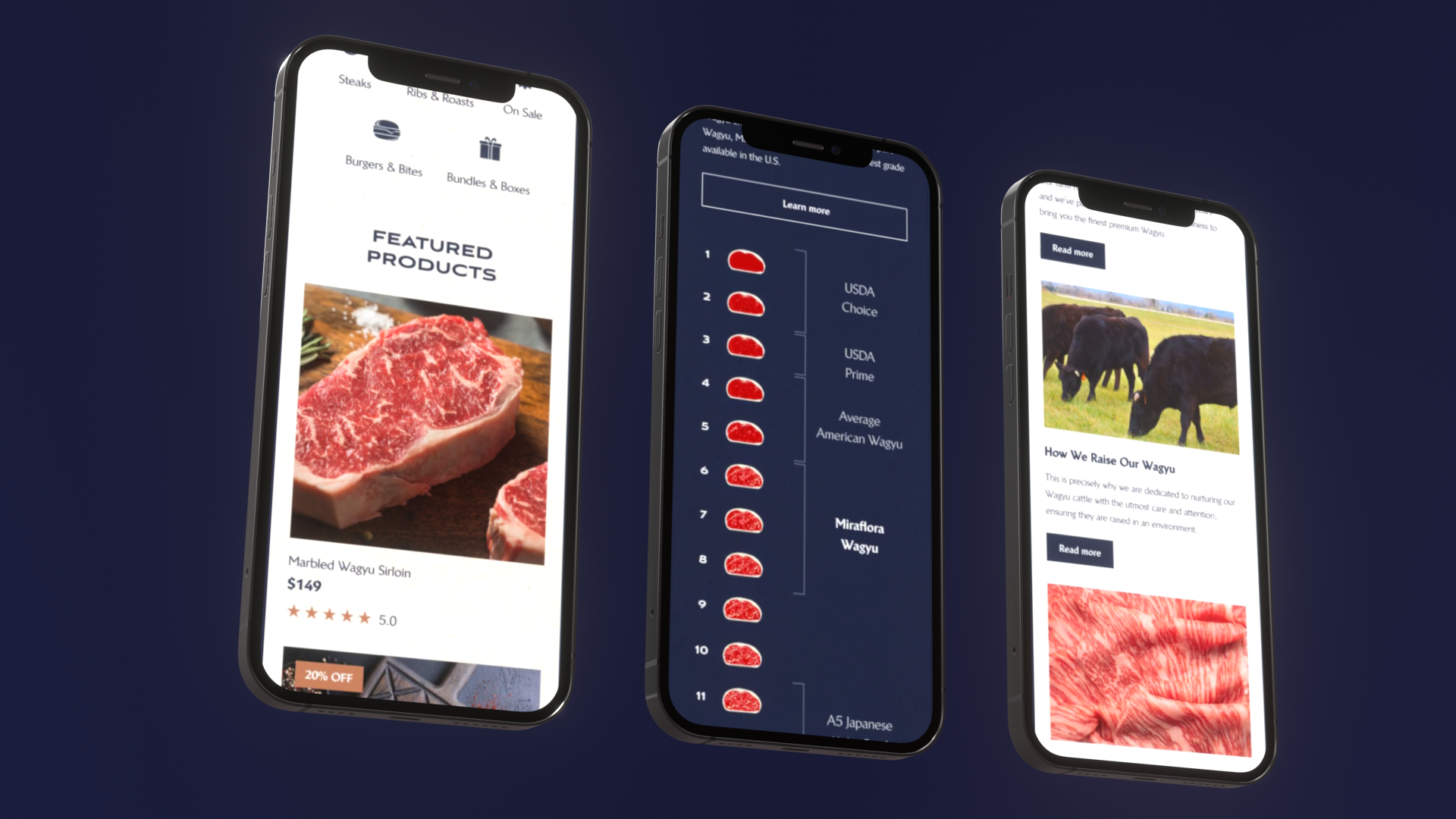

Example: In retail work like Miraflora Wagyu, speed depended on tight async feedback loops across time zones. The same principle applies in discovery. If feedback takes 48 hours, your sprint becomes a month.

Definition of done for discovery

You can call discovery done when you have:

- A one page scope doc with out of scope items

- A ranked assumptions list with test results

- A prototype link and a short findings summary

- A build ready backlog with epics, stories, and spikes

- A decision log with at least 10 entries

If any of these are missing, expect churn once delivery starts.

Conclusion

Fast discovery is not about skipping thinking. It is about shortening the loop between a question and evidence.

If you want a practical starting point, run the 10 day plan once. Then measure what improved.

Track these metrics:

- Days from kickoff to go no go decision

- Number of decisions reopened during build

- Prototype test pass rate on the top 3 assumptions

- Support ticket volume for the shipped feature

Insight: The best discovery sprint is the one that makes delivery boring.

Next steps you can do this week:

- Write your problem statement in one sentence

- Create an assumptions list with risk ranking

- Pick one prototype test that can fail in 48 hours

- Start a decision log and keep it public

Do that, and you will stop wasting weeks without pretending uncertainty does not exist.