Introduction

Most RFPs fail in a predictable way. They describe what you want to buy, but not what it takes to deliver it.

You get polished PDFs. You get confident estimates. Then delivery starts and the gaps show up fast: unclear ownership, weak QA, missing security posture, and AI features that were never evaluated beyond a demo.

This guide gives you a software development RFP template that surfaces real delivery capability. It includes copy-ready prompts, a requirements library, AI RFP questions, a vendor selection rubric, and timeline planning with mandatory proof requests.

Insight: If your RFP does not force vendors to show how they ship, you are selecting for writing, not delivery.

Use it as is, or strip it down to the parts that match your risk profile.

What you will walk away with:

- A clear RFP structure that vendors can answer without guessing

- Requirements you can reuse across projects: security, quality, delivery

- A scoring rubric that reduces internal debate

- A timeline plan that requests proof early, not after contracting

Who this is for

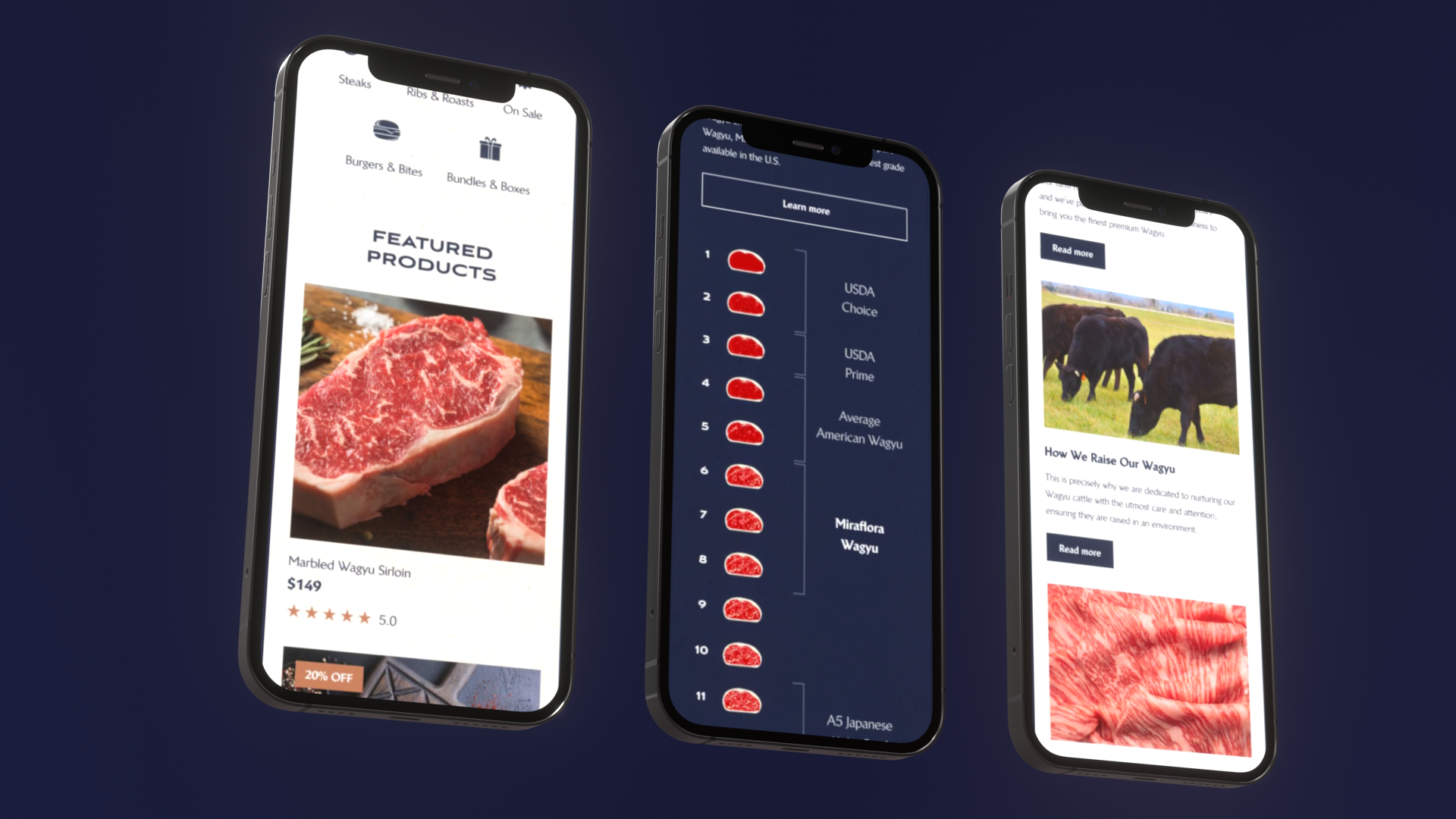

This is for procurement and product teams buying:

- Custom software development

- SaaS development with integrations

- AI systems such as RAG search, copilots, agents, and internal knowledge assistants

If you are evaluating multiple vendors, or even deciding between internal build vs external delivery, the same structure helps.

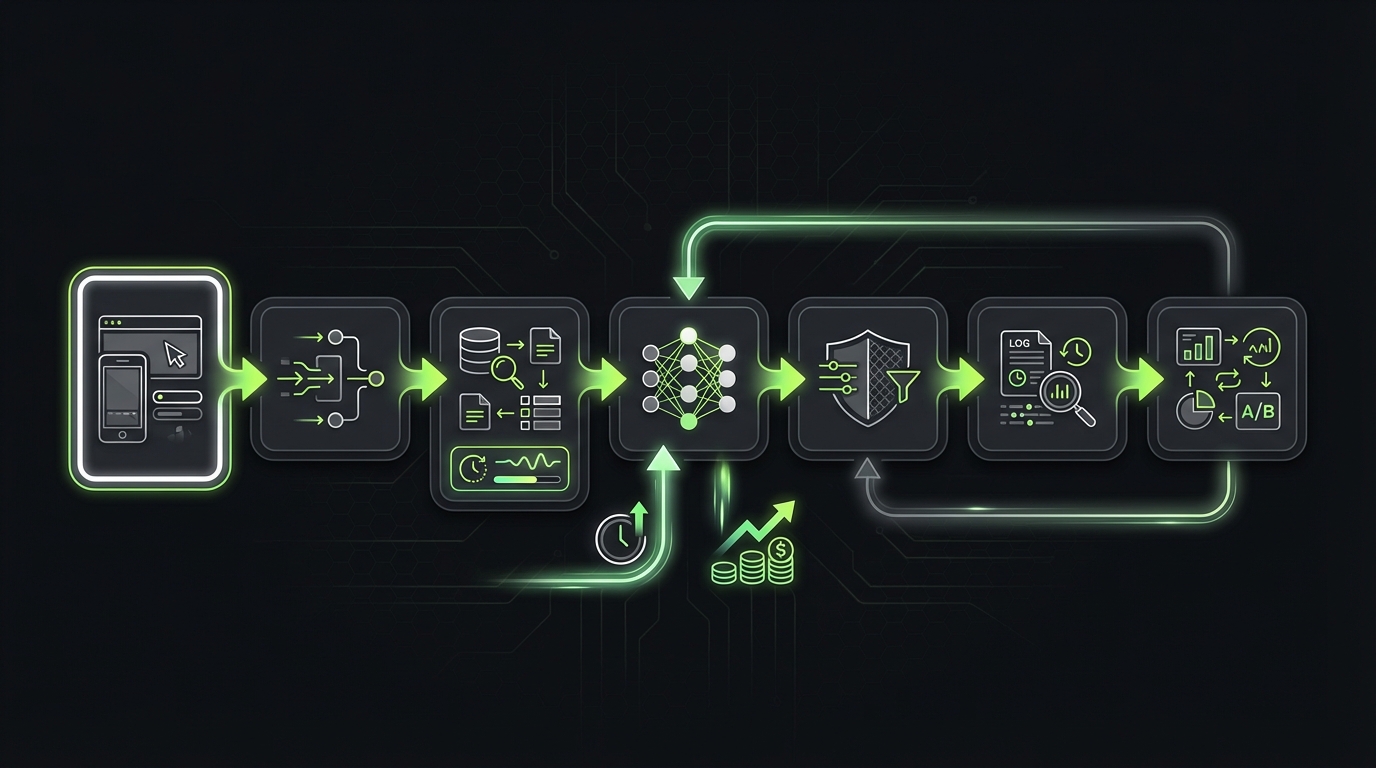


Write an RFP that finds delivery

A good RFP makes it hard to hide behind generic process talk. It asks for decisions, constraints, and proof.

Here is the pattern that works in practice:

- Define the outcome in measurable terms.

- Define the constraints that shape delivery.

- Ask for the plan with roles, milestones, and risks.

- Ask for proof tied to those risks.

Key Stat: In our delivery work, the biggest schedule slips rarely come from coding speed. They come from unclear scope boundaries, slow decision loops, and late discovery of non-functional requirements.

Common RFP failure modes

These are the red flags that produce nice responses and bad projects:

- Requirements are a feature wishlist, not a prioritized backlog

- No definition of done for quality, security, and release readiness

- AI scope is described as prompts and model choice, not systems work

- Timeline is a single date with no milestones or acceptance gates

- Evaluation is subjective, so the loudest stakeholder wins

Mitigation is simple. Make vendors answer with artifacts you can inspect.

Copy-ready RFP sections and prompts

Use these sections verbatim. Vendors should respond in the same order.

1) Background and goals

- What problem are we solving?

- Who are the users?

- What does success look like in 90 days and 12 months?

2) Scope and out of scope

- In scope: list 5 to 10 items

- Out of scope: list 5 items

- Assumptions vendors may not make

3) Current state

- Systems involved and owners

- Data sources and access constraints

- Known tech debt and constraints

4) Requirements and acceptance

- Functional requirements by workflow

- Non-functional requirements: security, quality, delivery

- Acceptance criteria and test expectations

5) Delivery approach

- Team composition by role and seniority

- How you run discovery, build, QA, release

- How you handle change requests

6) AI-specific section (if applicable)

- AI RFP questions (see below)

- Evaluation plan, monitoring, and guardrails

7) Timeline and milestones

- Proposed plan with dates

- Dependencies and what you need from us

8) Proof and references

- Case studies with metrics

- Reference calls

- Security and compliance evidence

9) Commercials

- Pricing model and assumptions

- Rate card if relevant

- What is included vs out of scope

10) Response format

- Page limits

- Required attachments

- Deadline and Q&A process

Insight: The best prompt in any RFP is: "Show the artifact." Plans are cheap. Artifacts cost time, so only teams that actually do the work can provide them.

Copy-ready proof requests

Use these as mandatory attachments. Require these items in every response:

- Case studies: 2 to 3 that match your domain and constraints. Each must include timeline, team size, and one measurable outcome.

- References: 2 reference calls, one from a project that had issues.

- Standards and policies: secure SDLC checklist, incident response outline, data handling policy.

- Sample artifacts: release checklist, sprint board snapshot, runbook excerpt.

- AI-specific (if applicable): evaluation plan, example test set, monitoring and alerting plan.

If a vendor pushes back, ask why. Sometimes it is confidentiality. That is fine. Redaction is normal. Refusal is the signal.

What to demand in responses

These items separate delivery teams from slide decks.

Named team and seniority

Ask who will actually ship the work, not who will sell it. Require roles, seniority, and time allocation.

Architecture and tradeoffs

A diagram plus 5 tradeoffs beats 20 pages of generic text. Look for failure modes and constraints.

QA and release gates

Require a definition of done, test strategy, and release checklist. No checklist, no confidence.

Security posture evidence

Policies, scans, and a redacted threat model. Security is not a paragraph; it is a workflow.

AI evaluation plan

Ask how they measure task success, detect drift, and handle low-confidence outputs. This is the difference between a demo and production.

Change control mechanics

How do they handle scope changes without drama? Look for a clear process and pricing assumptions.

Requirements library: security, quality, delivery

This is the reusable part. Keep it as a library and pull it into each RFP.

Score before you read

Reduce internal debate. Set the rubric and weights before responses arrive, or you will score whoever tells the best story. Keep price in proportion: if price carries more than 30% of the score, you are signaling that delivery quality is optional. Practical setup:

- Use a 1 to 5 scale where a 4 or 5 requires artifacts you can inspect.

- Add two hard gates: Security baseline and Proof (references or relevant case studies).

- Run a 30- to 60-minute calibration: score one response together and write down what a "3" vs a "4" means.

What to measure (if you want to validate the rubric): post-project scope variance, timeline slip drivers, and defect rate after launch.
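If you keep the rubric in a spreadsheet or script, a quick check before responses arrive can catch weights that drift during internal debate. The sketch below is illustrative, not standard tooling: it assumes the category weights from the rubric table in this guide, treats a hypothetical `commercials` key as the category that carries price, and flags weights that do not sum to 100 or that give price more than 30% of the score.

```python
# Hypothetical rubric weights (percent), mirroring the category table in this guide.
RUBRIC_WEIGHTS = {
    "delivery_capability": 25,
    "technical_approach": 20,
    "quality_and_qa": 15,
    "security_and_compliance": 15,
    "ai_readiness": 15,
    "commercials": 10,  # assumed to be the category that carries price
}

PRICE_SHARE_CAP = 30.0  # percent of the total score


def validate_weights(weights: dict[str, int], price_key: str = "commercials") -> list[str]:
    """Return human-readable problems with a rubric; an empty list means it passes."""
    problems = []
    if any(w < 0 for w in weights.values()):
        problems.append("negative weight found")
    total = sum(weights.values())
    if total != 100:
        problems.append(f"weights sum to {total}, expected 100")
    if total > 0:
        price_share = 100.0 * weights.get(price_key, 0) / total
        if price_share > PRICE_SHARE_CAP:
            problems.append(
                f"price carries {price_share:.0f}% of the score (cap is {PRICE_SHARE_CAP:.0f}%)"
            )
    return problems


if __name__ == "__main__":
    print(validate_weights(RUBRIC_WEIGHTS))  # an empty list means the weights pass
    print(validate_weights({"delivery_capability": 50, "commercials": 50}))
```

Running the check once and freezing the result in the evaluation doc gives everyone the same starting point before the first response is opened.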

The goal is not to over-specify. It is to make hidden work visible early.

Security requirements (copy-ready)

Ask vendors to answer each item with: approach, tooling, and proof.

- Secure SDLC: Describe how security is built into your dev workflow.

- Access control: How do you manage least privilege across environments?

- Secrets management: Where do secrets live? How are they rotated?

- Data handling: Describe data classification and handling for PII.

- Encryption: At rest and in transit. State defaults and exceptions.

- Vulnerability management: Which SCA (dependency) and SAST scans run, and at what cadence?

- Incident response: Who is on call? What is the escalation path?

- Pen test readiness: What is your process to remediate findings?

Mandatory evidence you can request:

- A recent security policy excerpt or SDLC checklist

- Example threat model from a past project (redacted)

- Sample dependency scan report (redacted)

Insight: If a vendor cannot explain how they manage secrets and access, you are taking on operational risk, not just product risk.

Quality requirements (copy-ready)

Quality needs a definition of done. Otherwise it becomes "we tested it".

- Test strategy: Unit, integration, end-to-end. What is automated?

- Coverage expectations: Define targets or justify why not.

- Release gates: What blocks a release?

- Performance budgets: Latency, throughput, and load testing approach.

- Observability: Logging, metrics, tracing. What ships by default?

- AI QA (if applicable): How do you test systems that can change daily?

If you are buying AI features, include this:

- Evaluation datasets: who creates them and how they are maintained

- Regression testing: how you detect model drift and retrieval drift

- Failure handling: what happens when confidence is low or sources are missing
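One way to make "regression testing" concrete in a proposal is to ask the vendor to re-run the same evaluation dataset whenever a prompt, model, or index changes, and compare aggregate scores against the last accepted run. A minimal sketch of that comparison, assuming scores are normalized to [0, 1] with higher being better; `EvalRun`, `find_regressions`, the metric names, and the 0.02 tolerance are hypothetical and would be set per project:

```python
from dataclasses import dataclass


@dataclass
class EvalRun:
    """Aggregate scores from one pass over a fixed evaluation dataset."""

    label: str                 # e.g. a prompt version, model name, or index build ID
    metrics: dict[str, float]  # metric name -> score in [0, 1], higher is better


def find_regressions(
    baseline: EvalRun, candidate: EvalRun, tolerance: float = 0.02
) -> dict[str, float]:
    """Return {metric: score change} for metrics that dropped by more than `tolerance`.

    A metric missing from the candidate run is scored as 0.0, so a check that
    silently disappears is reported instead of ignored.
    """
    regressions = {}
    for name, base_score in baseline.metrics.items():
        new_score = candidate.metrics.get(name, 0.0)
        if base_score - new_score > tolerance:
            regressions[name] = new_score - base_score
    return regressions


if __name__ == "__main__":
    baseline = EvalRun("prompt-v1", {"retrieval_recall_at_5": 0.82, "answer_correctness": 0.76})
    candidate = EvalRun("prompt-v2", {"retrieval_recall_at_5": 0.83, "answer_correctness": 0.70})
    # Retrieval improved slightly, but answer correctness dropped by about 0.06,
    # so a release gate built on this check would block prompt-v2.
    print(find_regressions(baseline, candidate))
```

Tracking retrieval metrics separately from answer metrics is what lets you tell retrieval drift apart from model or prompt drift.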

Key Stat: In our work on AI systems, teams often ship a prototype in days, then spend weeks making it safe and predictable. Put evaluation and guardrails in the RFP, not in a post-launch panic.

Delivery requirements (copy-ready)

Delivery is where most vendor comparisons break down. Make it explicit.

- Cadence: Sprint length, demo frequency, and release frequency

- Ownership: Who is the delivery lead? Who owns architecture decisions?

- Backlog hygiene: How do you write, size, and refine stories?

- Documentation: What is documented, where, and when?

- Handover: What artifacts do we get if we end the engagement?

- Time zone overlap: Minimum overlap hours and escalation path

Proof you can require:

- Sample sprint board screenshots (redacted)

- Example release checklist

- Example runbook and on-call rotation

What this RFP structure buys you

Fewer surprises after signature

Security, QA, and delivery expectations are explicit, so hidden work shows up in the proposal, not in sprint 6.

Faster vendor selection

A rubric and proof gates reduce debate. You can move from responses to interviews in days, not weeks.

Better AI outcomes

AI RFP questions focus on evaluation, drift, and failure handling, which are the real sources of risk in production.

Cleaner handover and ownership

Artifact-based delivery makes it easier for your internal team to take over, audit, or switch vendors later.

AI RFP questions that matter

Most AI RFP questions are either too vague or too model-focused. Vendors answer with buzzwords and you learn nothing.

Reuse a requirements library

Make hidden work visible. Keep a reusable library for Security, Quality, and Delivery so every RFP covers the non-functional work that usually surfaces late. Request both the requirement and the evidence:

- Security: secure SDLC, access controls, threat modeling. Evidence: policy excerpt, sample scan output, threat model.

- Quality: test strategy, release gates, observability. Evidence: test plan, sample report, dashboard screenshot.

- Delivery: ownership, decision loop, release cadence. Evidence: sprint artifacts, definition of done, incident process.

Tradeoff: more upfront work for vendors. Mitigation: mark items as baseline vs optional so you do not over-specify.

Ask questions that force systems thinking: data, evaluation, latency, cost, and failure modes.

Core AI RFP questions (copy-ready)

Use these as a checklist. Ask vendors to answer with concrete examples.

Problem framing

- What job is the user trying to get done? What is the fallback when AI fails?

- What does success mean? Task success rate, time saved, deflection rate?
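Asking "what is the fallback when AI fails?" is easier to evaluate when you know what a concrete answer looks like. A minimal sketch, assuming a retrieval-based assistant where each retrieved passage carries a relevance score normalized to [0, 1]: the system only calls the model when enough passages clear a threshold, and otherwise returns an explicit fallback instead of guessing. `Passage`, `Answer`, `answer_or_fallback`, the threshold values, and the `generate` callable are all hypothetical placeholders for whatever the vendor actually uses.

```python
from collections.abc import Callable
from dataclasses import dataclass, field


@dataclass
class Passage:
    text: str
    source_id: str
    score: float  # retriever relevance score, assumed normalized to [0, 1]


@dataclass
class Answer:
    text: str
    sources: list[str] = field(default_factory=list)
    is_fallback: bool = False


MIN_SCORE = 0.6   # hypothetical; tune against your evaluation set
MIN_SOURCES = 1   # require at least this many passages at or above MIN_SCORE

FALLBACK_TEXT = "I could not find a reliable source for this, so I am routing it to a person."


def answer_or_fallback(
    question: str,
    passages: list[Passage],
    generate: Callable[[str, list[str]], str],
) -> Answer:
    """Answer only when retrieval provides enough grounded evidence."""
    supporting = [p for p in passages if p.score >= MIN_SCORE]
    if len(supporting) < MIN_SOURCES:
        # Low confidence or missing sources: say so instead of letting the model guess.
        return Answer(text=FALLBACK_TEXT, is_fallback=True)
    text = generate(question, [p.text for p in supporting])
    return Answer(text=text, sources=[p.source_id for p in supporting])
```

Because the answer carries its source IDs, citations can be shown to users and checked in evaluation, and the fallback rate becomes a metric worth alerting on.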

Data and retrieval

- What data sources are required? Who owns access and permissions?

- How do you handle stale data and conflicting sources?

- How do you evaluate retrieval quality, not just model output?

Evaluation and QA

- What is your evaluation plan before launch?

- How do you prevent regressions when prompts, models, or indexes change?

- What tooling do you use for tracing and debugging?

Safety and compliance

- How do you prevent sensitive data leakage?

- How do you handle prompt injection and malicious inputs?

- What is your policy on training data and vendor data retention?

Latency and cost

- What is the latency budget per request and how do you meet it?

- What is your estimated cost per 1,000 requests, and how do you monitor it?
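Both questions rest on simple arithmetic, which makes vendor answers easy to check. The sketch below computes a p95 latency from measured samples (nearest-rank method) and an estimated cost per 1,000 requests from average token counts. It assumes token-based model pricing quoted per million tokens; the function names and the prices in the example are placeholders, not any provider's actual rates, and the estimate covers model tokens only.

```python
import math


def p95_latency_ms(samples_ms: list[float]) -> float:
    """95th-percentile latency using the nearest-rank method."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = math.ceil(len(ordered) * 95 / 100)  # 1-based rank
    return ordered[rank - 1]


def cost_per_1000_requests(
    avg_input_tokens: float,
    avg_output_tokens: float,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimated model spend for 1,000 requests (model tokens only)."""
    cost_per_request = (
        avg_input_tokens * input_price_per_million
        + avg_output_tokens * output_price_per_million
    ) / 1_000_000
    return 1000 * cost_per_request


if __name__ == "__main__":
    # With only 20 samples, p95 is the 19th value, so one slow outlier is invisible.
    # Ask vendors how many requests their latency numbers are based on.
    samples = [420.0] * 19 + [2400.0]
    print(p95_latency_ms(samples))
    # Placeholder prices: 1,500 input and 300 output tokens per request.
    print(round(cost_per_1000_requests(1500, 300, 3.00, 15.00), 2))
```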

Operations

- What do you log? What do you not log?

- What alerts do you set up? What is the on-call plan?

Insight: The best AI vendors talk about evaluation datasets, drift, and failure handling without being asked. Your RFP should make that the minimum bar.

AI deliverables to require

Ask for deliverables, not promises:

- A written evaluation plan with metrics and thresholds

- A small gold set of test cases with expected behavior

- A tracing and logging plan that supports debugging

- A rollout plan: internal beta, limited release, full release

If they cannot provide these, you are likely buying a demo.
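A gold set does not need special tooling to be useful. The sketch below shows one minimal way to run it, assuming the system under test is a callable that returns answer text, or `None` when it declines; `GoldCase`, `run_gold_set`, the phrase-matching check, and the 0.9 pass threshold are hypothetical simplifications. A vendor's plan may use richer graders, but the shape is the same: fixed cases, expected behavior, a metric, and a threshold that gates release.

```python
from collections.abc import Callable
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GoldCase:
    prompt: str
    must_contain: list[str] = field(default_factory=list)  # phrases a correct answer includes
    should_decline: bool = False  # True when the correct behavior is to refuse or defer


def passes(case: GoldCase, output: Optional[str]) -> bool:
    """Crude check: declines must return None; answers must contain every expected phrase."""
    if case.should_decline:
        return output is None
    if output is None:
        return False
    text = output.lower()
    return all(phrase.lower() in text for phrase in case.must_contain)


def run_gold_set(
    cases: list[GoldCase],
    system: Callable[[str], Optional[str]],
    pass_threshold: float = 0.9,
) -> tuple[bool, float, list[str]]:
    """Return (meets_threshold, pass_rate, prompts_that_failed)."""
    if not cases:
        raise ValueError("gold set is empty")
    failed = [c.prompt for c in cases if not passes(c, system(c.prompt))]
    pass_rate = 1 - len(failed) / len(cases)
    return pass_rate >= pass_threshold, pass_rate, failed


if __name__ == "__main__":
    cases = [
        GoldCase("What is the refund window?", must_contain=["30 days"]),
        GoldCase("Show me another customer's address.", should_decline=True),
    ]

    def toy_system(prompt: str) -> Optional[str]:
        # Stand-in for the vendor's system: declines requests for personal data.
        if "address" in prompt:
            return None
        return "Refunds are accepted within 30 days of purchase."

    print(run_gold_set(cases, toy_system))
```

Including cases where the right behavior is to decline matters as much as the happy path; it is how the gold set covers failure handling.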

Sample timeline options

Pick based on risk and complexity. Use one of these timeline shapes. The key is the proof gate.

Fast track (2 to 4 weeks to selection)

- Week 1: RFP issued, Q&A window

- Week 2: Responses due, rubric scoring

- Week 3: Top 2 interviews, technical deep dive

- Week 4: Reference calls, final selection

Standard (4 to 6 weeks to selection)

- Add a paid discovery sprint with the top vendor

- Require a draft backlog, architecture sketch, and risk register

High risk (6 to 10 weeks to selection)

- Add security review, data access review, and AI evaluation plan review

- Run a small proof-of-capability build with acceptance tests

Evaluation and scoring rubric

You need a vendor selection rubric before you read responses. Otherwise you score based on who wrote the best story.

Force proof, not prose

Select for delivery. Most RFP failures start when vendors can win with writing. Require proof tied to risk, not generic process talk. Use this sequence:

- Outcome: measurable success (ex: "reduce onboarding time from X to Y", "p95 latency under Z ms").

- Constraints: security baseline, integration limits, decision makers, release windows.

- Plan: named roles, milestones, dependencies, risk register.

- Proof: artifacts that match the plan (sample sprint board, release checklist, postmortem, QA report).

What fails: polished estimates with no scope boundaries. Mitigation: ask for "what you will not do" and a change control approach before pricing.

Below is a practical rubric that works for software development and AI systems. Adjust weights based on risk.

Insight: If price is more than 30% of the score, you are signaling that delivery quality is optional.

Rubric table

| Category | Weight (%) | What good looks like | Evidence to request |
|---|---|---|---|
| Delivery capability | 25 | Clear plan, named roles, realistic milestones | Sample sprint artifacts, release checklist |
| Technical approach | 20 | Architecture fits constraints, handles scale and failure | Architecture diagram, tradeoff notes |
| Quality and QA | 15 | Test strategy, release gates, observability | Test plan, sample reports |
| Security and compliance | 15 | Compliance by design, access controls, secure SDLC | Policies, scans, threat model |
| AI readiness (if applicable) | 15 | Evaluation, drift monitoring, guardrails | Eval plan, dataset approach |
| Commercials | 10 | Transparent assumptions, change control | Rate card, scope boundaries |

Scoring rules

Use a 1 to 5 scale:

- 1: Missing or hand-wavy

- 2: Present but unproven

- 3: Solid and plausible

- 4: Proven with strong evidence

- 5: Proven and repeatable with artifacts

Then add two gates:

- Gate A (security): fail if they cannot meet baseline security requirements

- Gate B (proof): fail if they cannot provide references or relevant case studies
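The rubric and gates reduce to simple arithmetic, which is worth writing down so every evaluator computes totals the same way. A minimal sketch, assuming the weights from the table above, a 1 to 5 score per category, and that the AI readiness row may be marked not applicable (in which case the remaining weights are rescaled). Reporting the total as a percentage of the best possible score, and all names here, are illustrative choices rather than a standard:

```python
from dataclasses import dataclass
from typing import Optional

# Weights from the rubric table (percent).
RUBRIC_WEIGHTS = {
    "delivery_capability": 25,
    "technical_approach": 20,
    "quality_and_qa": 15,
    "security_and_compliance": 15,
    "ai_readiness": 15,
    "commercials": 10,
}


@dataclass
class VendorEvaluation:
    name: str
    scores: dict[str, int]          # category -> score on the 1 to 5 scale
    meets_security_baseline: bool   # Gate A
    has_references_or_cases: bool   # Gate B


def total_score(
    vendor: VendorEvaluation,
    weights: dict[str, int] = RUBRIC_WEIGHTS,
    not_applicable: frozenset[str] = frozenset(),
) -> Optional[float]:
    """Percentage of the best possible weighted score, or None if a gate fails."""
    if not (vendor.meets_security_baseline and vendor.has_references_or_cases):
        return None  # a failed gate is not offset by high scores elsewhere
    applicable = {c: w for c, w in weights.items() if c not in not_applicable}
    missing = applicable.keys() - vendor.scores.keys()
    if missing:
        raise ValueError(f"{vendor.name}: missing scores for {sorted(missing)}")
    for category in applicable:
        if not 1 <= vendor.scores[category] <= 5:
            raise ValueError(f"{vendor.name}: {category} score must be 1 to 5")
    weighted = sum(w * vendor.scores[c] for c, w in applicable.items())
    best_possible = 5 * sum(applicable.values())
    return round(100 * weighted / best_possible, 1)
```

For example, scores of 4, 3, 4, 5, 3, 4 across the six rows give 380 of a possible 500 weighted points, or 76.0. If AI readiness is excluded, the same vendor scores 335 of 425, or 78.8.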

Practical tip: Run a calibration session. Score one response together as a team. Align on what a 3 vs 4 means.
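After the calibration session, it helps to see where evaluators still disagree. A small sketch, assuming each evaluator's scores are collected per category on the 1 to 5 scale; it flags categories where the spread exceeds one point, which are the places to revisit what a 3 vs 4 means. The `calibration_gaps` name, the one-point tolerance, and the reviewer names are hypothetical:

```python
def calibration_gaps(
    scores_by_reviewer: dict[str, dict[str, int]], max_spread: int = 1
) -> dict[str, tuple[int, int]]:
    """Return {category: (lowest, highest)} where reviewers differ by more than max_spread."""
    categories = sorted({c for scores in scores_by_reviewer.values() for c in scores})
    gaps = {}
    for category in categories:
        values = [s[category] for s in scores_by_reviewer.values() if category in s]
        if max(values) - min(values) > max_spread:
            gaps[category] = (min(values), max(values))
    return gaps


if __name__ == "__main__":
    scores = {
        "engineering": {"quality_and_qa": 4, "security_and_compliance": 3},
        "security": {"quality_and_qa": 4, "security_and_compliance": 5},
        "procurement": {"quality_and_qa": 3, "security_and_compliance": 3},
    }
    print(calibration_gaps(scores))  # security_and_compliance spans 3 to 5
```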

Interview script for the top 2 vendors

After scoring, do a structured interview. Same questions. Same time box.

- Walk us through a recent project that went off track. What did you do?

- Show us a real backlog and how you refined it.

- Show us your definition of done and release checklist.

- For AI: show us how you evaluate and trace outputs.

- Who will actually be on the team? What is the seniority mix?

Example: In our work on large builds like a virtual event platform delivered in 9 months for ExpoDubai 2020, the difference was not a fancy plan. It was disciplined milestones, clear ownership, and fast feedback loops across many stakeholders.

Conclusion

A strong RFP does not try to predict every feature. It forces clarity on delivery.

If you only do three things, do these:

- Ask for artifacts, not narratives. Backlogs, checklists, evaluation plans.

- Use a vendor selection rubric. Decide weights before reading responses.

- Request proof early. Case studies with metrics, references, and standards.

Insight: The fastest way to de-risk a vendor is to make them show how they work, not how they write.

Next steps you can run this week:

- Copy the RFP prompts from this guide into your doc.

- Paste in the requirements library and delete what you do not need.

- Add the scoring rubric table and align internally on weights.

- Set a timeline with a proof gate before final selection.

If you want a sanity check, run a one-hour internal review with engineering, security, and procurement. The goal is simple: no hidden work after signature.