Introduction

Multi vendor programs fail in boring ways.

Not because teams cannot code. Because nobody can answer simple questions with confidence:

- Who can approve a design change?

- What happens when Vendor A blocks Vendor B?

- Which risks are real, and which are noise?

- If an auditor asks for evidence, where is it?

Delivery governance is the system that makes those answers repeatable. In regulated industries, it is also your insurance policy. GDPR, HIPAA, and PCI DSS do not care that the program had “a lot going on.” They care that you can prove control.

Insight: Multi vendor delivery governance is less about meetings and more about decision latency. If decisions take 10 days, your schedule will slip even if every sprint “hits velocity.”

This guide is written for consulting engineering leaders running enterprise programs with multiple vendors and internal teams. It focuses on observable mechanics: cadences, escalation paths, architecture sign off, dependency management, and audit ready documentation.

You will also get program governance templates you can copy into your operating model.

What good looks like

You know governance is working when:

- Escalations are rare, but fast when needed

- Architecture decisions are reversible when possible, and documented when not

- Dependencies are visible 2 to 6 weeks ahead, not discovered in standups

- Executive stakeholders get one page they can trust

- Audit evidence is produced from normal delivery work, not a scramble at the end

Example: In our delivery work across 360+ projects since 2012, the programs that shipped predictably were not the ones with the most process. They were the ones with the clearest decision rights and the smallest “unknown owner” surface area.

Delivery proof points

What we see in real programs

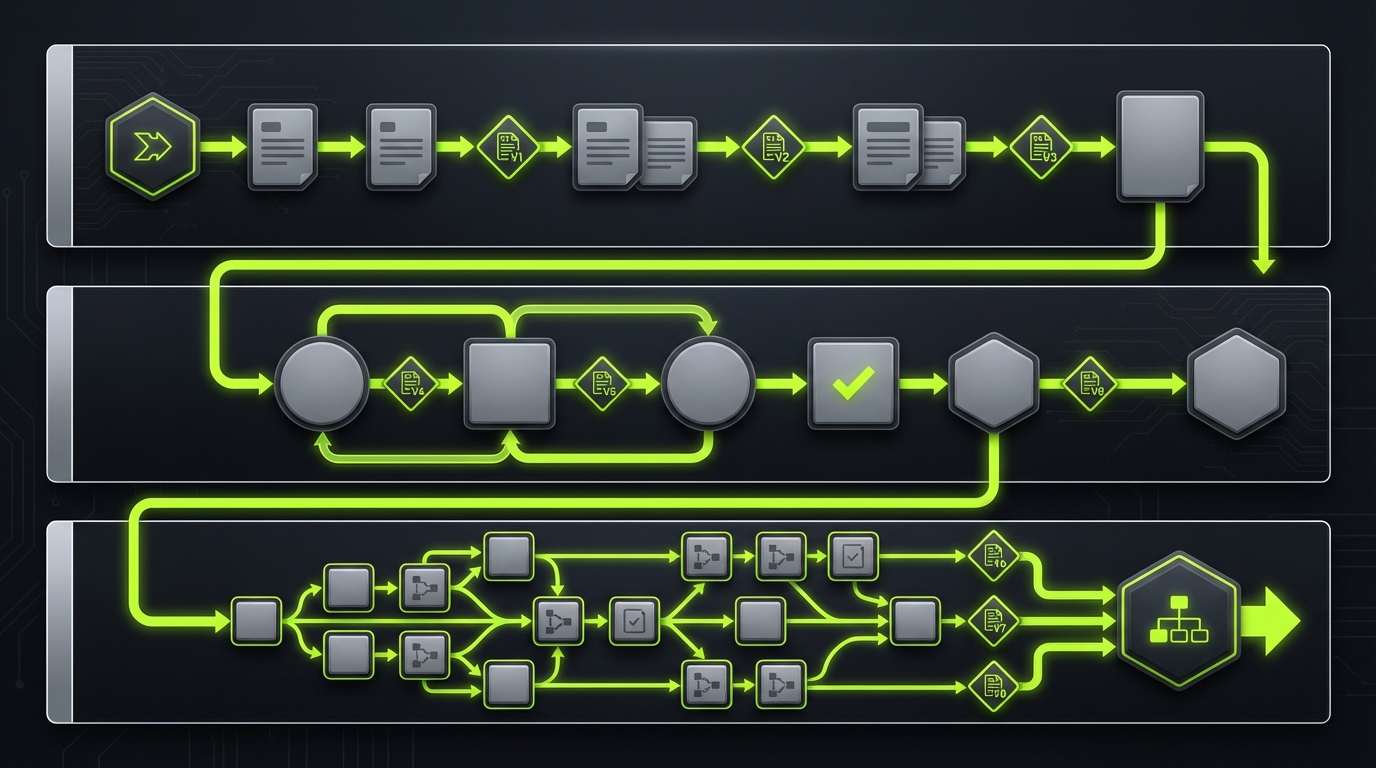

Set up governance in 10 days

A practical rollout plan for consulting leaders

Why multi vendor governance breaks

Most programs start with a reasonable plan. Then reality shows up: different incentives, different definitions of “done,” and different risk tolerance.

Common failure modes in vendor management for software projects:

- Competing backlogs. Each vendor optimizes for its contract, not the end to end outcome.

- Invisible dependencies. Integration work is treated as “later,” then becomes “urgent.”

- Design drift. Teams implement local optimizations that conflict at the system level.

- Escalation theater. Problems bounce between steering committees because nobody owns the decision.

- Audit panic. Evidence is requested after the fact, when context is gone.

Key Stat (observation): In regulated programs, the most expensive delays often come from rework triggered by late security and compliance feedback. If you cannot show a signed decision trail, you will repeat the same debate.

The hidden cost of unclear decision rights

If you want one metric that predicts chaos, track decision lead time.

Measure:

- Time from “question raised” to “decision recorded”

- Number of decision makers involved

- Number of reversals
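
To make the three measures concrete, here is a minimal sketch that computes them from a decision log. The `DecisionRecord` fields, the sample entries, and the choice of calendar days over business days are all assumptions for illustration, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class DecisionRecord:
    """One row of a decision log (field names are illustrative)."""
    question: str
    raised: date
    recorded: Optional[date]  # None while the decision is still open
    decision_makers: int
    reversals: int = 0


def lead_time_days(record: DecisionRecord, today: date) -> int:
    """Calendar days from 'question raised' to 'decision recorded'.

    Open decisions are measured up to today so they cannot hide.
    """
    end = record.recorded or today
    return (end - record.raised).days


def summarize(records: list[DecisionRecord], today: date) -> dict:
    """Aggregate the three measures for a weekly review."""
    times = [lead_time_days(r, today) for r in records]
    return {
        "median_lead_time_days": median(times),
        "max_decision_makers": max(r.decision_makers for r in records),
        "total_reversals": sum(r.reversals for r in records),
        "open_decisions": sum(1 for r in records if r.recorded is None),
    }


# Hypothetical sample data.
sample_log = [
    DecisionRecord("Adopt schema v3?", date(2024, 3, 1), date(2024, 3, 4), 2),
    DecisionRecord("Shared auth provider?", date(2024, 3, 1), date(2024, 3, 11), 5, reversals=1),
    DecisionRecord("Staging environment owner?", date(2024, 3, 6), None, 3),
]
summary = summarize(sample_log, today=date(2024, 3, 12))
print(summary)
```

Counting open decisions up to today is a deliberate choice in this sketch: otherwise the slowest decisions would stay out of the metric until they close.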

When decision lead time grows, teams compensate with:

- Over documentation that nobody reads

- Side agreements between vendors

- Shadow architecture and duplicated components

None of that shows up in sprint metrics. It shows up in missed milestones and brittle releases.

Regulated industries add a second program

In finance, healthcare, and defense, you are running two programs at once:

- Product delivery

- Evidence delivery

If evidence is not baked into the delivery workflow, you will pay for it later with:

- Delayed go live due to security sign off

- Repeated penetration tests because scope changed

- Risk exceptions that expire mid program

A practical stance: treat governance artifacts as production assets. Version them. Review them. Retire them.

Program governance templates pack

Copy and paste starters

Includes:

- Weekly delivery governance agenda

- Biweekly architecture review agenda

- One page weekly exec brief format

- Dependency register fields

- ADR template

- Acceptance criteria examples

Use it as a baseline, then tune it to your org’s risk profile and release cadence.

Escalation decision log

Keep it boring and fast

A simple table you can keep in your program repo:

| Date | Trigger | Owner | Decision needed | Options | Decision | Due date | Status |
|---|---|---|---|---|---|---|---|

Rules:

- No escalation without a decision statement.

- No decision without an owner and due date.

- Close the loop in the next weekly exec brief.
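
If the log lives in a repo, the first two rules can be checked automatically before an entry is accepted. The sketch below is one possible shape: the `Escalation` class and `rule_violations` helper are hypothetical names, and the third rule (closing the loop in the exec brief) is left as a manual step.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Escalation:
    """A subset of the log columns, enough to check the rules."""
    trigger: str
    decision_needed: str
    owner: Optional[str] = None
    due_date: Optional[date] = None
    decision: Optional[str] = None


def rule_violations(entry: Escalation) -> list[str]:
    """Return the rules an entry breaks; an empty list means it can be logged."""
    problems = []
    if not entry.decision_needed.strip():
        problems.append("missing decision statement")
    if not entry.owner:
        problems.append("missing owner")
    if entry.due_date is None:
        problems.append("missing due date")
    return problems


# Hypothetical entries: one complete, one that should be rejected.
complete = Escalation(
    trigger="Dependency will miss milestone",
    decision_needed="Approve a two week delay or descope the export feature",
    owner="Program director",
    due_date=date(2024, 3, 8),
)
incomplete = Escalation(trigger="Vendor A is blocked", decision_needed="")

print(rule_violations(complete))
print(rule_violations(incomplete))
```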

Cadences and escalation paths

Governance cadences should reduce uncertainty, not create calendar debt. Keep them few, predictable, and tied to decisions.

Dependencies With Owners

Stop managing blockers in Slack

Treat dependencies as backlog items so they have owners, dates, and history. Minimum fields:

- Owner (one person)

- Consumer and provider teams

- Needed by date (tied to a milestone)

- Interface or artifact (API spec, schema, environment)

- Acceptance criteria

- Risk rating (low, medium, high)

Weekly checklist:

- What changed

- What moved into the next 2 weeks

- What is blocked and why

- Which cross vendor integration tests are missing

What to measure (hypothesis): dependency lead time and reopen rate. If reopen rate is high, acceptance criteria are vague or interface contracts are drifting.

Here is a cadence set that works for multi vendor delivery governance:

- Daily cross vendor integration sync (15 minutes). Only for teams touching shared interfaces.

- Weekly delivery governance (60 minutes). Decisions, risks, dependencies, scope moves.

- Biweekly architecture review (60 to 90 minutes). Design authority decisions and exceptions.

- Monthly steering (45 minutes). Budget, timeline, outcome metrics, major risks.

Insight: If the weekly governance meeting becomes a status readout, you have already lost. Status belongs in async reporting. Meetings are for decisions.

Escalation path that actually works

Define escalation as a product feature: clear inputs, clear outputs.

Escalation triggers (pick a small set):

- A dependency will miss a milestone by more than 5 business days

- A security or compliance requirement is at risk

- A vendor cannot meet acceptance criteria after two iterations

- A design decision impacts more than one vendor team

Escalation levels:

- Level 1: Delivery leads. Resolve within 24 hours.

- Level 2: Program director and design authority. Resolve within 48 hours.

- Level 3: Steering group. Resolve within 5 business days.

Rules that keep it clean:

- Every escalation has a single owner.

- Every escalation ends with a recorded decision, not a “next discussion.”

- If a decision cannot be made, record what data is needed and who will produce it.
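
The resolution windows above can be turned into concrete deadlines. This is a sketch under stated assumptions: the 24 and 48 hour windows are treated as wall clock hours, business days are Monday to Friday with no holiday calendar, and the clock starts when the escalation enters a level. The function names are illustrative.

```python
from datetime import datetime, timedelta


def add_business_days(start: datetime, days: int) -> datetime:
    """Advance by whole business days (Monday to Friday), keeping the time of day."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 .. Friday=4
            remaining -= 1
    return current


def resolve_by(level: int, entered_level_at: datetime) -> datetime:
    """Deadline for a decision at the given escalation level."""
    if level == 1:
        return entered_level_at + timedelta(hours=24)
    if level == 2:
        return entered_level_at + timedelta(hours=48)
    if level == 3:
        return add_business_days(entered_level_at, 5)
    raise ValueError(f"unknown escalation level: {level}")


def is_overdue(level: int, entered_level_at: datetime, now: datetime) -> bool:
    """True when the level's resolution window has passed without a decision."""
    return now > resolve_by(level, entered_level_at)


# Hypothetical escalation raised on a Friday morning.
entered = datetime(2024, 3, 1, 9, 0)
for level in (1, 2, 3):
    print(level, resolve_by(level, entered))
```

Whether an overdue escalation moves up a level automatically is a policy choice the program has to make; this sketch only reports the deadline.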

Callout: The fastest programs treat escalations as time boxed decisions, not as blame assignments.

Efficient meeting templates

Use templates that force preparation and decision making.

Weekly delivery governance agenda (60 minutes):

- 5 min: Changes since last week (scope, timeline, staffing)

- 10 min: Dependency heatmap review (top 5 only)

- 15 min: Decision log review (new decisions needed)

- 15 min: Risks and mitigations (top 3 only)

- 10 min: Escalations and owners

- 5 min: Confirm next week’s critical path

Biweekly architecture review agenda (60 to 90 minutes):

- 10 min: New architecture decision records proposed

- 30 min: Deep dive on 1 to 2 decisions (tradeoffs, security, ops)

- 10 min: Exceptions and technical debt register

- 10 min: Cross vendor interface changes

- 10 min: Confirm sign off, owners, and dates

If you want a simple rule: no pre read, no meeting. Put the pre read in the invite and require comments 24 hours before.

Decision speed

Short, recorded, owned

Reduce decision lead time with clear authority and tight agendas.

Compliance by design

Evidence from day one

Bake GDPR, HIPAA, and PCI DSS controls into definitions of done and artifacts.

Self directed teams

Clear interfaces, fewer meetings

Vendors move faster when contracts, acceptance criteria, and escalation paths are explicit.

Core controls to implement

Minimum viable multi vendor delivery governance

Decision log

Every cross vendor decision is recorded, owned, and time boxed.

Design authority

Small group with clear accountability for shared architecture and exceptions.

Interface contracts

Versioned APIs and schemas with backward compatibility rules.

Dependency heatmap

Weekly view of what blocks what, with dates and owners.

Release readiness checks

Operational, security, and quality gates tied to acceptance criteria.

Audit evidence trail

Docs and reports generated from normal delivery work, not end of program cleanup.

Design authority and architecture sign off

Multi vendor programs need a design authority. Not a committee that debates. A small group that can decide.

Cadences That Create Decisions

Fewer meetings, sharper outputs

Use meetings only when they produce a decision or unblock work. Everything else goes async. Cadence set that works:

- Daily integration sync (15 min): only teams touching shared interfaces.

- Weekly delivery governance (60 min): decisions, risks, dependencies, scope moves.

- Biweekly architecture review (60 to 90 min): design authority calls and exceptions.

- Monthly steering (45 min): budget, timeline, outcome metrics, top risks.

Anti pattern: Weekly governance becomes a status readout. Mitigation: require pre read status in a standard template; meeting agenda is only “decide, escalate, or assign.” Measure: % of agenda items with a recorded decision.

Design authority responsibilities:

- Approve architecture decision records (ADRs)

- Own non functional requirements (security, performance, resilience)

- Control shared interfaces and reference implementations

- Approve exceptions with expiry dates

Insight: Architecture sign off is not a one time gate. It is a continuous control loop. Every interface change is an architecture event.

RACI for design decisions

Keep roles explicit. Here is a baseline RACI you can adapt.

| Decision type | Responsible | Accountable | Consulted | Informed |
|---|---|---|---|---|
| Shared API contract changes | Owning vendor tech lead | Design authority chair | Other vendor leads, security | Program stakeholders |
| Data model changes | Data lead | Design authority | Compliance, analytics | All teams |
| Security control selection | Security lead | CISO delegate or security owner | Vendors, platform | Steering |
| Release readiness | Release manager | Program director | QA, ops, vendors | Business owners |

If “Accountable” is unclear, decisions will drift to whoever speaks loudest in the room.

Architecture decision record pattern

Keep ADRs short. One page is enough.

ADR template:

- Title

- Status (proposed, accepted, superseded)

- Context (what changed)

- Decision (what we will do)

- Alternatives considered (2 to 3)

- Consequences (good and bad)

- Security and compliance notes (GDPR, HIPAA, PCI DSS as applicable)

- Rollout plan and owners

For regulated programs, add:

- Data classification impact

- Logging and retention impact

- Access control impact
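
If ADRs are kept in a repo, the template can be expressed as a small data structure so that missing or malformed fields are caught before review. The sketch below is one way to do that; the `ADR` class, its field names, and the sample record are assumptions, not a standard format.

```python
from dataclasses import dataclass, field

ALLOWED_STATUSES = ("proposed", "accepted", "superseded")


@dataclass
class ADR:
    """Architecture decision record mirroring the template fields."""
    title: str
    status: str
    context: str
    decision: str
    alternatives: list[str]
    consequences: str
    compliance_notes: str
    rollout: str
    # Extra fields for regulated programs, e.g. "Data classification impact".
    regulated_impacts: dict[str, str] = field(default_factory=dict)

    def problems(self) -> list[str]:
        """Return template violations; an empty list means ready for review."""
        issues = []
        if self.status not in ALLOWED_STATUSES:
            issues.append(f"unknown status: {self.status}")
        if not 2 <= len(self.alternatives) <= 3:
            issues.append("expected 2 to 3 alternatives")
        return issues

    def render(self) -> str:
        """Render the record as plain text, one field per line."""
        lines = [
            f"Title: {self.title}",
            f"Status: {self.status}",
            f"Context: {self.context}",
            f"Decision: {self.decision}",
            "Alternatives considered: " + "; ".join(self.alternatives),
            f"Consequences: {self.consequences}",
            f"Security and compliance notes: {self.compliance_notes}",
            f"Rollout plan and owners: {self.rollout}",
        ]
        lines += [f"{name}: {impact}" for name, impact in self.regulated_impacts.items()]
        return "\n".join(lines)


# Hypothetical record for a shared interface decision.
adr = ADR(
    title="Version the orders API with additive changes only",
    status="proposed",
    context="Two vendors consume the orders schema and release on different cadences.",
    decision="Breaking changes require a new major version and a migration window.",
    alternatives=["Lockstep releases", "Consumer driven contract tests only"],
    consequences="Slower removal of old fields; fewer integration breaks.",
    compliance_notes="No change to cardholder data scope (PCI DSS).",
    rollout="Owning vendor tech lead; effective from the next release.",
    regulated_impacts={"Logging and retention impact": "None"},
)
print(adr.render())
```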

Example: When building AI and data heavy features, we have seen teams ship fast and then struggle to explain regressions. ADRs help because you can tie behavior changes to model versions, retrieval index updates, and prompt changes. That is not theory. It shows up in support tickets that say “it got worse.”

What you get when it works

Fewer surprises

Dependencies and risks surface early, when you can still change course cheaply.

Cleaner vendor boundaries

Teams stop debating scope because interfaces and acceptance criteria are explicit.

Faster sign offs

Architecture and security decisions are made in days, not weeks, with a documented trail.

Audit confidence

You can answer who approved what, when, and why without a scramble.

Dependencies, acceptance criteria, and audit ready reporting

This is where multi vendor delivery governance becomes real. Dependencies and acceptance criteria are the difference between “we finished our sprint” and “the program ships.”

Decision Latency Kills Delivery

Governance as a control system

Problem: Multi vendor programs slip even when teams “hit velocity” because decisions take too long. What to do:

- Track decision latency like a metric: request date → decision date → implementation date.

- Assign a single decision owner per decision type (design change, scope move, risk acceptance). No shared ownership.

- Define a default path: if no decision in X days, escalate to Y. Put it in writing.

What fails in practice: Steering committees that only “review” and never decide. Mitigation: require a recorded outcome for every decision (approve, reject, defer with date).

Dependency management across vendors

Treat dependencies as first class backlog items.

Minimum fields for each dependency:

- Owner (single person, not a team)

- Consumer and provider teams

- Needed by date (tied to a milestone)

- Interface or artifact (API spec, schema, environment)

- Acceptance criteria

- Risk rating (low, medium, high)

Dependency review checklist (weekly):

- What changed since last week?

- Which dependencies moved into the next 2 weeks?

- Which dependencies are blocked, and why?

- Are we missing any cross vendor integration tests?
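
The minimum fields and two of the weekly checklist questions map directly onto a small register. This is a sketch only: the `Dependency` class, the helper names, and the sample entries are hypothetical, and in practice the data would usually come from your ticket system rather than a script.

```python
from dataclasses import dataclass
from datetime import date, timedelta

RISK_RATINGS = ("low", "medium", "high")


@dataclass
class Dependency:
    """One dependency with the minimum fields listed above."""
    name: str
    owner: str  # a single person, not a team
    consumer: str
    provider: str
    needed_by: date  # tied to a milestone
    artifact: str  # API spec, schema, environment
    acceptance_criteria: str
    risk: str
    blocked_reason: str = ""  # empty when not blocked

    def __post_init__(self) -> None:
        if self.risk not in RISK_RATINGS:
            raise ValueError(f"risk must be one of {RISK_RATINGS}")


def due_within(deps: list[Dependency], today: date, days: int = 14) -> list[Dependency]:
    """Which dependencies moved into the next 2 weeks?"""
    horizon = today + timedelta(days=days)
    return [d for d in deps if today <= d.needed_by <= horizon]


def blocked(deps: list[Dependency]) -> list[Dependency]:
    """Which dependencies are blocked, and why?"""
    return [d for d in deps if d.blocked_reason]


# Hypothetical register entries.
register = [
    Dependency("Orders API v3 spec", "A. Rivera", "Vendor B", "Vendor A",
               date(2024, 3, 12), "API spec", "Contract tests pass against v3",
               "high", blocked_reason="Staging environment not provisioned"),
    Dependency("Customer schema", "J. Chen", "Vendor A", "Internal data team",
               date(2024, 4, 20), "schema", "Schema published and versioned", "low"),
]
today = date(2024, 3, 4)
print([d.name for d in due_within(register, today)])
print([(d.name, d.blocked_reason) for d in blocked(register)])
```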

Insight: If you do not have a dependency board, you will manage dependencies in Slack. A chat thread does not give you an owner, a due date, or a decision history you can audit.

Acceptance criteria patterns

In multi vendor programs, vague acceptance criteria are a contract loophole.

Use patterns that force testability:

- Interface contract: “Given schema v3, when client sends X, API returns Y within Z ms at P95.”

- Security control: “All endpoints handling card data enforce mTLS and are covered by automated SAST and dependency scanning in CI.”

- Data handling: “PII fields are encrypted at rest, masked in logs, and deleted within retention policy.”

- Operational readiness: “Runbook exists, on call rotation defined, and synthetic checks cover top 5 user journeys.”
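
The interface contract pattern is only testable if both sides compute P95 the same way. Here is a minimal sketch using the nearest rank method; that method, the function names, and the sample latencies are assumptions, and your load testing tool may use a different percentile definition.

```python
def percentile_nearest_rank(samples: list[float], pct: int) -> float:
    """Nearest rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in 1..100")
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100, avoiding float rounding surprises.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


def meets_latency_criterion(samples_ms: list[float], threshold_ms: float, pct: int = 95) -> bool:
    """True when the chosen percentile is within the agreed threshold (the Z in the pattern)."""
    return percentile_nearest_rank(samples_ms, pct) <= threshold_ms


# Hypothetical measurements: 20 requests, threshold of 200 ms at P95.
one_slow_request = [100.0] * 19 + [900.0]
two_slow_requests = [100.0] * 18 + [900.0] * 2

print(meets_latency_criterion(one_slow_request, threshold_ms=200))
print(meets_latency_criterion(two_slow_requests, threshold_ms=200))
```

With 20 samples, P95 tolerates one slow request but not two, which is worth agreeing on up front: small sample sizes make percentile criteria sensitive to single outliers.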

Example acceptance criteria (copy ready):

Given: User authentication via OIDC

When: Token expires

Then: Client receives 401 with error code AUTH_001

And: Refresh flow succeeds within 2 seconds P95

And: Event is logged without PII

And: Test coverage includes 1 integration test and 2 negative cases

Weekly exec brief format

Execs do not need sprint details. They need confidence.

One page weekly exec brief:

- Outcomes shipped this week (3 bullets)

- Outcomes at risk (3 bullets)

- Milestones: on track, watch, off track

- Top dependencies (owner, date, risk)

- Top decisions needed (who decides, by when)

- Compliance status (open findings, upcoming reviews)

- Budget and capacity notes (only deltas)

Key Stat (hypothesis to measure): If you keep the exec brief stable for 6 weeks, escalation volume drops because stakeholders stop asking for ad hoc updates. Measure: number of inbound status requests per week.

Audit ready documentation and reporting

Audit ready does not mean “more docs.” It means the right set, kept current.

Audit ready documentation set:

- System context diagram and data flow diagram

- ADR log with sign offs

- Threat model and security controls mapping

- Data processing inventory (GDPR) or HIPAA safeguards mapping

- Access control matrix and role definitions

- Change log and release notes

- Test evidence: CI logs, security scans, penetration test reports

- Incident response runbooks and postmortems

Reporting practices that hold up:

- Link every risk to an owner and mitigation

- Link every mitigation to an artifact (ADR, ticket, control)

- Version everything in the same repo or a controlled system
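
The first two linking practices can be checked mechanically if the risk register is exported as structured data. The sketch below assumes a simple list of dictionaries; the keys (`id`, `owner`, `mitigations`, `summary`, `artifact`) and the sample entries are hypothetical.

```python
def traceability_gaps(risks: list[dict]) -> list[str]:
    """List every risk without an owner or mitigation, and every mitigation without an artifact."""
    gaps = []
    for risk in risks:
        rid = risk["id"]
        if not risk.get("owner"):
            gaps.append(f"{rid}: no owner")
        mitigations = risk.get("mitigations", [])
        if not mitigations:
            gaps.append(f"{rid}: no mitigation")
        for m in mitigations:
            if not m.get("artifact"):
                gaps.append(f"{rid}: mitigation '{m['summary']}' has no artifact")
    return gaps


# Hypothetical register export.
risk_register = [
    {"id": "R1", "owner": "Security lead",
     "mitigations": [{"summary": "Enforce mTLS", "artifact": "ADR-012"}]},
    {"id": "R2", "owner": "",
     "mitigations": [{"summary": "Add retention job", "artifact": ""}]},
    {"id": "R3", "owner": "Data lead", "mitigations": []},
]
for gap in traceability_gaps(risk_register):
    print(gap)
```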

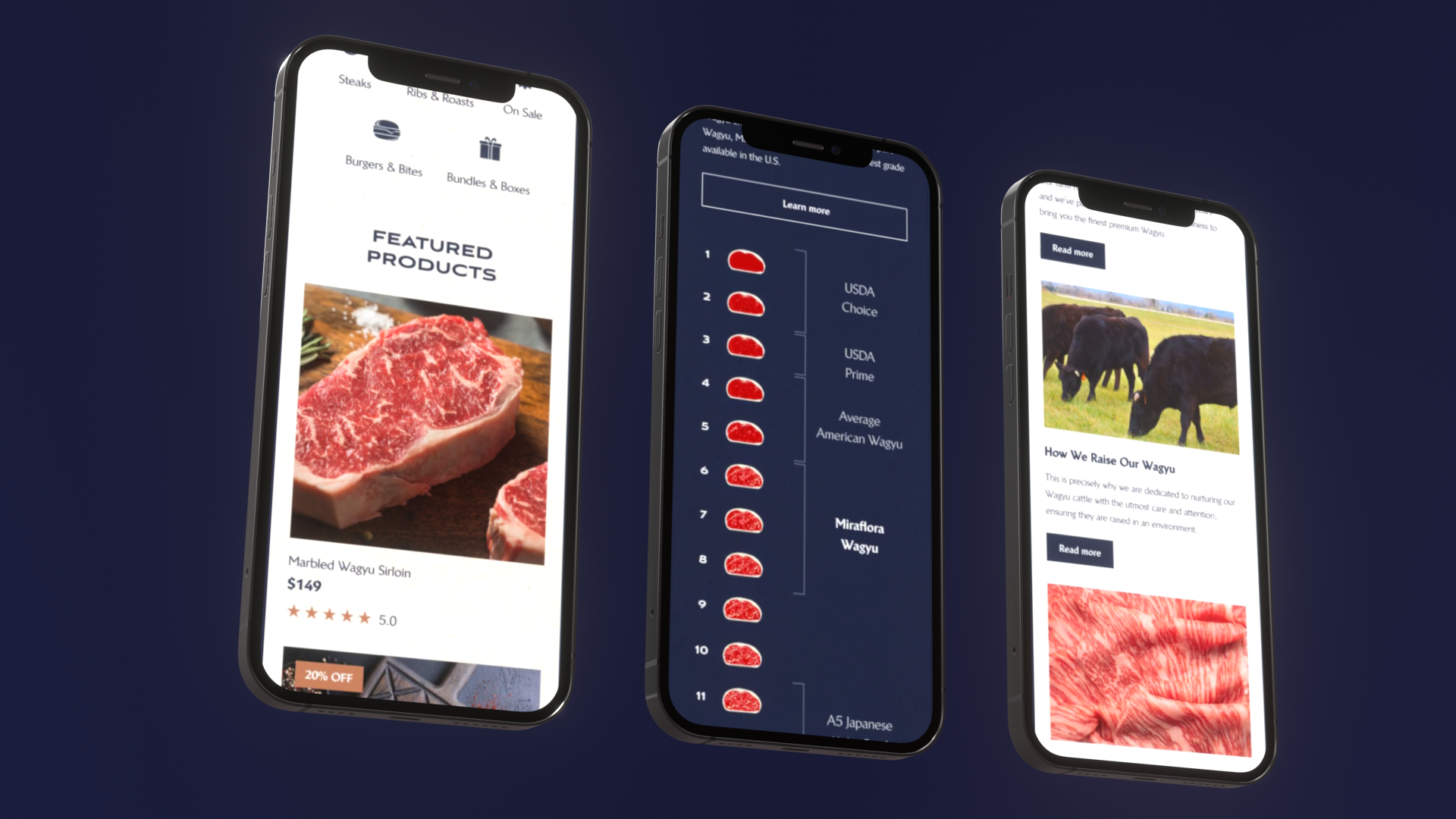

Example: In fast timelines like the 4 week Miraflora Wagyu Shopify delivery, asynchronous communication and clear decision records are what kept feedback loops moving across time zones. Speed came from clarity, not heroics.

Compare reporting options

Pick a reporting stack that matches your compliance needs.

| Option | Good for | Risks | When to use |
|---|---|---|---|
| Spreadsheet based register | Small programs, short timelines | Version drift, weak audit trail | Proof of concept or under 8 weeks |
| Ticket system as source of truth | Most enterprise programs | Requires discipline in fields | Default choice for multi vendor programs |
| GRC tool integration | Regulated, audit heavy orgs | Setup overhead, process rigidity | When audit requirements drive delivery |

Whatever you choose, enforce one rule: no duplicate sources of truth.

What to measure weekly

Avoid vanity metrics. Track signals that predict delivery risk.

Recommended metrics:

- Decision lead time (days)

- Dependency slippage count (week over week)

- Defect escape rate (prod defects per release)

- Security findings aging (days open)

- Change failure rate (percent of releases needing rollback or hotfix)

- Environment readiness (percent of planned test windows actually usable)
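
Two of these metrics are simple enough to compute from release and findings exports. The following sketch shows change failure rate and security findings aging; the record keys (`rolled_back`, `hotfixed`) and the sample data are assumptions about how your tooling exports them.

```python
from datetime import date


def change_failure_rate(releases: list[dict]) -> float:
    """Percent of releases that needed a rollback or hotfix."""
    if not releases:
        return 0.0
    failed = sum(1 for r in releases if r["rolled_back"] or r["hotfixed"])
    return 100 * failed / len(releases)


def findings_aging_days(opened_on: list[date], today: date) -> list[int]:
    """Days each open security finding has been open, oldest first."""
    return sorted(((today - d).days for d in opened_on), reverse=True)


# Hypothetical exports.
releases = [
    {"version": "1.4.0", "rolled_back": False, "hotfixed": False},
    {"version": "1.5.0", "rolled_back": False, "hotfixed": True},
    {"version": "1.6.0", "rolled_back": False, "hotfixed": False},
    {"version": "1.7.0", "rolled_back": False, "hotfixed": False},
]
open_findings = [date(2024, 2, 1), date(2024, 2, 26)]

print(change_failure_rate(releases))
print(findings_aging_days(open_findings, today=date(2024, 3, 4)))
```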

If you run AI features, add:

- Cost per successful request

- Drift indicators (evaluation score trend)

- Hallucination rate on a fixed test set

These are not perfect. They are actionable.

Conclusion

Multi vendor delivery governance is not about controlling people. It is about controlling uncertainty.

If you want a practical starting point, do these in the next two weeks:

- Set a weekly governance cadence with a decision focused agenda.

- Establish a design authority and start writing ADRs for shared interfaces.

- Stand up a dependency board with owners and needed by dates.

- Standardize acceptance criteria patterns across vendors.

- Build an audit ready documentation set that is produced by normal delivery work.

Final thought: The best governance feels boring. That is the point. Boring delivery is how regulated enterprises ship on time.

Next steps you can assign today:

- Nominate escalation owners and publish triggers

- Create the one page weekly exec brief

- Schedule the first architecture review with a clear decision list

- Audit your current docs against the audit ready set and close the top 5 gaps

If you do this well, vendor management for software projects becomes predictable. And predictable is what stakeholders pay for.