Introduction

Investors don’t fund “a product.” They fund a de-risked plan.

An investor-ready MVP is not the smallest thing you can build. It’s the smallest thing that proves:

- A real user has a real problem

- Your solution works in a believable way

- You can ship and measure outcomes

- The next milestones are clear and cheap enough to reach

If you scope the wrong MVP, you get stuck in demo theater. Pretty screens. No proof. No baseline metrics. Then fundraising turns into opinions.

Insight: A good MVP scope is a set of decisions you can defend in one slide: who it’s for, what it does, what it does not do, and what you’ll measure.

This guide is written for founders who need to build an MVP fast without building the wrong thing fast. We’ll use concrete templates, backlog examples, and the same constraints we see in delivery work: limited time, limited budget, and high investor scrutiny.

What “fundable” looks like

In early-stage meetings, the bar is usually not revenue. It’s clarity plus evidence.

Bring proof that you can execute:

- A demo that matches a real workflow

- A measurable activation event (not just page views)

- A simple funnel with drop-off points you can explain

- A roadmap that starts with the demo and works backward

Proof point: Across 360+ projects delivered since 2012, the pattern is consistent: teams that instrument early make faster roadmap calls because they argue from data, not taste.

Delivery proof points

Numbers that matter when you promise speed

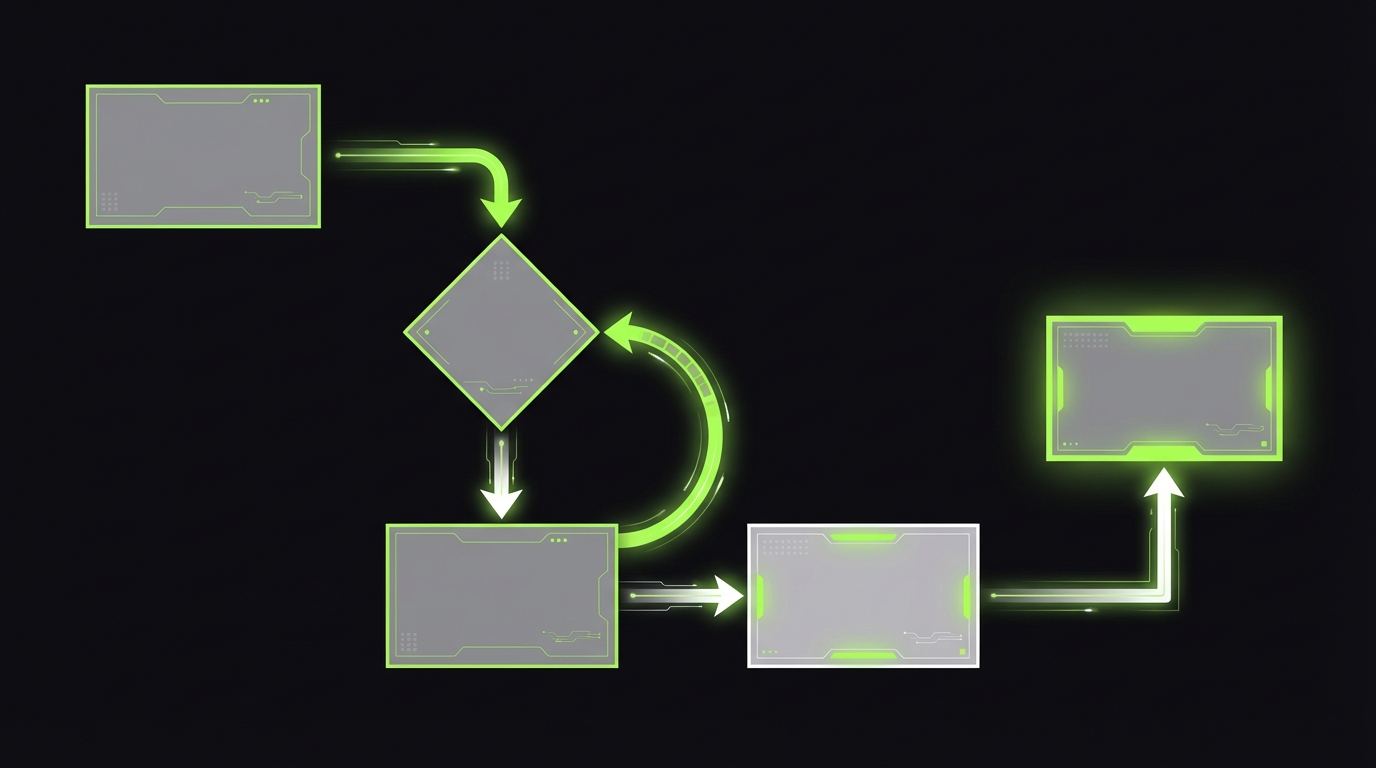

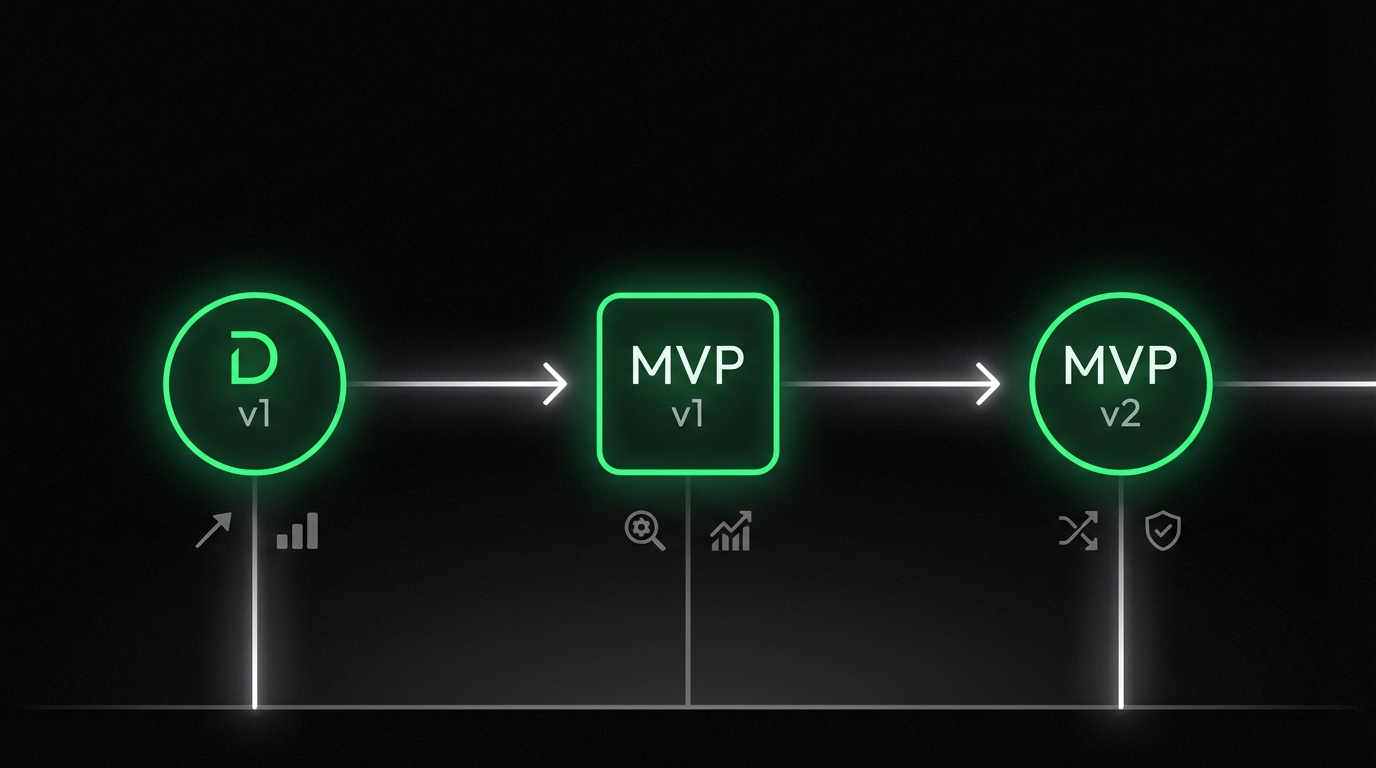

Define MVP, prototype, PoC

Founders and investors often use these words interchangeably. That’s where scope drift begins.

Here’s the clean way to separate them.

| Artifact | Goal | Audience | Must be production-ready? | Typical failure mode |
|---|---|---|---|---|
| PoC | Prove a technical claim is possible | Engineers, technical investors | No | Proves the tech, proves nothing about users |
| Prototype | Prove UX and workflow are understandable | Users, stakeholders | No | Looks real, breaks under real data |
| MVP | Prove value with real users and measurable outcomes | Users, investors | Yes, for the narrow slice | Too many features, no measurement |

A fundable MVP is often a prototype plus production constraints:

- Real auth and roles (even if simple)

- Real data flows (even if small)

- Auditability for key actions (who did what)

- Analytics baseline (what happened, not what you hope happened)

Insight: If you can’t put it in front of a user without apologizing, it’s not an MVP. It’s a prototype.

When each one is correct

Use a PoC when the core risk is technical. Example: can RAG retrieval stay accurate enough for a regulated workflow?

Use a prototype when the core risk is behavioral. Example: will operators follow this workflow, or will they bypass it?

Use an MVP when you’re ready to test value and retention. That means you need:

- A defined ICP and job to be done

- A single primary workflow

- A measurable success event

- A plan to get it into users’ hands within weeks

A quick investor litmus test

Ask yourself:

- Can I onboard a user without me on Zoom?

- Can I show a funnel from signup to success?

- Can I answer “what did users do last week” with numbers?

If the answer is no, you’re likely still in prototype land. That’s fine. Just don’t call it an investor-ready MVP.

MVP scope guardrails

The rules that prevent scope drift

- Backlog cap: 20 to 40 tickets

- Ticket size: 1 to 3 days each

- One workflow: no parallel feature tracks

- Every sprint ends in a demo: if it can’t be shown, it’s too big

- Instrumentation is a feature: events and dashboards are not optional

Use these guardrails in planning meetings. If a request breaks two guardrails, it moves to the post-MVP backlog.
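Three of the guardrails are mechanical: the backlog cap, the ticket size and the single workflow. You can lint them before each planning meeting. Below is a minimal Python sketch. It assumes your tracker can export each ticket with a title, a day estimate and the workflow it serves. `Ticket` and `guardrail_violations` are hypothetical names, not part of any tool.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    """Hypothetical ticket shape; map the fields to your tracker's export."""
    title: str
    estimate_days: float  # engineering estimate in days
    workflow: str         # the user workflow this ticket serves


def guardrail_violations(backlog: list[Ticket]) -> list[str]:
    """Return a readable list of guardrail breaches for an MVP backlog."""
    problems: list[str] = []

    # Backlog cap: 20 to 40 tickets.
    if not 20 <= len(backlog) <= 40:
        problems.append(f"backlog has {len(backlog)} tickets, expected 20 to 40")

    # Ticket size: 1 to 3 days each.
    for t in backlog:
        if not 1 <= t.estimate_days <= 3:
            problems.append(f"{t.title!r} is {t.estimate_days} days, split or cut it")

    # One workflow: no parallel feature tracks.
    workflows = sorted({t.workflow for t in backlog})
    if len(workflows) > 1:
        problems.append(f"parallel tracks: {', '.join(workflows)}")

    return problems


if __name__ == "__main__":
    backlog = [Ticket(f"core step {i}", 2, "core") for i in range(24)]
    backlog.append(Ticket("custom dashboards", 8, "reporting"))
    for problem in guardrail_violations(backlog):
        print(problem)
```

The other two guardrails need a human judgment call. "Every sprint ends in a demo" and "instrumentation is a feature" are better checked in the sprint review.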

What makes an investor-ready MVP

The minimum set that holds up under scrutiny

Narrow production slice

One workflow that works end to end with real data, not mocked screenshots.

Measurable activation

A named success event and a funnel you can show in a dashboard.

Risk controls

Basic security, audit logs for key actions, and guardrails where failure is expensive.

Demo-first roadmap

Milestones planned backward from the demo, with 2 to 4 week increments and metric targets.

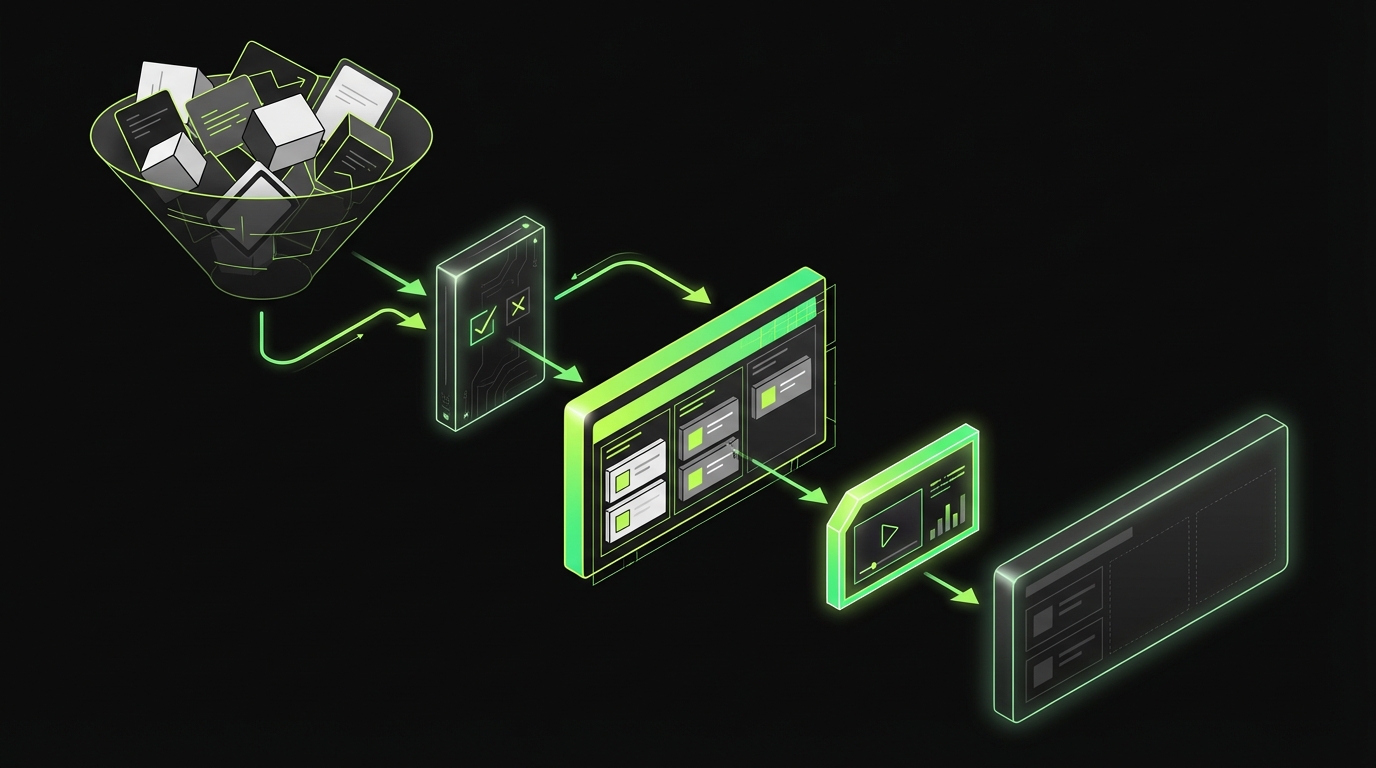

Ruthless feature triage

Most MVPs fail in planning, not in engineering. The scope grows because every feature sounds “small.”

Analytics Baseline, Week One

Proof beats opinions

Investors fund what you can measure. Start with a baseline, not a perfect stack. Track four layers:

- Acquisition: where users came from

- Activation: first success event

- Engagement: repeat use of the core workflow

- Outcome: the result the user cares about

Pick one success event and build the demo around it (example: “completed core action”). Avoid vanity metrics: page views do not show value.

A minimal event taxonomy is enough: signed_up, onboarded, created_core_object, completed_core_action, invited_user, paid. In delivery work on AI products (example: LEDA, built in 10 weeks), we also had to instrument task success and quality signals, not just clicks. If you cannot yet report drop-off, time to first value, and activation counts, say so and show the timeline to get them.

Here’s the rule that holds up in investor conversations:

- One primary user

- One primary workflow

- One measurable outcome

Everything else is either:

- A dependency

- A risk control

- A nice-to-have

The triage questions

Use these questions in backlog grooming. If a feature fails two, it’s out.

- Does it directly enable the primary workflow?

- Does it reduce a known risk (security, compliance, payment failure)?

- Will it change a metric we can measure in the MVP window?

- Can we ship it in days, not weeks?
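If you record grooming decisions somewhere structured, you can make the "fails two, it's out" rule explicit. Here is a minimal sketch. `FeatureRequest`, `failed_checks` and `triage` are illustrative names. Each boolean is a judgment the team records during grooming; the code does not infer it.

```python
from dataclasses import dataclass


@dataclass
class FeatureRequest:
    """One backlog request with the four triage answers recorded by the team."""
    name: str
    enables_primary_workflow: bool
    reduces_known_risk: bool       # security, compliance, payment failure
    moves_measurable_metric: bool  # within the MVP window
    ships_in_days: bool            # days, not weeks


def failed_checks(req: FeatureRequest) -> int:
    """Count how many of the four triage questions the request fails."""
    answers = [
        req.enables_primary_workflow,
        req.reduces_known_risk,
        req.moves_measurable_metric,
        req.ships_in_days,
    ]
    return sum(1 for passed in answers if not passed)


def triage(req: FeatureRequest) -> str:
    """Apply the rule: fail two or more questions and the feature is out."""
    return "out" if failed_checks(req) >= 2 else "in"


if __name__ == "__main__":
    requests = [
        FeatureRequest("invite teammate", True, False, True, True),
        FeatureRequest("custom dashboards", False, False, False, False),
    ]
    for r in requests:
        print(f"{r.name}: {triage(r)} ({failed_checks(r)} failed)")
```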

Insight: “We can add it later” is not a plan. A plan is a named milestone with a metric and a date.

Prioritization framework that stays honest

Use a simple scoring model you can explain on one slide:

- Impact: expected change in the one metric that matters (1 to 5)

- Confidence: evidence level (1 to 5)

- Effort: engineering days (1 to 5)

Score = (Impact × Confidence) / Effort

Then enforce a hard cap:

- MVP backlog: max 20 to 40 tickets

- Each ticket: 1 to 3 days
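Here is the scoring model and the hard cap as a small Python sketch, treating each candidate as one ticket. The 1 to 5 scales and the formula come from the lists above. The `Candidate` class, the `plan` helper and the choice to return the overflow as a "later" list are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    impact: int      # 1 to 5: expected change in the one metric that matters
    confidence: int  # 1 to 5: evidence level
    effort: int      # 1 to 5: engineering days

    def __post_init__(self) -> None:
        # Reject scores outside the agreed 1 to 5 scale.
        for field_name in ("impact", "confidence", "effort"):
            value = getattr(self, field_name)
            if not 1 <= value <= 5:
                raise ValueError(f"{self.name}: {field_name}={value} is outside 1 to 5")

    @property
    def score(self) -> float:
        return self.impact * self.confidence / self.effort


def plan(candidates: list[Candidate], cap: int = 40) -> tuple[list[Candidate], list[Candidate]]:
    """Rank by score and split into (MVP backlog, later backlog) at the hard cap."""
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    return ranked[:cap], ranked[cap:]


if __name__ == "__main__":
    mvp, later = plan(
        [
            Candidate("onboarding checklist", impact=4, confidence=3, effort=2),  # 6.0
            Candidate("invite teammate", impact=3, confidence=4, effort=1),       # 12.0
            Candidate("custom dashboards", impact=2, confidence=2, effort=4),     # 1.0
        ],
        cap=2,
    )
    print("MVP:", [c.name for c in mvp])
    print("Later:", [c.name for c in later])
```

Putting the formula in code has one main benefit: the cut line becomes a single number you can show on the slide, instead of a debate.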

What to cut first

These features are common MVP traps:

- Multi-tenant admin panels before you have a second tenant

- Custom dashboards before you know which metrics matter

- Complex permissions before you have a second role

- Mobile apps when a responsive web app would do

- Integrations before you’ve proven the workflow manually

Mitigation: keep a “later” backlog, but tie it to a trigger.

Example triggers:

- Add role-based access control when you have 3+ distinct user roles in active use

- Add billing automation after the first 5 paying customers

- Add integration X after 10 weekly active users request it
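A trigger only works if someone checks it. One approach is to store each "later" item with the metric that unlocks it. You then evaluate the list against your weekly numbers. Here is a minimal sketch. The metric keys, such as `active_distinct_roles`, are hypothetical names you would map to your own analytics.

```python
from dataclasses import dataclass


@dataclass
class LaterItem:
    feature: str
    metric: str     # hypothetical metric key from your analytics
    threshold: int  # build the feature once the metric reaches this value


LATER_BACKLOG = [
    LaterItem("role-based access control", "active_distinct_roles", 3),
    LaterItem("billing automation", "paying_customers", 5),
    LaterItem("integration X", "weekly_active_users_requesting_x", 10),
]


def triggered(backlog: list[LaterItem], metrics: dict[str, int]) -> list[str]:
    """Return features whose trigger metric has reached its threshold."""
    return [item.feature for item in backlog if metrics.get(item.metric, 0) >= item.threshold]


if __name__ == "__main__":
    this_week = {"active_distinct_roles": 2, "paying_customers": 5}
    print(triggered(LATER_BACKLOG, this_week))  # ['billing automation']
```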

Feature triage for AI products

AI MVPs need extra discipline because “smart” features are easy to demo and hard to maintain.

Keep the MVP AI scope to:

- One narrow task

- One evaluation dataset

- One acceptance threshold

If you can’t test it, you can’t promise it.
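An acceptance threshold is a pass rate over the evaluation dataset, compared against a number you commit to in advance. Here is a minimal sketch. It assumes you can call your AI task as a function and judge each output with a correctness check. `run_task`, `is_correct` and the 0.9 default threshold are placeholders, not recommendations. Real checks are often fuzzier than exact match.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    expected: str


def pass_rate(
    cases: list[EvalCase],
    run_task: Callable[[str], str],
    is_correct: Callable[[str, str], bool],
) -> float:
    """Fraction of eval cases where the task output is judged correct."""
    if not cases:
        raise ValueError("evaluation dataset is empty")
    passed = sum(1 for c in cases if is_correct(run_task(c.prompt), c.expected))
    return passed / len(cases)


def meets_acceptance(cases, run_task, is_correct, threshold: float = 0.9) -> bool:
    """Gate: only promise the feature if the pass rate clears the agreed threshold."""
    return pass_rate(cases, run_task, is_correct) >= threshold


def exact_match(got: str, want: str) -> bool:
    """Simplest possible correctness check: case-insensitive exact match."""
    return got.strip().lower() == want.strip().lower()


if __name__ == "__main__":
    # Stub task standing in for a real model call.
    answers = {"2+2": "4", "3+3": "6", "capital of France": "Paris", "5-1": "3"}

    def stub_task(prompt: str) -> str:
        return answers[prompt]

    cases = [EvalCase("2+2", "4"), EvalCase("3+3", "6"),
             EvalCase("capital of France", "paris"), EvalCase("5-1", "4")]
    print(pass_rate(cases, stub_task, exact_match))         # 0.75
    print(meets_acceptance(cases, stub_task, exact_match))  # False
```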

In our work on AI systems, the failure mode is predictable: teams measure usage, not outcomes. Adoption spikes, then support tickets climb because answers are wrong or inconsistent.

A better MVP question is: did the AI help the user finish the job faster or more accurately?

Benefits of scoping this way

Faster fundraising conversations

You answer investor questions with numbers and screenshots, not opinions.

Less rework

You avoid building feature clusters that don’t connect to the core workflow.

Clearer hiring plan

Once the baseline exists, you can justify who to hire next based on bottlenecks.

More predictable costs

Ticket caps and demo checkpoints reduce late surprises and scope creep.

Instrumentation investors trust

If you want investors to fund the next round of build, you need an analytics baseline from week one.

Ruthless Feature Triage

One user, one workflow

Investor-safe scope = one primary user + one primary workflow + one measurable outcome. Everything else must justify itself as a dependency or a risk control. Use these triage checks in backlog grooming. If a feature fails two, cut it:

- Enables the primary workflow

- Reduces a known risk (security, compliance, payment failure)

- Moves a metric you can measure in the MVP window

- Ships in days, not weeks

Keep planning honest with a simple score: (Impact × Confidence) / Effort. Then cap scope: 20 to 40 tickets, each 1 to 3 days. Failure mode: “small” features pile up and you ship a demo, not proof. Mitigation: name the “later” milestone with a metric and date.

Not a perfect data warehouse. A baseline.

The MVP analytics baseline

Track four layers:

- Acquisition: where users came from

- Activation: the first success event

- Engagement: repeat usage of the core workflow

- Outcome: the business result the user cares about

Insight: Page views are not traction. Investors look for activation and repeatable outcomes.

Define one success event

Pick a single event that means “the product worked.” Examples:

- SaaS: created first project and invited a teammate

- Marketplace: posted first listing or completed first booking

- AI tool: completed a task with a cited answer that the user accepted

Then build your demo around that event.

Minimal event taxonomy

Keep it simple. You can always add later.

- signed_up

- onboarded

- created_core_object

- completed_core_action

- invited_user

- paid

Here’s a simple example you can implement in any stack.

```json
{
  "event": "completed_core_action",
  "user_id": "u_123",
  "account_id": "a_456",
  "properties": {
    "workflow": "demo_flow_v1",
    "time_to_complete_seconds": 142,
    "result": "success"
  },
  "context": {
    "app_version": "0.1.0",
    "source": "founder_outreach"
  },
  "timestamp": "2026-02-26T10:15:00Z"
}
```

Example: On LEDA, an AI-powered exploratory data analysis tool built in 10 weeks, correctness and reliability were core constraints. For AI products like this, instrumentation must include task success and quality signals, not only clicks.
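To keep event names from drifting, a small helper can refuse any name outside the minimal taxonomy. It emits the same payload shape as the JSON event example. This is a sketch built on assumptions. `build_event` is a hypothetical helper. Sending the payload is left to whatever client your analytics tool provides.

```python
import json
from datetime import datetime, timezone

# The minimal taxonomy from this guide. Anything else is rejected so names stay consistent.
EVENT_TAXONOMY = {
    "signed_up",
    "onboarded",
    "created_core_object",
    "completed_core_action",
    "invited_user",
    "paid",
}


def build_event(event, user_id, account_id, properties=None, context=None, timestamp=None):
    """Build an event payload in the shape of the JSON example (hypothetical helper)."""
    if event not in EVENT_TAXONOMY:
        raise ValueError(f"unknown event {event!r}: add it to the taxonomy on purpose first")
    # Expect a timezone-aware datetime; normalize to UTC so the trailing "Z" is true.
    ts = (timestamp or datetime.now(timezone.utc)).astimezone(timezone.utc)
    return {
        "event": event,
        "user_id": user_id,
        "account_id": account_id,
        "properties": properties or {},
        "context": context or {},
        "timestamp": ts.strftime("%Y-%m-%dT%H:%M:%SZ"),
    }


if __name__ == "__main__":
    payload = build_event(
        "completed_core_action",
        user_id="u_123",
        account_id="a_456",
        properties={"workflow": "demo_flow_v1", "time_to_complete_seconds": 142, "result": "success"},
        context={"app_version": "0.1.0", "source": "founder_outreach"},
        timestamp=datetime(2026, 2, 26, 10, 15, tzinfo=timezone.utc),
    )
    print(json.dumps(payload, indent=2))
```

The design choice is deliberate friction. A new event name has to be added to the taxonomy explicitly, so a typo cannot create a new event.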

What investors will ask

Be ready to answer these with screenshots or a simple dashboard:

- How many users reached activation last week?

- Where do they drop off?

- What’s the median time to first value?

- What do retained users do differently?

If you can’t answer yet, say so. Then show the plan and the timeline to get the baseline.
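You can answer the first three questions from the raw event log before you have any dashboard tool. Here is a minimal sketch. It assumes events shaped like the JSON example, with UTC timestamps ending in `Z`; only `event`, `user_id` and `timestamp` are used. It treats "last week" as the 7 days before a `now` you pass in. The funnel steps reuse the minimal taxonomy, and the function names are illustrative.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import Optional

# Funnel steps reuse the minimal taxonomy; activation = completed_core_action.
FUNNEL = ["signed_up", "onboarded", "created_core_object", "completed_core_action"]


def parse_ts(value: str) -> datetime:
    """Parse a UTC timestamp like 2026-02-26T10:15:00Z into a naive UTC datetime."""
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ")


def funnel_counts(events: list[dict]) -> list[tuple[str, int]]:
    """Distinct users at each step who also reached every earlier step."""
    users_by_step = {step: set() for step in FUNNEL}
    for e in events:
        if e["event"] in users_by_step:
            users_by_step[e["event"]].add(e["user_id"])
    counts, reached = [], None
    for step in FUNNEL:
        reached = users_by_step[step] if reached is None else reached & users_by_step[step]
        counts.append((step, len(reached)))
    return counts


def activated_in_last_7_days(events: list[dict], now: datetime) -> int:
    """Distinct users with an activation event in the 7 days before `now`."""
    start = now - timedelta(days=7)
    return len({
        e["user_id"]
        for e in events
        if e["event"] == "completed_core_action" and start <= parse_ts(e["timestamp"]) < now
    })


def median_time_to_first_value(events: list[dict]) -> Optional[timedelta]:
    """Median of (first activation - signup) across users who did both."""
    signed_up: dict[str, datetime] = {}
    first_value: dict[str, datetime] = {}
    for e in events:
        ts, uid = parse_ts(e["timestamp"]), e["user_id"]
        if e["event"] == "signed_up":
            signed_up[uid] = min(ts, signed_up.get(uid, ts))
        elif e["event"] == "completed_core_action":
            first_value[uid] = min(ts, first_value.get(uid, ts))
    deltas = [first_value[u] - signed_up[u] for u in first_value if u in signed_up]
    return median(deltas) if deltas else None
```

The funnel list gives drop-off directly: compare adjacent counts to see where users stop.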

AI-specific quality metrics

If your MVP includes AI, add two more layers:

- Quality: task success rate, citation rate, human acceptance rate

- Risk and cost: latency, token spend, fallback rate

Start with a small evaluation set. Even 50 to 200 examples is enough to see regressions.

From our QA work on AI systems, the practical checks that matter early are:

- Hallucination rate on known questions

- Retrieval mismatch rate in RAG flows

- Instruction conflict cases

You don’t need perfect coverage. You need a baseline you can improve.
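To turn the quality and risk/cost layers into a baseline, aggregate per-run logs into a few rates. Then compare each new eval run against the previous one. Here is a minimal sketch. The `Run` fields, the report keys and the 0.05 tolerance are assumptions. "Task succeeded" and "has citation" would come from your own eval grader.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Run:
    """One logged AI task run; field names are illustrative."""
    task_succeeded: bool
    has_citation: bool
    human_accepted: Optional[bool]  # None when the user gave no feedback
    latency_ms: float
    tokens: int
    used_fallback: bool


def quality_report(runs: list[Run]) -> dict[str, Optional[float]]:
    """Aggregate quality and risk/cost signals for one eval or production window."""
    if not runs:
        raise ValueError("no runs to report on")
    n = len(runs)
    rated = [r.human_accepted for r in runs if r.human_accepted is not None]
    return {
        # Quality
        "task_success_rate": sum(r.task_succeeded for r in runs) / n,
        "citation_rate": sum(r.has_citation for r in runs) / n,
        "human_acceptance_rate": sum(rated) / len(rated) if rated else None,
        # Risk and cost
        "median_latency_ms": median(r.latency_ms for r in runs),
        "tokens_per_run": sum(r.tokens for r in runs) / n,
        "fallback_rate": sum(r.used_fallback for r in runs) / n,
    }


QUALITY_KEYS = ("task_success_rate", "citation_rate", "human_acceptance_rate")


def regressions(current: dict, baseline: dict, tolerance: float = 0.05) -> list[str]:
    """Quality rates that dropped more than `tolerance` below the previous baseline."""
    flagged = []
    for key in QUALITY_KEYS:
        now, before = current.get(key), baseline.get(key)
        if now is not None and before is not None and now < before - tolerance:
            flagged.append(key)
    return flagged
```

With only 50 to 200 examples, small swings are noise. A tolerance band keeps the check from crying wolf on every run.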

Investor demo checklist

What to have ready before the meeting

- A 5-minute script with timestamps

- A live demo path with no dead ends

- A fallback video recording

- A single slide with baseline metrics

- A single slide with next milestone and budget

- A list of top 2 risks and how you’re testing them

If you only do one thing: show the activation funnel and the success event.

Demo-first roadmap planning

A demo-first roadmap is a reverse plan. You start with what the investor will see in 5 minutes, then work backward to the smallest build that makes it real.

MVP vs Prototype vs PoC

Stop scope drift early

Use the right artifact for the right risk.

- PoC: proves a technical claim. Common failure: it works in isolation, but says nothing about users.

- Prototype: proves UX and workflow comprehension. Common failure: looks done, breaks with real data.

- MVP: proves value with real users and measurable outcomes. It needs a narrow production slice: basic auth, real data flow, audit trail for key actions, and an analytics baseline.

Rule of thumb: if you can’t put it in front of a user without apologizing (fake data, missing flows, “ignore this bug”), it’s a prototype. Mitigation: keep the surface area small, but make the core path production safe.

Build the demo spine

Write the demo as a sequence of user actions. Then turn each action into tickets.

A solid investor demo usually has:

- The problem in one sentence

- The user persona and context

- The core workflow end to end

- A measurable outcome

- The next milestone and what it unlocks

Insight: Investors fund momentum. Your roadmap should show what you can ship next in 2 to 4 week increments.

Demo-first roadmap rules

Keep these constraints:

- No parallel feature tracks in MVP phase

- Every sprint ends with something demoable

- Every sprint has one metric target

Investor demo script outline

Use this outline. Don’t improvise.

- Hook: “We help [ICP] do [job] without [pain].”

- Setup: show the old way in 20 seconds

- Workflow: 3 to 5 steps, no detours

- Proof: show baseline metrics, even if small

- Risks: name the top 2 risks and how you’re testing them

- Ask: what you need to reach the next milestone (time, hires, budget)
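Timing the script is simple arithmetic. Writing it down still catches a common failure: a workflow section that quietly eats the whole meeting. Below is a sketch with placeholder durations. Only the 20-second setup and the 5-minute budget come from this guide.

```python
# Placeholder durations in seconds; only the 20-second setup and the
# 5-minute budget come from the guide. Replace them with your rehearsal times.
SCRIPT = [
    ("Hook", 15),
    ("Setup: the old way", 20),
    ("Workflow: 3 to 5 steps", 150),
    ("Proof: baseline metrics", 45),
    ("Risks: top 2 and how we test them", 30),
    ("Ask: next milestone", 30),
]


def with_timestamps(script: list[tuple[str, int]], budget_seconds: int = 300) -> list[str]:
    """Return 'm:ss segment' lines, or raise if the script overruns the budget."""
    total = sum(seconds for _, seconds in script)
    if total > budget_seconds:
        raise ValueError(f"script runs {total}s, over the {budget_seconds}s budget")
    lines, elapsed = [], 0
    for name, seconds in script:
        lines.append(f"{elapsed // 60}:{elapsed % 60:02d} {name}")
        elapsed += seconds
    return lines


if __name__ == "__main__":
    print("\n".join(with_timestamps(SCRIPT)))
```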

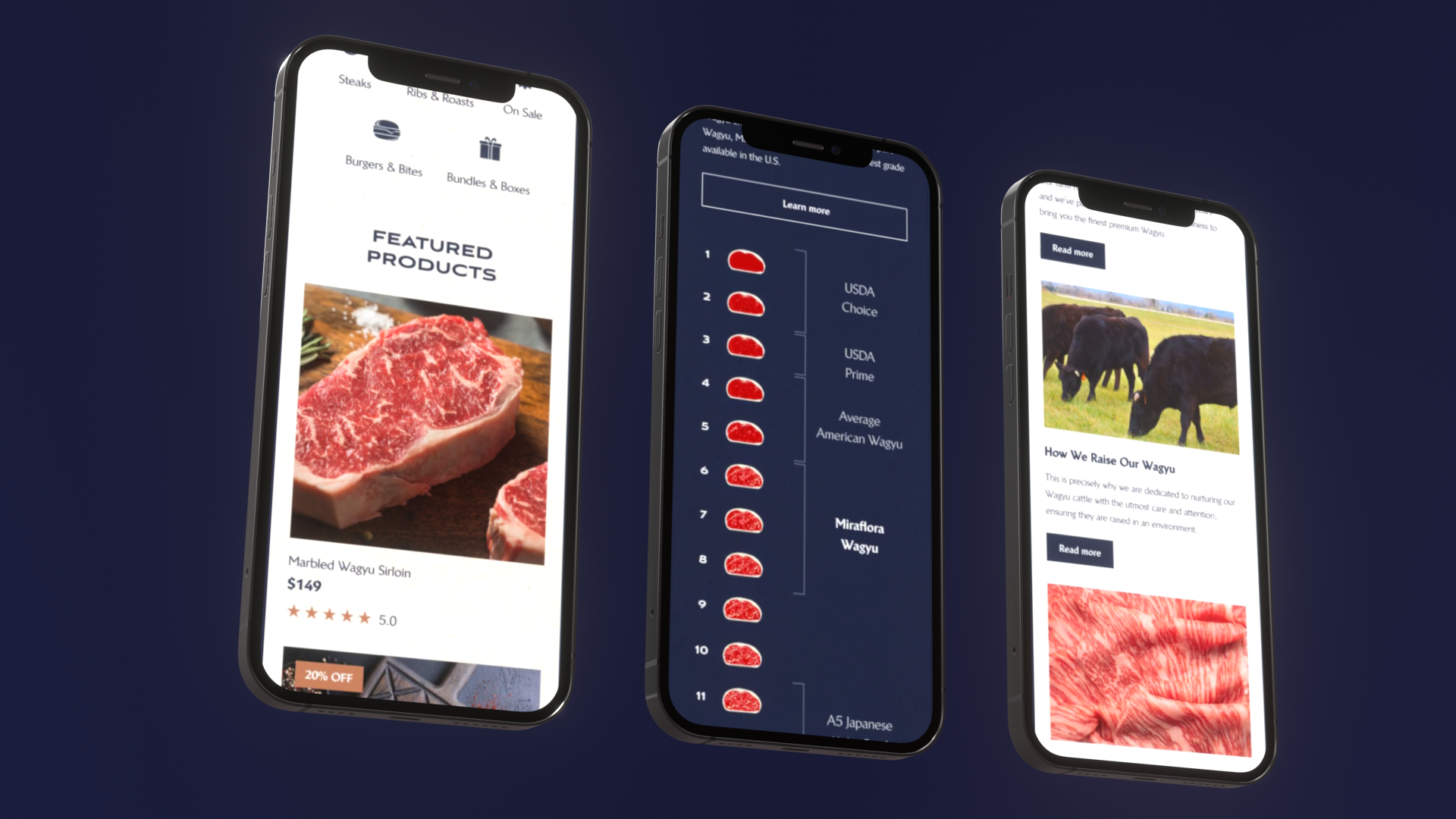

Example: On Miraflora Wagyu, a luxury Shopify build delivered in 4 weeks, the constraint was time zones and async feedback. The way to keep momentum was tight demo checkpoints and clear acceptance criteria. Different product, same lesson: demo cadence beats long spec docs.

The MVP-in-weeks scope template

Use this as your MVP scope template. Keep it to one page.

1) ICP and job to be done

- ICP: [who]

- Job: [what they are trying to accomplish]

- Current workaround: [tool, spreadsheet, agency]

2) Primary workflow

- Start state: [user has X]

- Success state: [user achieves Y]

- Success event: [named event]

3) MVP features (must ship)

- Feature 1: [why it enables workflow]

- Feature 2

- Feature 3

4) Non-goals (explicitly out)

- Not in MVP: [billing automation, multi-region, etc.]

5) Instrumentation (must ship)

- Events: [list]

- Dashboard: [what it shows]

6) Demo plan

- Demo v1 date: [date]

- Demo script: [link]

7) Risks and tests

- Risk 1: [test]

- Risk 2: [test]

8) Budget guardrails

- Cap: [number]

- Tradeoffs you accept: [list]

If this template feels too strict, that’s a sign you’re still exploring. That’s normal. Just don’t call it an investor-ready MVP yet.

Backlog examples by product type

These are starter backlogs that tend to fit a 4 to 12 week MVP window. Adjust based on your domain and risk.

SaaS MVP backlog (example)

- Auth, org creation

- Create core object

- Core workflow action

- Invite teammate

- Basic roles (admin, member)

- Activity log for core actions

- Stripe checkout or manual invoicing placeholder

- Analytics events and a simple dashboard

Marketplace MVP backlog (example)

- Supply-side onboarding (create listing)

- Demand-side search and listing view

- Booking request and confirmation

- Messaging or structured questions

- Trust basics: identity check or phone verification

- Dispute and cancellation policy text

- Analytics: listing created, booking requested, booking completed

AI MVP backlog (example)

- One task flow (ask, retrieve, answer)

- RAG sources and citations

- Human feedback capture (thumbs up, thumbs down, reason)

- Eval dataset v1

- Quality dashboard (task success, citation rate)

- Guardrails: refusal patterns, PII redaction if needed

- Cost controls: caching, rate limits

Insight: For AI, “works in the demo” is table stakes. What matters is whether it stays correct and safe after 100 varied inputs.

Conclusion

A fundable MVP is a narrow production slice with a demo that maps to a real workflow and a baseline you can measure.

If you want to scope an MVP that investors will fund, keep it simple and defensible:

- Define whether you need a PoC, prototype, or MVP and don’t blur them

- Pick one user, one workflow, one outcome

- Cut features until the MVP fits a weeks-long window

- Instrument activation and outcomes from day one

- Plan the roadmap demo first, then build backward

Final check: If you can’t explain your MVP scope in 60 seconds, you will not control the investor conversation. The scope will control you.

Next steps you can do this week:

- Fill the MVP scope template in one page.

- Write the investor demo script outline and time it.

- Create the first analytics dashboard, even if it’s sparse.

- Cut your backlog to 20 to 40 tickets and assign owners.

The goal is not perfection. The goal is momentum you can prove.

Cost drivers and tradeoffs

MVP cost is not a single number. It’s a function of risk, scope, and speed.

Main cost range drivers:

- Team shape: senior-only teams cost more per week, but usually ship with fewer rewrites

- Domain constraints: regulated industries add compliance-by-design work

- Data complexity: migrations, messy inputs, and integrations add uncertainty

- AI quality requirements: evals, guardrails, and monitoring are real work

- Polish level: custom UI and animation can double design time

Common tradeoffs that keep budgets sane:

- Start with responsive web, not native apps

- Manual ops behind the scenes instead of full automation

- One integration max in MVP

- One persona and minimal roles

If you need a number for planning, treat it as a hypothesis and validate it by breaking work into tickets and estimating in days. That estimate is what you defend to investors.
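Here is a minimal sketch of turning ticket estimates into a range you can defend. It assumes each ticket has a low and a high estimate in days and that a week has five working days. It also assumes work splits evenly across engineers, which is optimistic. `engineers` and `day_rate` are inputs you supply; nothing here is a benchmark.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    ticket: str
    low_days: float
    high_days: float


def budget_range(estimates: list[Estimate], engineers: int, day_rate: float) -> dict[str, tuple[float, float]]:
    """Turn per-ticket day estimates into (low, high) ranges for days, weeks, and cost.

    Assumes 5 working days per week and perfectly parallel work across engineers,
    which is optimistic; treat the result as a hypothesis to defend, not a quote.
    """
    low = sum(e.low_days for e in estimates)
    high = sum(e.high_days for e in estimates)
    return {
        "engineering_days": (low, high),
        "calendar_weeks": (low / engineers / 5, high / engineers / 5),
        "cost": (low * day_rate, high * day_rate),
    }


if __name__ == "__main__":
    tickets = [
        Estimate("auth and org creation", 1, 2),
        Estimate("create core object", 2, 3),
        Estimate("core workflow action", 2, 3),
        Estimate("analytics events and dashboard", 1, 2),
    ]
    print(budget_range(tickets, engineers=2, day_rate=800))  # day_rate is a placeholder
```

Showing a low-to-high range, with the assumptions stated, is easier to defend than a single number. It also makes visible which tickets carry the uncertainty.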