Introduction

Procurement wants speed. Security wants certainty. Legal wants clean terms. Engineering wants to ship.

Vendor due diligence is where those goals collide. Especially with software and AI vendors, where the biggest risks are rarely the ones in the sales deck. They live in identity flows, audit gaps, subcontractors, and what happens at 2 a.m. when something breaks.

This guide is a practical, enterprise-grade vendor due diligence checklist you can run in regulated environments like finance, healthcare, and defense. It is written for the consulting engineering leader who needs to align stakeholders, move fast, and still be able to defend the decision in an audit.

Insight: The goal is not to eliminate risk. The goal is to make risk explicit, assign owners, and price it into the relationship.

You will get:

- A software vendor due diligence checklist you can reuse

- An AI vendor security questionnaire mapping approach that keeps answers comparable

- ISO and SOC 2 reality checks with specific evidence requests

- GDPR vendor assessment essentials, including DPA terms that matter

- Incident response expectations and SLA language that does not fall apart under pressure

When this checklist matters most

Use this when any of the following are true:

- The vendor will touch regulated data (PII, PHI, card data)

- The vendor will be embedded in production workflows (auth, payments, clinical operations)

- The vendor is an AI provider or uses subprocessors for model hosting

- You need procurement, security, and legal to sign off within weeks, not quarters

In our delivery work, the fastest programs are the ones where due diligence runs like engineering. Clear scope. Evidence over statements. Short feedback loops. When we built a virtual event platform at ExpoDubai scale, the technical work was only half the risk story. The other half was operational readiness: access, logs, incident playbooks, and who answers when something goes wrong.


Scope the assessment fast

Most vendor reviews fail before the first questionnaire. The scope is fuzzy, so every stakeholder assumes worst case.

Start with a one page intake. Then pick the depth of review based on data and blast radius.

Common failure modes

- You assess the vendor, but not their subprocessors

- You ask 300 questions, then never validate the answers

- You treat ISO or SOC 2 as a pass, not a starting point

- You skip operational checks like backups and restore tests

Key Stat: A large share of enterprise security findings are operational, not cryptographic. If you do not ask about logging, access reviews, and incident drills, you will miss the issues that actually hurt.

Triage framework

Use a simple risk tiering model. It keeps procurement moving and makes security reviews defendable.

| Tier | Data exposure | System criticality | Typical review depth | Decision owner |
|---|---|---|---|---|
| 1 | Public or synthetic | Non critical | Lightweight questionnaire | Procurement |
| 2 | Internal business data | Important but not core | Full questionnaire plus evidence sampling | Security and Engineering |
| 3 | PII, PHI, PCI scope, secrets | Mission critical | Full questionnaire, evidence validation, contract hardening, ongoing monitoring | Security, Legal, Risk |
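The tiering table can be encoded so the tier is assigned the same way on every intake. This is a minimal sketch, not a definitive model: the enum names are hypothetical, and the rule that the riskier of the two dimensions decides the tier is an assumption, since the table does not say how to resolve mixed cases.

```python
from enum import IntEnum


class DataExposure(IntEnum):
    PUBLIC_OR_SYNTHETIC = 1
    INTERNAL_BUSINESS = 2
    REGULATED_OR_SECRETS = 3  # PII, PHI, PCI scope, secrets


class Criticality(IntEnum):
    NON_CRITICAL = 1
    IMPORTANT = 2
    MISSION_CRITICAL = 3


# Review depth and decision owner per tier, copied from the table above.
TIERS = {
    1: ("Lightweight questionnaire", "Procurement"),
    2: ("Full questionnaire plus evidence sampling", "Security and Engineering"),
    3: ("Full questionnaire, evidence validation, contract hardening, "
        "ongoing monitoring", "Security, Legal, Risk"),
}


def assign_tier(data: DataExposure, criticality: Criticality) -> int:
    """Assumption: the riskier of the two dimensions decides the tier."""
    return max(int(data), int(criticality))


# Internal data on a mission critical system lands in Tier 3 under this rule.
tier = assign_tier(DataExposure.INTERNAL_BUSINESS, Criticality.MISSION_CRITICAL)
depth, owner = TIERS[tier]
print(tier, owner)
```

Taking the maximum is the conservative choice. If your risk team prefers a weighted model, only `assign_tier` needs to change.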

What to capture in intake

- Data types: PII, PHI, payment, credentials, model prompts

- Data flow: where data enters, where it is stored, where it leaves

- Hosting: cloud region, single tenant vs multi tenant

- Integration: SSO, SCIM, API keys, network paths

- Subprocessors: list, purpose, and data touched

- Go live timeline and rollback plan

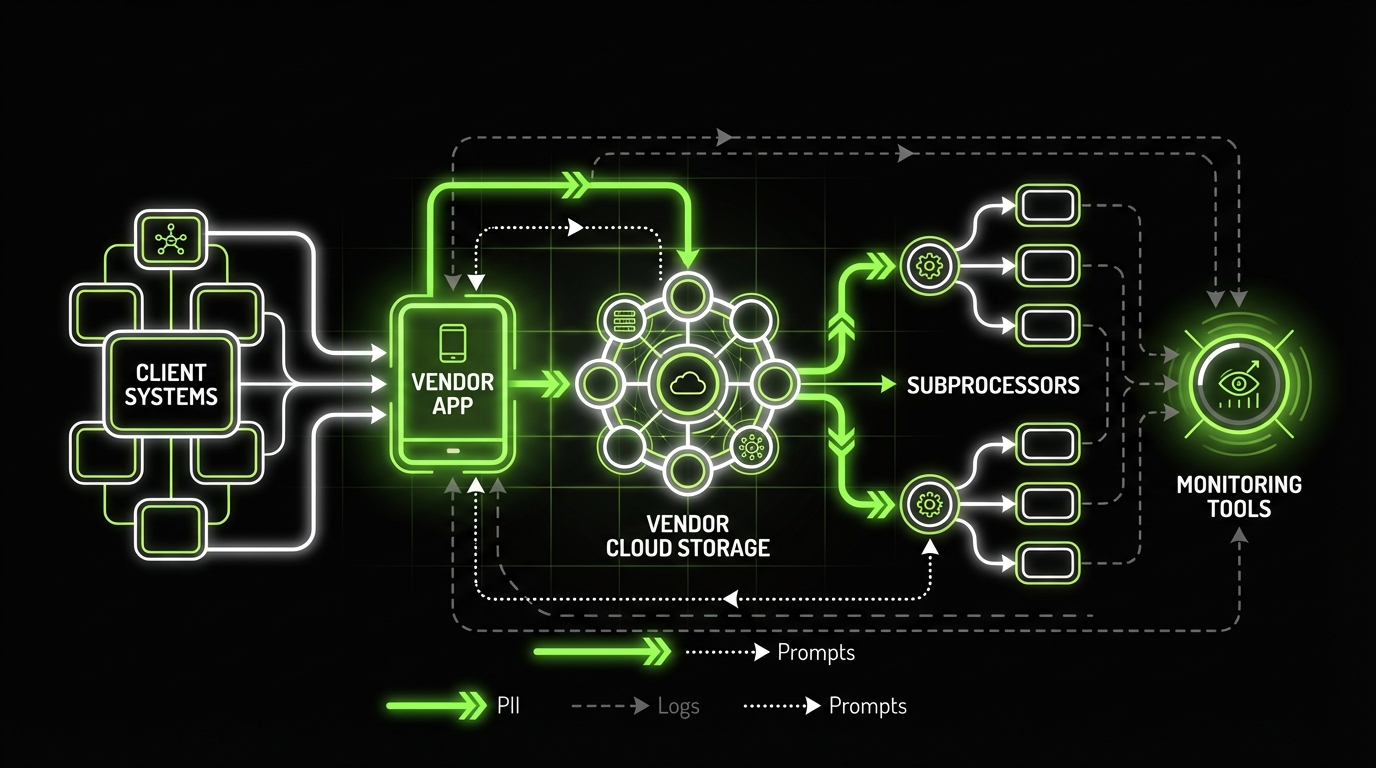

AI specific scoping questions

AI changes the scope in subtle ways. Ask these early so you do not redo the review later.

- Are prompts and outputs stored? For how long?

- Is customer data used for training or fine tuning?

- Which model providers are involved (first party vs third party APIs)?

- What is the retrieval layer (vector database), and what is indexed?

- Can you enforce tenant isolation for embeddings and prompt logs?

If the vendor cannot answer these clearly, treat them as Tier 3 until proven otherwise.

One page intake template

Copy into your procurement ticket

Use this as the first gate before any questionnaire.

- Vendor name, product, and business owner

- Tier (1 to 3) with justification

- Data types processed (PII, PHI, PCI, credentials, prompts)

- Data regions and hosting model

- Integrations (SSO, SCIM, APIs, webhooks)

- Subprocessors list link

- Required go live date and rollback plan

- Contract artifacts needed (MSA, DPA, SOC 2, pen test)

Definition of done:

- Tier assigned

- Data flow documented

- Evidence requests sent

- Legal clause deltas agreed
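The definition of done above is easy to check mechanically if the intake lives in a ticket or a spreadsheet export. The sketch below assumes a hypothetical `Intake` record with one field per done criterion; the field names, vendor name, and URL are illustrative only.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Intake:
    vendor: str
    tier: Optional[int] = None          # 1 to 3, justification kept in the ticket
    data_flow_doc: str = ""             # link to the documented data flow
    evidence_requests_sent: bool = False
    legal_clause_deltas_agreed: bool = False

    def open_items(self) -> List[str]:
        """Return the definition-of-done items that are still open."""
        missing = []
        if self.tier not in (1, 2, 3):
            missing.append("Tier assigned")
        if not self.data_flow_doc:
            missing.append("Data flow documented")
        if not self.evidence_requests_sent:
            missing.append("Evidence requests sent")
        if not self.legal_clause_deltas_agreed:
            missing.append("Legal clause deltas agreed")
        return missing


# Hypothetical intake that is scoped but not yet through evidence and legal.
intake = Intake(vendor="ExampleVendor", tier=3,
                data_flow_doc="https://example.com/data-flow")
print(intake.open_items())
```

An empty list means the intake gate is passed and the questionnaire can start.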

Security questionnaire mapping

Questionnaires are necessary. They are also noisy. The trick is mapping.

Instead of accepting a vendor specific PDF, map their answers to your control framework so you can compare vendors and track gaps over time.

Map to controls, not questions

Pick a control baseline that matches your org. Common options:

- ISO 27001 Annex A

- SOC 2 Trust Services Criteria

- NIST SP 800-53 or NIST CSF

- CIS Controls

Then build a crosswalk so each vendor answer lands in a control bucket.

Insight: A good mapping turns a 200 question document into a 20 control conversation with clear owners.
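A crosswalk can be as simple as a lookup from vendor question IDs to your control buckets. The sketch below is one way to do it in Python. The question IDs, answers, and bucket names are invented for illustration and are not taken from any real questionnaire.

```python
from collections import defaultdict

# Hypothetical crosswalk: vendor question ID -> control bucket.
CROSSWALK = {
    "Q12": "Identity and access",
    "Q13": "Identity and access",
    "Q27": "Encryption and key management",
    "Q41": "Logging and monitoring",
}

# Hypothetical vendor answers keyed by question ID.
answers = {
    "Q12": "SSO via SAML and OIDC",
    "Q13": "MFA enforced for all accounts",
    "Q27": "TLS 1.2+ in transit, keys held in vendor KMS",
    "Q99": "We take security seriously",
}


def group_by_control(answers, crosswalk):
    """Group answers by control bucket and collect unmapped question IDs."""
    by_control = defaultdict(list)
    unmapped = []
    for question_id, text in answers.items():
        control = crosswalk.get(question_id)
        if control is None:
            unmapped.append(question_id)
        else:
            by_control[control].append(text)
    return dict(by_control), unmapped


by_control, unmapped = group_by_control(answers, CROSSWALK)
print(sorted(by_control))  # control buckets that received at least one answer
print(unmapped)            # answers that need a mapping decision or can be dropped
```

Buckets in the crosswalk that receive no answers (here, logging) are as informative as the answers themselves: they show where the vendor's document is silent.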

What to ask and what good answers look like

Below is a practical set of prompts you can reuse for a software vendor due diligence checklist and an AI vendor security questionnaire.

Identity and access

- Ask: Do you support SSO (SAML or OIDC)? Do you support SCIM? Can we enforce MFA for all accounts?

- Good answer: SSO supported, SCIM supported, MFA enforced, break glass accounts controlled, admin actions logged.

Encryption and key management

- Ask: Encryption at rest and in transit? Who manages keys? Any customer managed keys?

- Good answer: TLS 1.2+ in transit, a named at rest cipher (for example, AES-256), clear KMS ownership, documented rotation.

Logging and monitoring

- Ask: What security logs exist? Can customers export them? Log retention?

- Good answer: Admin and auth logs available, export via API, retention configurable, tamper resistance.

Vulnerability management

- Ask: Patch SLAs? Dependency scanning? How do you handle critical CVEs?

- Good answer: Defined SLAs by severity, scanning in CI, emergency patch process, evidence of recent fixes.

AI data handling

- Ask: Are prompts, outputs, and embeddings treated as customer data? Is training opt in?

- Good answer: Training is opt in, retention is limited, deletion supported, clear subprocessors.

Red flags you can act on

- “We are working on SOC 2” with no timeline or scope

- “We encrypt everything” with no cipher details and no clarity on who owns the keys

- No incident response runbooks, only a generic policy

- No way to export logs, or logs are “available upon request”

- Subprocessors list is incomplete or kept private

Practical scoring tip: Score each control 0 to 2.

- 0 = missing or unclear

- 1 = present but weak evidence

- 2 = strong evidence plus operational maturity

Total score is less important than the Tier 3 gaps.

How we keep reviews comparable

In delivery work, we often see teams collect questionnaires that nobody can compare. A simple normalization helps:

- Store answers in a structured format (spreadsheet or ticketed controls)

- Require every answer to include an artifact link

- Track gaps as backlog items with owners and due dates

If you do only one thing, do this: map every answer to a control, and require evidence for every Tier 3 control.
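The 0 to 2 scoring and the evidence rule fit in a few lines once answers are stored in a structured format. This is a sketch under assumptions: the `ControlResult` fields are hypothetical, the links are placeholders, and treating any Tier 3 control that scores below 2 or lacks an artifact link as a gap is one possible reading of the rule.

```python
from dataclasses import dataclass


@dataclass
class ControlResult:
    control: str
    score: int                # 0 = missing or unclear, 1 = weak evidence, 2 = strong evidence
    artifact_link: str = ""   # link to the evidence behind the answer
    tier3: bool = False       # hypothetical flag: control is in scope for a Tier 3 review


def tier3_gaps(results):
    """Tier 3 controls that score below 2 or have no artifact link (assumed gap rule)."""
    return [
        r.control
        for r in results
        if r.tier3 and (r.score < 2 or not r.artifact_link)
    ]


results = [
    ControlResult("Identity and access", 2, "https://example.com/sso-evidence", tier3=True),
    ControlResult("Logging and monitoring", 1, "", tier3=True),
    ControlResult("Vulnerability management", 1, "https://example.com/scan-summary"),
]

total = sum(r.score for r in results)
print(f"total={total} of {2 * len(results)}")
print("Tier 3 gaps:", tier3_gaps(results))
```

The total gives a rough comparison across vendors. The gap list is what goes into the backlog with owners and due dates.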

Evidence artifacts checklist

What to request and why it matters

SOC 2 scope and period

Confirms what was audited and when. Prevents false confidence from out of scope systems.

Access review records

Shows access is managed in practice, not just in policy. Look for dates, approvers, and exceptions.

Log export sample

Validates you can investigate incidents. Ask for a redacted example and retention settings.

Pen test remediation proof

A report without fixes is noise. Ask for retest results or ticket evidence.

Backup restore test log

Backups are not recovery. Restore tests show RTO and RPO are plausible.

Subprocessor list and regions

Required for GDPR vendor assessment and transfer risk. Must be current and specific.

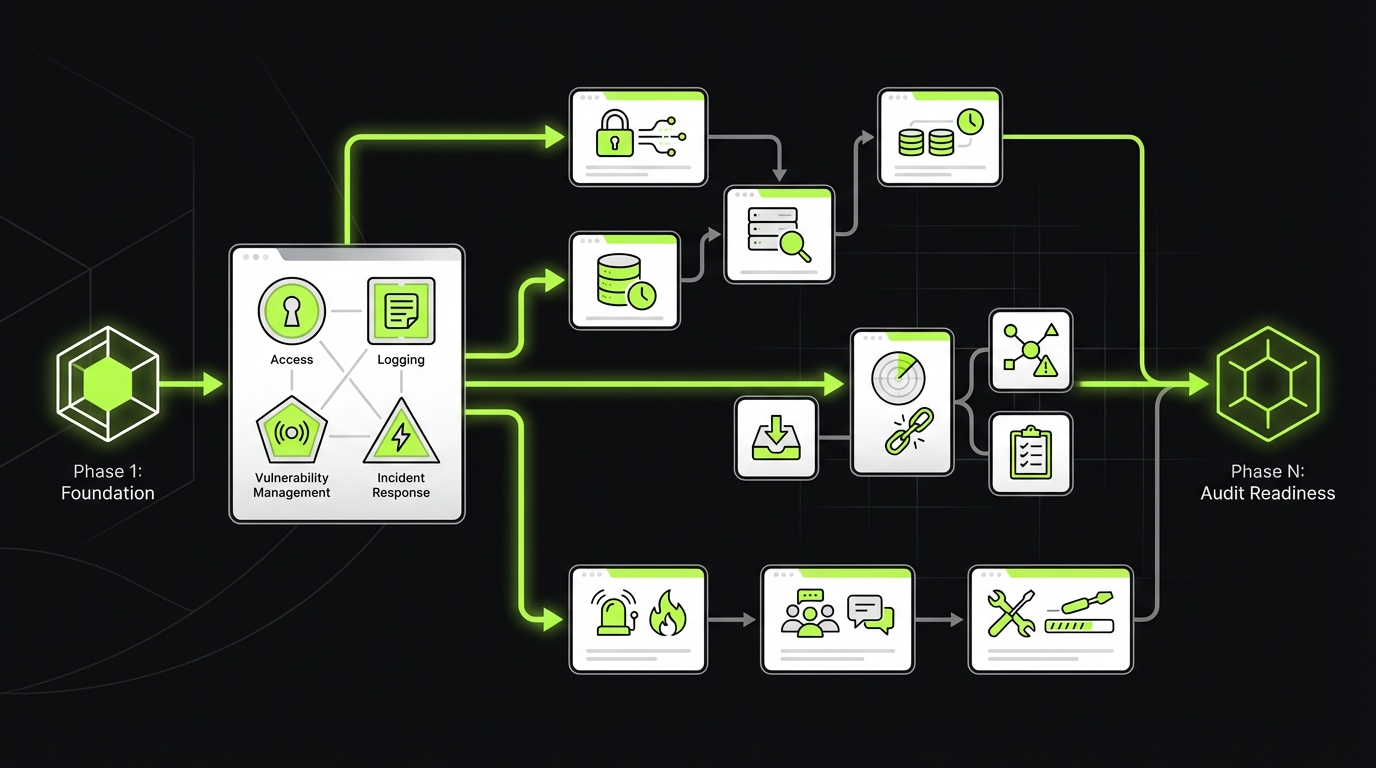

ISO and SOC 2 reality checks

ISO certificates and SOC 2 reports are useful. They are not proof that your specific risks are covered.

ISO and SOC 2 Reality

Evidence, not badges

What works: ISO certificates and SOC 2 reports are useful as an entry point. They can speed up Tier 1 and some Tier 2 decisions.

What fails: Treating them as proof that your risks are covered. A clean report can still miss your data flow, your regions, your integration model, or your subprocessor chain.

Mitigation: Request evidence that ties to your scope. Examples:

- Which product and environment the report covers (not “the company”)

- Exceptions and management responses (what was not fixed)

- Control evidence for your high risk areas (SSO enforcement, log export, restore tests, incident runbooks)

What to measure (hypothesis): number of Tier 3 gaps discovered after “SOC 2 provided”, and time to close them with contract clauses or technical changes.

Treat them as entry points. Then request targeted evidence.

SOC 2: what to request

- SOC 2 Type II report, not Type I

- Report period that includes the last 6 to 12 months

- System description and boundaries (what is in scope)

- Complementary user entity controls (what you must do)

- Bridge letter covering the gap if the report period ended several months ago

Good sign: the vendor can explain scope limitations without getting defensive.

Bad sign: they refuse to share anything beyond a marketing one pager.

Insight: The fastest way to de risk a SOC 2 review is to read the scope first. Many teams skip it and argue over controls that were never audited.
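Checking report age is a quick date calculation you can run before reading anything else. In the sketch below, both thresholds (90 days before asking for a bridge letter, 365 days before asking for a newer report) are assumptions to tune with your auditors, and the function name is hypothetical.

```python
from datetime import date


def soc2_freshness(period_end: date, today: date,
                   bridge_after_days: int = 90,
                   max_age_days: int = 365) -> str:
    """Classify a SOC 2 report by how long ago its audit period ended.

    Both thresholds are assumptions, not values from any standard.
    """
    age_days = (today - period_end).days
    if age_days < 0:
        raise ValueError("period_end is in the future")
    if age_days > max_age_days:
        return "stale: request a newer report"
    if age_days > bridge_after_days:
        return "request bridge letter"
    return "current"


# A period that ended about five months before the review date.
print(soc2_freshness(date(2024, 9, 30), today=date(2025, 3, 1)))
```

Passing `today` explicitly keeps the check reproducible, which matters if you later need to show when the decision was made.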

ISO 27001: what to validate

- Certificate issuer and validity dates

- Statement of Applicability (SoA)

- Which sites and systems are covered

- How they handle exceptions and risk acceptance

Evidence artifacts list

Ask for artifacts that match the controls you care about. Sample list:

- Security policies: access control, encryption, secure SDLC, vendor management

- Operational evidence: access review records, change management tickets, backup and restore test logs

- Technical evidence: vulnerability scans, pen test report and remediation proof, WAF or firewall configs

- Audit evidence: SOC 2 report, ISO certificate, internal audit schedule

- AI evidence: retention settings for prompts, training opt in docs, model and subprocessor list

Pen tests: what counts as useful

A pen test PDF is not automatically evidence.

Ask:

- Was it done by an independent firm?

- What was in scope (API, mobile, infra, social engineering)?

- Were findings fixed, and can they show retest results?

- Do they run ongoing scanning between annual tests?

Good answer: they provide an executive summary plus remediation tracking.

Weak answer: they provide a generic letter with no scope or findings.

Evidence request email

Short, specific, hard to dodge

Subject: Security and privacy evidence request

Please share links or attachments for the following:

- SOC 2 Type II report (last 12 months) and bridge letter if applicable

- ISO 27001 certificate and Statement of Applicability

- Most recent independent pen test summary plus remediation status

- Subprocessor list and data regions

- Incident response plan and support severity matrix

- Log export options and retention defaults

- Data retention and deletion procedure for customer data

If any item is not available, please state why and propose an alternative artifact.

GDPR and DPA essentials

A GDPR vendor assessment is not about memorizing articles. It is about ensuring the vendor can operate as a processor without creating legal and operational chaos.

Map Answers to Controls

Comparable, repeatable reviews

Problem: Vendor PDFs are noisy. You cannot compare Vendor A to Vendor B, and you cannot track gaps over time.

Fix: Map answers into your control baseline (ISO 27001, SOC 2 TSC, NIST, CIS). Turn 200 questions into a smaller set of control buckets with owners.

Use a simple scoring model:

- 0 = missing or unclear

- 1 = present, weak evidence

- 2 = strong evidence plus operational maturity

Focus areas that actually fail in practice:

- Identity: SSO (SAML or OIDC), SCIM, MFA enforcement, admin logs

- Logging: export via API, retention, tamper resistance

- AI data handling: prompts and embeddings treated as customer data, training opt in, deletion supported

Red flags you can act on: “We encrypt everything” (no key ownership), logs “upon request”, SOC 2 “in progress” with no scope or date.

DPA terms that matter

Focus on terms that change your risk profile:

- Roles and instructions: processor acts only on documented instructions

- Subprocessors: list, notice period, and objection rights

- International transfers: Standard Contractual Clauses (SCCs) and the vendor's approach to transfer impact assessments

- Security measures: concrete measures, not “industry standard”

- Deletion and return: timelines, formats, and verification

- Audit rights: practical audit mechanism, not unlimited onsite access

Key Stat: If you cannot name the subprocessors and data regions, you cannot credibly answer “where does our data go?” in an audit.

Sample MSA and DPA clauses to request

These are examples to discuss with legal. They are written to be specific and testable.

Subprocessor notice: Vendor will provide at least 30 days prior notice before adding or replacing subprocessors that process Customer Data. Customer may object on reasonable data protection grounds. If unresolved, Customer may terminate the affected services without penalty.

Security incident notification: Vendor will notify Customer without undue delay and in any event within 24 hours after confirming a Security Incident affecting Customer Data. Notification will include known scope, impacted systems, containment actions, and next update time.

Deletion: Upon termination, Vendor will delete Customer Data within 30 days unless retention is required by law. Vendor will provide written confirmation of deletion upon request.

Audit mechanism: Once per year, Customer may request a security and privacy evidence package that includes SOC 2 report, pen test summary, and last access review evidence. If material gaps are identified, Customer may request a focused remote audit.

What “good” looks like in practice

- DPA references a real technical architecture, not a template

- Subprocessor list is public and updated

- Data residency is explicit

- Deletion is actually tested, and they can show how

What fails in practice

- The vendor offers a DPA but cannot explain retention or deletion

- They rely on “legitimate interest” language where consent or contractual necessity is the required legal basis

- They cannot support data subject requests within your timelines

If you are in healthcare or payment flows, map GDPR terms to HIPAA BAAs or PCI DSS responsibilities. Different regimes, same pattern: clear roles, clear evidence, and no hidden data flows.

GDPR meets AI

AI vendors often treat prompts as logs. That is where risk hides.

Ask directly:

- Are prompts and outputs considered personal data in your system?

- Can we disable storage of prompts and outputs?

- Can we delete by user, tenant, and time range?

- Do you use our data to improve models? Is it opt in?

If they cannot offer clear retention controls, assume your data will be retained longer than you want.

What this checklist buys you

Outcomes procurement, security, and engineering can agree on

Faster approvals

Clear tiering and standardized evidence requests reduce back and forth and unblock procurement.

Fewer surprises in audit

You can point to artifacts, scope, and contract terms instead of relying on vendor statements.

Cleaner incident handling

Defined notification timelines, update cadence, and log access reduce time to containment.

Comparable vendor choices

Control mapping turns questionnaires into a consistent scorecard across vendors.

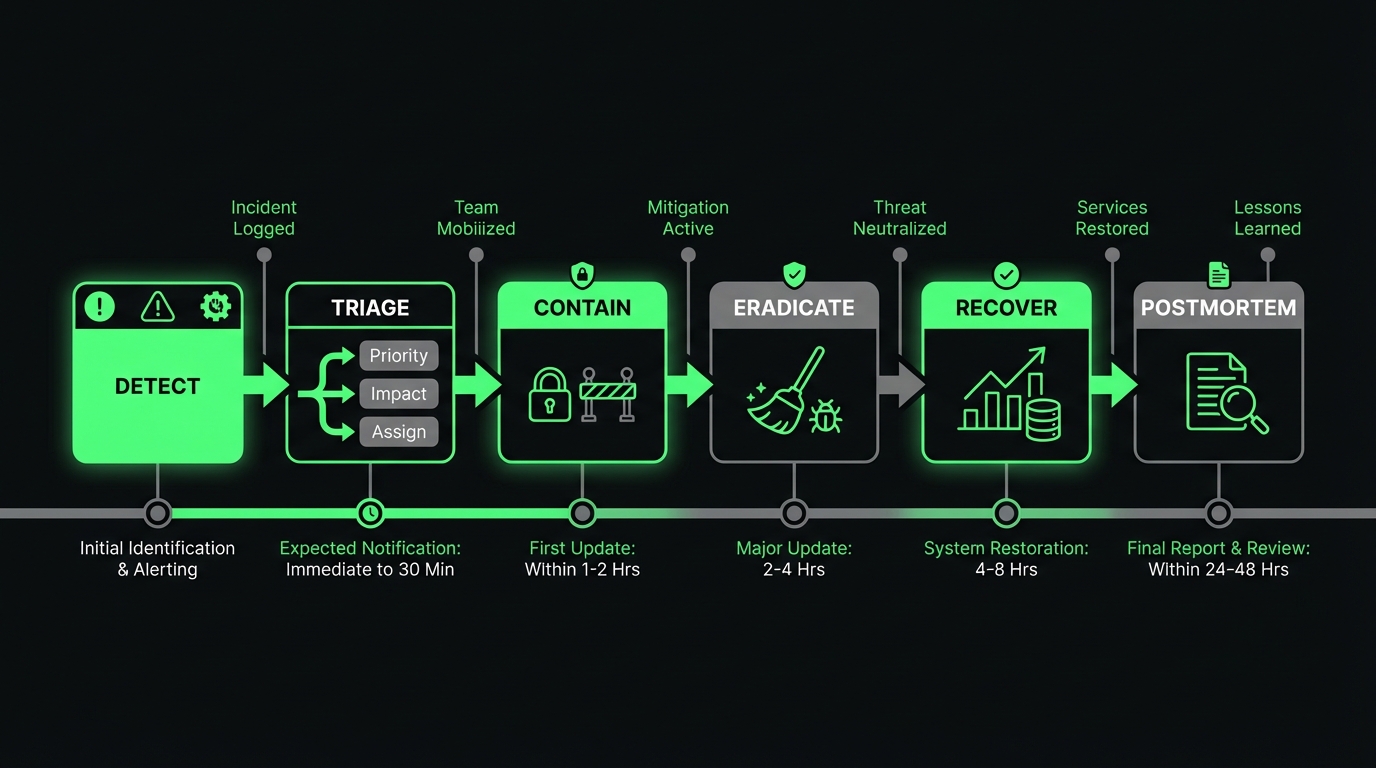

Incident response expectations and SLAs

Most contracts talk about uptime. Few talk about response quality.

Scope Before Questionnaires

One page intake + tiering

Why it matters: Most reviews fail because the scope is fuzzy. Everyone assumes worst case, the questionnaire explodes, and nothing gets validated.

Do this first (15 to 30 minutes):

- Capture data types (PII, PHI, PCI, credentials, prompts), data flow, hosting region, integration path (SSO, SCIM, API keys), and subprocessors.

- Assign a risk tier (1 to 3) based on data exposure and blast radius. Tie it to a decision owner so it does not stall.

Common misses (and the fix):

- You assess the vendor but not subcontractors → require a subprocessor list with purpose and data touched.

- You skip restore tests and incident drills → ask for the last backup restore result and last incident exercise date.

What to measure (hypothesis): cycle time from intake to decision, and % of findings that are operational (logging, access reviews, drills) vs technical.

Silence on response quality is a problem. In regulated industries, the question is not “will an incident happen?” It is “how fast will we know, and what will we get?”

Incident response checklist

Request the vendor’s incident response plan. Then validate it with specific questions.

- How do you classify severity (SEV1 to SEV4)?

- Who is on call, and what is the escalation path?

- Do you run incident drills? How often?

- Do you have a post incident review template?

- Can you share redacted examples of prior incident reports?

Example: In large scale platforms like virtual events, the difference between a rough launch and a controlled launch is often observability and escalation clarity, not just infrastructure size.

SLA and SLO terms that hold up

Use measurable commitments. Avoid vague language like “commercially reasonable.”

| Area | Ask for | What good looks like |
|---|---|---|
| Availability | Monthly uptime and exclusions | Clear definition of downtime and maintenance windows |
| Support | Response time by severity | SEV1 response in minutes, not days |
| Incident updates | Update cadence | First update within 60 minutes, then hourly |
| RPO and RTO | Recovery targets | Targets that match your business process |
| Credits | Service credits | Credits that scale with impact |
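An uptime percentage is easier to negotiate once it is converted into minutes. The helper below is a small sketch of that arithmetic. It assumes a 30 day month and ignores contractual exclusions such as maintenance windows, which would change the numbers in a real contract.

```python
def allowed_downtime_minutes(uptime_percent: float, days_in_month: int = 30) -> float:
    """Minutes of downtime that a monthly uptime commitment permits."""
    total_minutes = days_in_month * 24 * 60
    return total_minutes * (1 - uptime_percent / 100)


for target in (99.0, 99.9, 99.99):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% uptime allows {minutes:.1f} minutes of downtime per 30 day month")
```

At 99.9 percent, the budget is about 43 minutes a month. Compare that with your own RTO before accepting the number.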

Minimal incident clause set

- Notification timeline (24 hours after confirmation is common for serious events)

- Update cadence and communication channels

- Root cause analysis delivery timeline (for example, 5 to 10 business days)

- Cooperation obligations (logs, forensics, customer notifications)
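Notification and update commitments are only useful if you check them after a real incident. The sketch below compares incident timestamps against the 24 hour notification limit and the 60 minute first update from the SLA table. Measuring the first update from the moment of notification is an assumption, because the table does not state the starting point, and the function name is hypothetical.

```python
from datetime import datetime, timedelta

# Limits from the clause set and SLA table above; adjust to your contract.
NOTIFY_WITHIN = timedelta(hours=24)          # after the vendor confirms the incident
FIRST_UPDATE_WITHIN = timedelta(minutes=60)  # after notification (assumed start point)


def clause_breaches(confirmed_at: datetime, notified_at: datetime,
                    first_update_at: datetime) -> list:
    """Return a description of each timing commitment the vendor missed."""
    breaches = []
    if notified_at - confirmed_at > NOTIFY_WITHIN:
        breaches.append("notification later than 24 hours after confirmation")
    if first_update_at - notified_at > FIRST_UPDATE_WITHIN:
        breaches.append("first update later than 60 minutes after notification")
    return breaches


# Hypothetical incident: notified 30 hours after confirmation, update 45 minutes later.
confirmed = datetime(2025, 3, 1, 2, 0)
print(clause_breaches(
    confirmed_at=confirmed,
    notified_at=confirmed + timedelta(hours=30),
    first_update_at=confirmed + timedelta(hours=30, minutes=45),
))
```

Running this against the timeline in a post incident report gives you a factual basis for service credit or remediation conversations.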

What to measure after go live

Due diligence is not a one time event. Set a small set of operational metrics.

- Mean time to acknowledge support tickets

- Mean time to resolve SEV1 and SEV2

- Patch latency for critical CVEs

- Access review completion rate

- Backup restore test pass rate

If you lack baseline data, treat these as hypotheses and measure them in the first 90 days.
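Most of these metrics reduce to a mean over timestamps or a simple ratio. Below is a minimal sketch using only the Python standard library. The ticket data is invented, and the function names are hypothetical.

```python
from datetime import datetime
from statistics import mean

# Hypothetical ticket export: (opened_at, acknowledged_at) pairs.
tickets = [
    (datetime(2025, 3, 1, 9, 0), datetime(2025, 3, 1, 9, 12)),
    (datetime(2025, 3, 2, 14, 0), datetime(2025, 3, 2, 14, 48)),
    (datetime(2025, 3, 3, 2, 0), datetime(2025, 3, 3, 2, 30)),
]


def mean_time_to_acknowledge_minutes(tickets) -> float:
    """Average minutes between a ticket being opened and acknowledged."""
    return mean((ack - opened).total_seconds() / 60 for opened, ack in tickets)


def completion_rate(completed: int, scheduled: int) -> float:
    """Share of scheduled access reviews or restore tests that completed or passed."""
    if scheduled == 0:
        raise ValueError("nothing was scheduled, so the rate is undefined")
    return completed / scheduled


print(f"MTTA: {mean_time_to_acknowledge_minutes(tickets):.0f} minutes")
print(f"Access review completion: {completion_rate(3, 4):.0%}")
```

Recording the first 90 days with a script like this gives you the baseline that later reviews can be compared against.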

Conclusion

Vendor due diligence is engineering work with legal consequences. If you keep it evidence based, you can move fast without gambling.

Here is a simple way to run it:

- Scope the vendor by data and blast radius.

- Map the questionnaire to controls.

- Validate ISO and SOC 2 with targeted evidence.

- Lock down GDPR and DPA terms that change your risk.

- Put incident response and SLAs in writing.

- Measure performance after go live.

Insight: The best outcome is not a perfect vendor. It is a contract and operating model that makes problems visible early.

Actionable next steps you can do this week:

- Create a Tier 1 to 3 intake form and require it for every purchase

- Add an evidence column to your AI vendor security questionnaire

- Standardize your DPA clause requests for subprocessors, retention, and incident notice

- Ask every vendor for one redacted incident report and one restore test record

If you do those four, your next audit conversation gets easier. Your next incident gets smaller.