Introduction

You need to ship. You also need to stay secure, keep latency down, and avoid a hiring plan that collapses under budget review.

That is the real tension behind build vs buy software decisions. It is rarely about ideology. It is about speed to market, total cost of ownership, and risk you can actually carry.

This playbook gives you practical models you can run in a spreadsheet:

- TCO math for hiring vs contractors vs agencies

- Onboarding time and delivery velocity modeling

- A risk model for external execution

- Quality gates and acceptance criteria you can enforce

- An exit plan and handover strategy that does not trap you

Proof point: Across 360+ delivered projects (including regulated and enterprise contexts), the pattern is consistent: the best outcomes happen when scope, quality gates, and ownership boundaries are explicit from day one.

A simple definition set

Let’s align terms. Most teams use these interchangeably, and that creates bad decisions.

- Build: your employees deliver and run it. You own delivery management, hiring, and ops.

- Buy: you adopt a product (SaaS, platform, component). You configure and integrate.

- Partner: an external team ships with you. You still own the product, roadmap, and long term outcomes.

Outsourcing vs in house engineering is not binary. Many CTOs run a hybrid: internal core, partner for bursts, and buy for non differentiating capabilities.

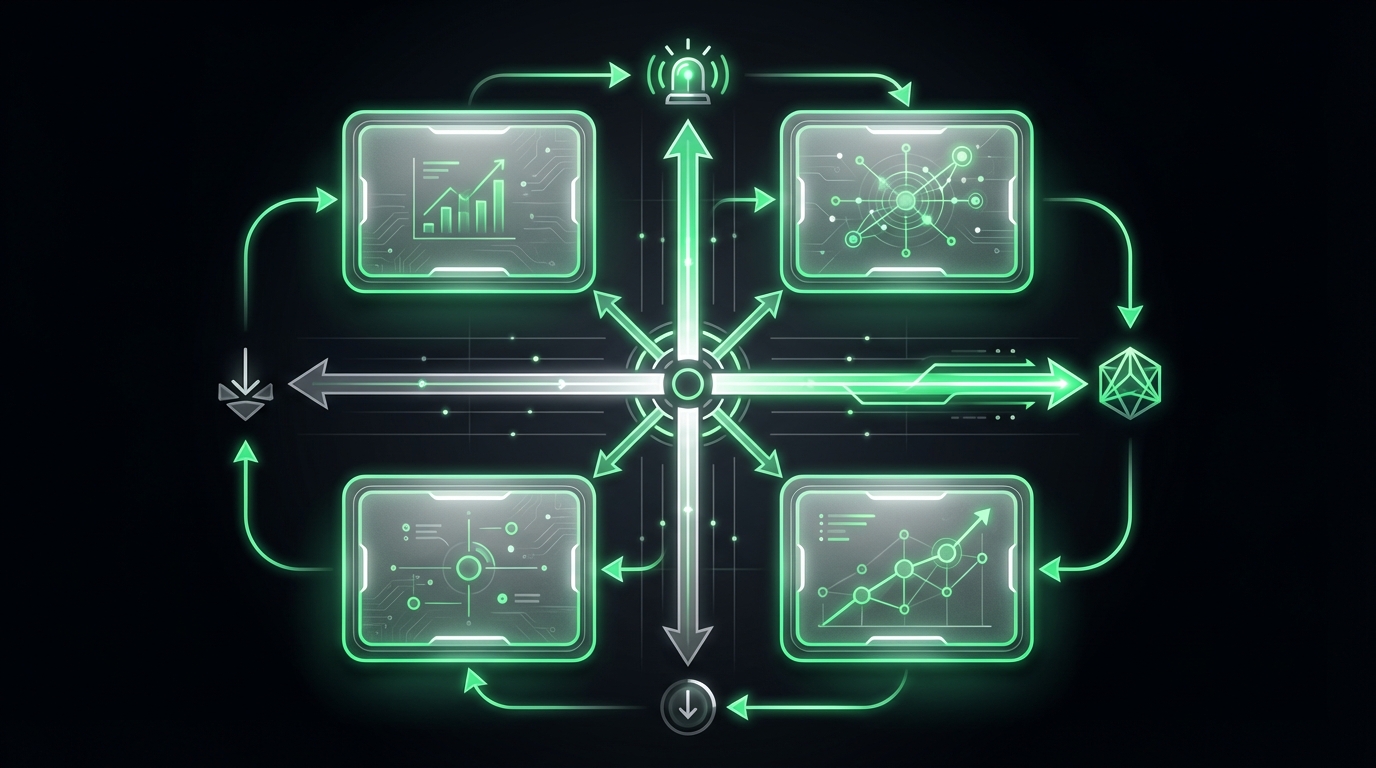

Delivery realities

Numbers that shape planning and expectations

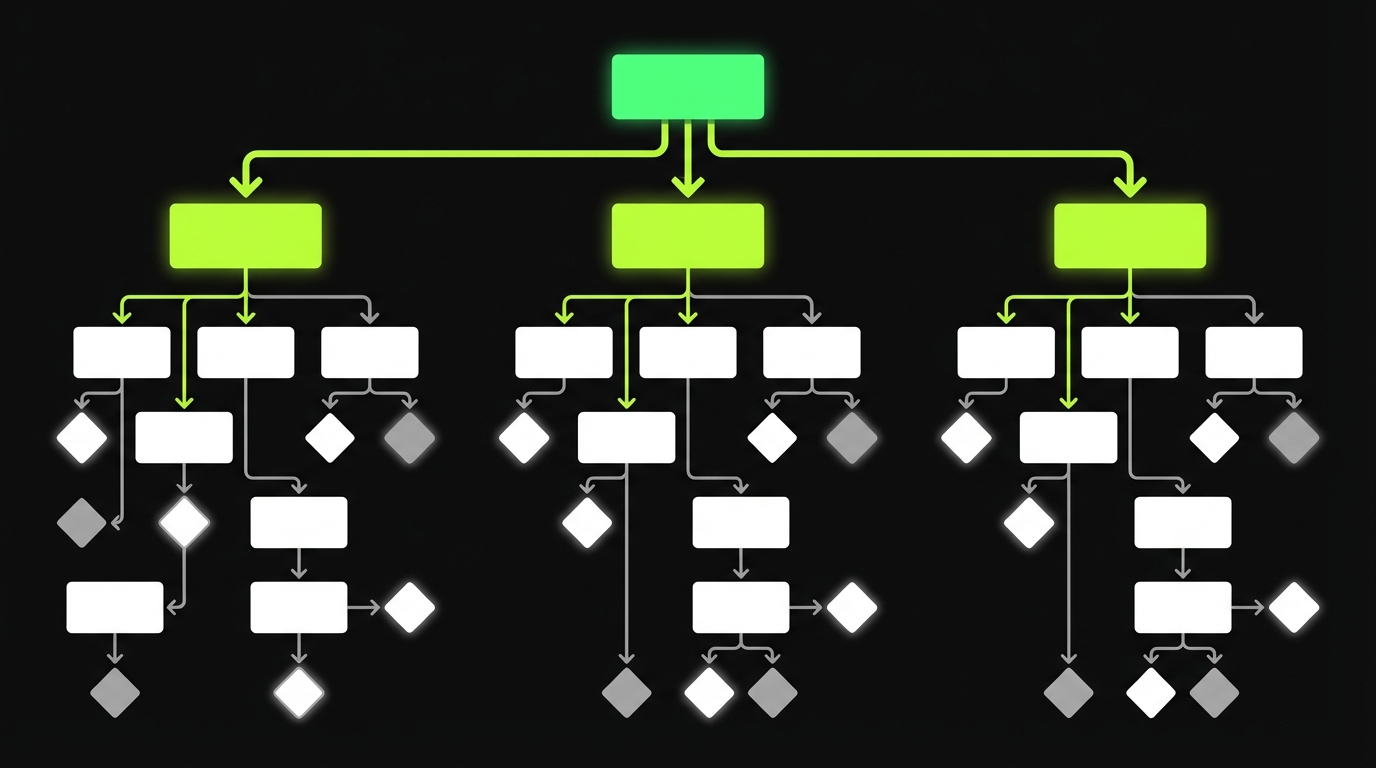

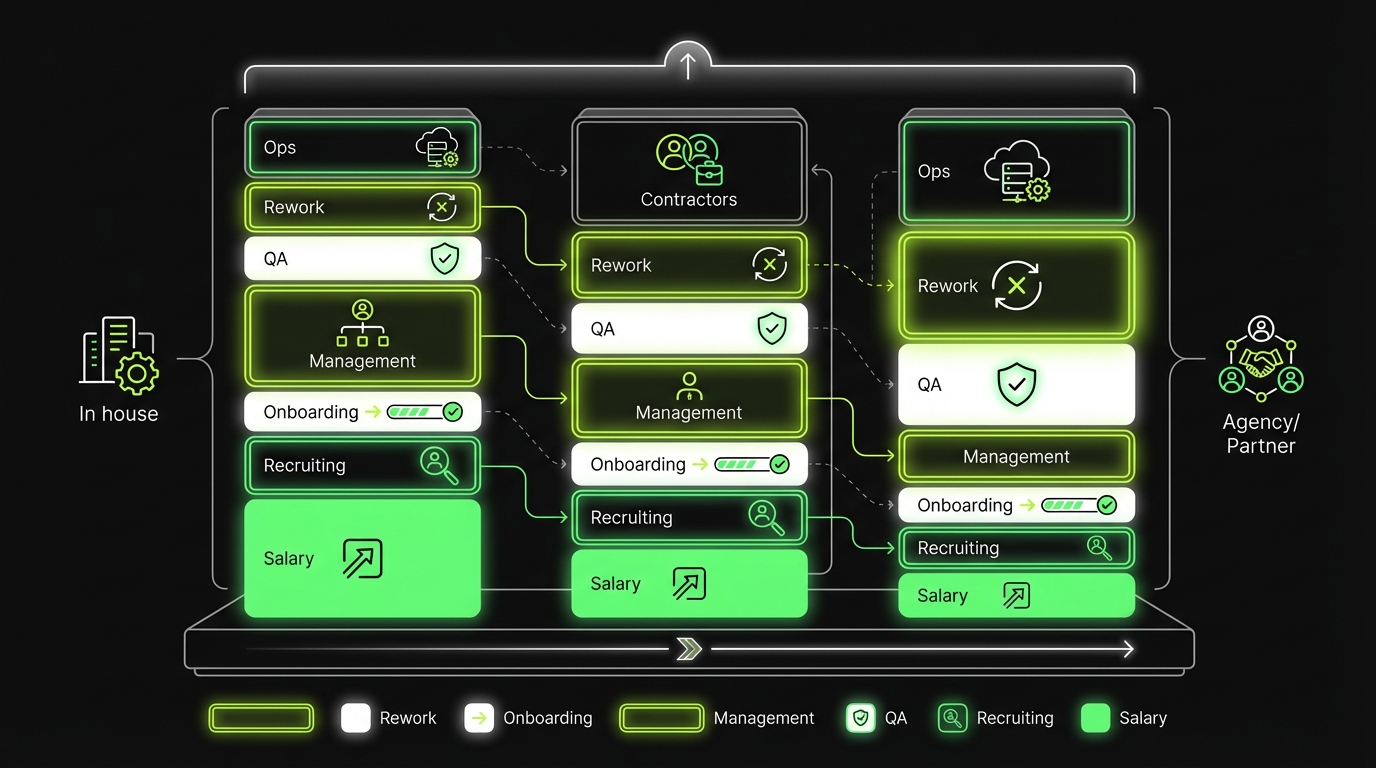

Build vs buy vs partner process

A repeatable flow for CTO decision making


Choose the right path

Start with constraints, not preferences. The decision is usually obvious once you quantify what matters.

Here are the most common drivers:

- Differentiation: does this capability win deals or reduce churn?

- Compliance by design: do you need audit trails, data residency, SOC 2, HIPAA, or strict access controls?

- Performance: are you solving latency, throughput, or cost per request at scale?

- Roadmap volatility: will requirements change weekly based on user feedback?

- Internal capacity: do you have staff who can own architecture and code reviews?

Insight: Teams get burned when they treat “buy” as zero engineering. Integration, data migration, security review, and change management often cost more than the first year of licenses.

Quick comparison table

| Dimension | Build | Buy | Partner |
|---|---|---|---|
| Speed to first release | Medium | Fast | Fast |
| Custom fit | High | Medium | High |
| Long term flexibility | High | Low to Medium | High |
| Upfront cost | High | Low to Medium | Medium |
| Ongoing cost predictability | Medium | High | Medium |
| Compliance control | High | Medium | High |
| Talent dependency | High | Low | Medium |

When each option wins

- Build wins when the feature is differentiating, long lived, and you can staff it with seniors who will stay.

- Buy wins when the capability is commodity and the vendor’s constraints are acceptable.

- Partner wins when time matters, hiring is slow, or you need specialist execution (for example, AI feature delivery with dedicated QA gates).

A practical hybrid pattern

A common pattern that holds up:

- Internal team owns architecture, security posture, and critical paths.

- Partner team owns delivery throughput on defined epics.

- You buy commodity tooling (auth provider, analytics, billing) and integrate.

This keeps core knowledge in house and stops the “cost to hire a dev team vs an agency” debate from turning into a religious war.

What good partnering looks like

Force multiplier patterns that keep ownership in house

Faster time to first release

A self directed senior team can ship a thin slice quickly when scope and gates are explicit.

Predictable throughput

Velocity becomes measurable when work lands in your repos, runs under your CI, and ships on a fixed release cadence.

Reduced hiring pressure

You can delay permanent headcount decisions until you have real usage data and a stable roadmap.

Better risk control

Security, quality, and exit terms are enforceable when they are written as acceptance criteria and handover milestones.

Total cost of ownership

TCO is where most build vs buy software conversations get real. Not because CTOs love spreadsheets. Because budgets are finite.

You need to model fully loaded cost, not salary. And you need to include the hidden work: onboarding, management overhead, rework, and operational load.

TCO: hiring vs contractors vs agencies

Key cost buckets to include:

- Compensation and taxes

- Recruiting fees and time cost (your engineers interviewing)

- Equipment, tools, and cloud environments

- Engineering management and product overhead

- QA and release management

- Security and compliance work

- Attrition and backfill

Key stat: If you do not model onboarding and management overhead, your plan will look 20% to 40% cheaper than it will feel in execution. Treat that range as a hypothesis and validate it by tracking time spent in interviews, onboarding, and rework.
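You can test that range against your own plan by comparing a salary-only estimate with a fully loaded one. Below is a minimal Python sketch. The bucket names and monthly figures are illustrative placeholders, not benchmarks, so replace them with numbers from your own payroll and time tracking.

```python
# Illustrative placeholder figures (per engineer, per month); not benchmarks.
SALARY_ONLY = {"compensation_and_taxes": 12_000}

FULLY_LOADED = {
    "compensation_and_taxes": 12_000,
    "recruiting_amortized": 800,     # fees plus interviewer time, spread over tenure
    "equipment_tools_cloud": 600,
    "management_overhead": 1_500,    # engineering management and product
    "qa_release": 700,
    "security_compliance": 400,
    "attrition_backfill": 500,
}


def monthly_total(buckets: dict[str, float]) -> float:
    """Sum every cost bucket for one month."""
    return sum(buckets.values())


def understatement(partial: dict[str, float], full: dict[str, float]) -> float:
    """Fraction by which the partial model understates the fully loaded cost."""
    return 1 - monthly_total(partial) / monthly_total(full)


gap = understatement(SALARY_ONLY, FULLY_LOADED)
print(f"Salary-only plan looks {gap:.0%} cheaper")  # prints: Salary-only plan looks 27% cheaper
```

Keep each bucket as a separate line item rather than one "overhead" multiplier. That way you can replace a placeholder with a measured value once you have tracked it.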

ROI calculator logic and inputs

Use this as spreadsheet logic. Keep it boring. Boring ships.

Inputs:

- Scope size: estimated story points or person weeks for v1

- Target launch date: weeks until first usable release

- Internal capacity: available engineer weeks per sprint (after support and meetings)

- Ramp time: weeks to reach expected throughput

- Cost per FTE month: fully loaded

- External rate: blended rate for contractor or agency

- Defect cost: expected rework percentage

- Opportunity value: revenue pulled forward or churn reduced per month

Core formulas:

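- Delivery time (weeks) = Scope / (Velocity per week), with scope converted to hours so the units match

- Velocity per week (hours) = (Team size) x (Productive hours per engineer per week) x (Efficiency factor)

- Total cost = (Internal cost x duration) + (External cost x duration) + one time onboarding cost, with cost rates and duration in the same time unit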

- ROI (simple) = (Opportunity value x months pulled forward) - Total cost
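The formulas above map onto a few small functions. Below is a minimal Python sketch. It assumes scope is expressed in productive hours (convert story points or person weeks first) and that a month has 52/12 weeks. The `DeliveryOption` class, its field names, and the sample numbers are hypothetical.

```python
from dataclasses import dataclass

WEEKS_PER_MONTH = 52 / 12  # average calendar weeks per month


@dataclass
class DeliveryOption:
    """One way to deliver the scope. All field names are illustrative."""
    name: str
    team_size: float
    productive_hours: float         # per engineer per week
    efficiency: float               # efficiency factor, 0..1
    internal_cost_per_week: float   # your people, including oversight of a partner
    external_cost_per_week: float   # contractor or agency invoice
    onboarding_cost: float          # one time


def velocity_per_week(o: DeliveryOption) -> float:
    # Velocity per week = team size x productive hours x efficiency factor
    return o.team_size * o.productive_hours * o.efficiency


def delivery_weeks(scope_hours: float, o: DeliveryOption) -> float:
    # Delivery time (weeks) = scope / velocity per week, scope in hours
    return scope_hours / velocity_per_week(o)


def total_cost(o: DeliveryOption, weeks: float) -> float:
    # (internal cost x duration) + (external cost x duration) + one time onboarding
    return (o.internal_cost_per_week + o.external_cost_per_week) * weeks + o.onboarding_cost


def simple_roi(scope_hours: float, internal: DeliveryOption,
               chosen: DeliveryOption, opportunity_value_per_month: float) -> float:
    internal_months = delivery_weeks(scope_hours, internal) / WEEKS_PER_MONTH
    chosen_weeks = delivery_weeks(scope_hours, chosen)
    months_pulled_forward = max(0.0, internal_months - chosen_weeks / WEEKS_PER_MONTH)
    return opportunity_value_per_month * months_pulled_forward - total_cost(chosen, chosen_weeks)


# Hypothetical inputs: a 2 person internal team vs a 4 person partner team.
internal = DeliveryOption("build", 2, 25, 0.6, 10_000, 0, 0)
partner = DeliveryOption("partner", 4, 25, 0.8, 2_000, 10_000, 15_000)
roi = simple_roi(2_400, internal, partner, opportunity_value_per_month=50_000)
print(f"ROI: {roi:,.0f}")  # ROI: 201,923
```

With these made-up numbers, the partner option pulls the release forward by about 11.5 months, and the opportunity value outweighs its cost. Drop the opportunity value to 20,000 per month and the same model returns a negative ROI.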

A minimal pseudo formula you can paste into a doc:

    months_pulled_forward = max(0, internal_delivery_months - chosen_option_delivery_months)
    roi = (opportunity_value_per_month * months_pulled_forward) - total_cost

Cost to hire dev team vs agency: what to compare

Do not compare a single salary to an agency invoice. Compare a production unit.

A typical production unit for product delivery includes:

- 1 Tech lead or staff engineer

- 1 to 3 engineers

- 0.5 QA (or shared QA function)

- 0.25 to 0.5 product or delivery management

If you cannot staff that unit internally, you will pay for it in delays and defects.
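To compare like with like, price the whole unit both ways. Below is a minimal Python sketch. The FTE mix comes from the list above (picking 2 engineers and 0.25 delivery management from the allowed ranges). The per-role monthly costs, the 160 hours per FTE month, and the blended hourly rate are hypothetical placeholders.

```python
# FTE mix for one production unit, taken from the list above.
PRODUCTION_UNIT = {
    "tech_lead": 1.0,
    "engineer": 2.0,        # the list allows 1 to 3
    "qa": 0.5,
    "delivery_mgmt": 0.25,  # the list allows 0.25 to 0.5
}

# Placeholder fully loaded costs per FTE month; substitute your own.
COST_PER_FTE_MONTH = {
    "tech_lead": 18_000,
    "engineer": 15_000,
    "qa": 11_000,
    "delivery_mgmt": 13_000,
}


def internal_unit_cost(unit: dict[str, float], costs: dict[str, float]) -> float:
    """Monthly cost of staffing the whole unit with employees."""
    return sum(fte * costs[role] for role, fte in unit.items())


def agency_unit_cost(unit: dict[str, float], blended_hourly_rate: float,
                     hours_per_fte_month: float = 160) -> float:
    """Monthly invoice if an agency staffs the same FTE mix at one blended rate."""
    return sum(unit.values()) * hours_per_fte_month * blended_hourly_rate


internal = internal_unit_cost(PRODUCTION_UNIT, COST_PER_FTE_MONTH)
agency = agency_unit_cost(PRODUCTION_UNIT, blended_hourly_rate=95)
```

In this made-up example the unit costs 56,750 a month internally versus 57,000 from the agency. Comparing the agency invoice to a single 15,000 salary instead would overstate the gap nearly fourfold.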

TCO traps that show up at scale

Watch these when you have performance and compliance constraints:

- Security review debt: external code lands, but no one budgets for threat modeling, pen tests, or access reviews.

- Platform cost drift: rushed architecture increases cloud spend. Track cost per active user or cost per request.

- Bus factor risk: one senior holds the system in their head. That is not a staffing plan.

Mitigation is simple but not easy: define ownership boundaries, set quality gates, and enforce documentation as part of “done”.

Onboarding time and velocity

The fastest team is rarely the largest. It is the team that reaches predictable throughput quickly.

ROI spreadsheet baseline

Boring math, useful decisions

Run the same inputs across build, buy, and partner: scope size, target date, internal capacity, ramp time, cost per FTE month, external rate, defect cost, and opportunity value. Use one simple comparison: months pulled forward.

    months_pulled_forward = max(0, internal_delivery_months - chosen_option_delivery_months)
    roi = (opportunity_value_per_month * months_pulled_forward) - total_cost

Failure mode: comparing one salary to an agency invoice. Compare a production unit (tech lead, 1 to 3 engineers, partial QA, partial delivery management) or your timeline will slip.

You can model onboarding time with simple math. The goal is not precision. The goal is avoiding fantasy timelines.

Onboarding time math

Break onboarding into phases:

- Access and environments: accounts, repos, CI, secrets, staging

- Architecture orientation: domains, data flows, critical paths

- Delivery alignment: backlog, definition of done, release process

- First production change: the real start of learning

A rough model:

- Week 1: 20% throughput

- Week 2: 50% throughput

- Week 3 to 4: 70% to 90% throughput

If you have heavy compliance requirements, add time for:

- secure laptop and MDM

- least privilege access

- audit logging

- data handling training
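The ramp percentages and the extra compliance setup can be folded into one small model that converts "weeks of work" into calendar weeks. Below is a minimal Python sketch. It reads "week 3 to 4: 70% to 90%" as 70% in week 3 and 90% in week 4, and assumes full throughput after that. `compliance_delay_weeks` is a hypothetical parameter for zero-throughput weeks spent on laptops, MDM, access, and training.

```python
# Ramp from the rough model above: weeks 1 to 4 at 20%, 50%, 70%, 90%.
DEFAULT_RAMP = (0.2, 0.5, 0.7, 0.9)


def weekly_throughput(week: int, ramp: tuple[float, ...] = DEFAULT_RAMP,
                      compliance_delay_weeks: int = 0) -> float:
    """Fraction of full throughput in a given week (0-indexed)."""
    if week < compliance_delay_weeks:
        return 0.0  # still waiting on access, devices, or training
    ramp_week = week - compliance_delay_weeks
    return ramp[ramp_week] if ramp_week < len(ramp) else 1.0


def calendar_weeks_needed(full_speed_weeks: float, ramp: tuple[float, ...] = DEFAULT_RAMP,
                          compliance_delay_weeks: int = 0) -> int:
    """Calendar weeks one engineer needs to deliver `full_speed_weeks` of work."""
    done, week = 0.0, 0
    while done < full_speed_weeks:
        done += weekly_throughput(week, ramp, compliance_delay_weeks)
        week += 1
    return week


print(calendar_weeks_needed(4))                            # 6
print(calendar_weeks_needed(4, compliance_delay_weeks=1))  # 7
```

Under this model, four weeks of full-speed work take six calendar weeks once the ramp is included, and seven with one week of access setup in front of it.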

Insight: Your onboarding bottleneck is usually not code. It is access, unclear ownership, and missing test environments.

Delivery velocity modeling

Use a conservative throughput model:

- Productive hours per engineer per week: 20 to 28 (meetings, reviews, support are real)

- Efficiency factor: 0.6 to 0.8 depending on domain maturity

- Rework factor: add 10% to 30% unless you have strong quality gates
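Running the optimistic and conservative ends of these ranges side by side shows how much the assumptions alone move the date. Below is a minimal Python sketch. It assumes rework inflates scope as `scope x (1 + rework)`. The 1,000 hour scope and 3 person team are hypothetical.

```python
def delivery_weeks(scope_hours: float, team_size: int, productive_hours: float,
                   efficiency: float, rework: float) -> float:
    """Weeks to deliver scope, inflating it by the rework factor."""
    velocity = team_size * productive_hours * efficiency  # effective hours per week
    return scope_hours * (1 + rework) / velocity


# Bounds taken from the ranges above; scope and team size are hypothetical.
SCOPE_HOURS, TEAM = 1_000, 3
optimistic = delivery_weeks(SCOPE_HOURS, TEAM, productive_hours=28, efficiency=0.8, rework=0.10)
conservative = delivery_weeks(SCOPE_HOURS, TEAM, productive_hours=20, efficiency=0.6, rework=0.30)
print(f"{optimistic:.1f} to {conservative:.1f} weeks")  # 16.4 to 36.1 weeks
```

The same scope spans more than a 2x range depending on assumptions. That spread is why the conservative end belongs in the plan until real cycle time data replaces it.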

A quick checklist to make velocity real:

- Define what counts as “shipped” (production, monitored, documented)

- Track cycle time from “in progress” to “released”

- Track escaped defects per release

- Track performance budgets (p95 latency, error rate)
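Cycle time and escaped defects are easy to compute from a tracker export. Below is a minimal Python sketch. It assumes each work item has an "in progress" timestamp and a "released" timestamp in `YYYY-MM-DD HH:MM` format. The sample items are hypothetical. The median is used so that one stuck ticket does not dominate the number.

```python
from datetime import datetime
from statistics import median

FMT = "%Y-%m-%d %H:%M"


def cycle_time_days(in_progress_at: str, released_at: str) -> float:
    """Days from 'in progress' to 'released' for one work item."""
    start = datetime.strptime(in_progress_at, FMT)
    end = datetime.strptime(released_at, FMT)
    return (end - start).total_seconds() / 86_400


def escaped_defects_per_release(escaped_by_release: list[int]) -> float:
    """Average number of production defects traced back to each release."""
    if not escaped_by_release:
        return 0.0
    return sum(escaped_by_release) / len(escaped_by_release)


# Hypothetical work items exported from a tracker.
items = [
    ("2024-03-01 09:00", "2024-03-04 09:00"),
    ("2024-03-02 09:00", "2024-03-03 21:00"),
    ("2024-03-05 10:00", "2024-03-12 10:00"),
]
median_cycle = median([cycle_time_days(s, e) for s, e in items])
defects = escaped_defects_per_release([0, 2, 1])
```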

30 60 90 day ramp plan

You want a ramp plan for internal hires and for external execution. Same principle. Different levers.

- Days 0 to 30: ship a thin slice to production. Build trust. Establish CI, environments, and quality gates.

- Days 31 to 60: increase scope. Reduce cycle time. Add observability and performance budgets.

- Days 61 to 90: harden. Add compliance controls. Plan the handover and reduce key person risk.

This is where outsourcing vs in house engineering becomes measurable. If an option cannot ship a thin slice in 30 days, it will not magically accelerate later.

A concrete ramp example

In our experience building a real time conversational AI avatar with a brand experience agency, the delivery constraint was latency and “feels natural” interaction. The timeline was four weeks.

What made it possible was not heroics. It was focus:

- narrow scope for v1

- strict performance targets

- fast feedback loops

Example: A four week timeline is achievable when you treat the first release as a controlled experiment with explicit acceptance criteria, not a full product.

Vendor interview script

Questions that surface risk fast

Use these in the first call:

- Show me a recent architecture decision you reversed. Why?

- What are your non negotiable quality gates?

- How do you handle security reviews and least privilege access?

- Where does code live and who owns CI?

- What is your plan for handover if we stop in 60 days?

If answers are vague, you have your answer.

External execution risk

Partnering can compress timelines. It can also create a new class of risk. Model it explicitly.

Model TCO honestly

Count hidden work

Use fully loaded cost, not salary. Include recruiting time, onboarding, management overhead, QA, security, rework, and ops. Key check: if you skip onboarding and management overhead, plans often look 20% to 40% cheaper than execution. Treat that range as a hypothesis. Measure interview hours, onboarding time to first merged PR, and rework rate per sprint.

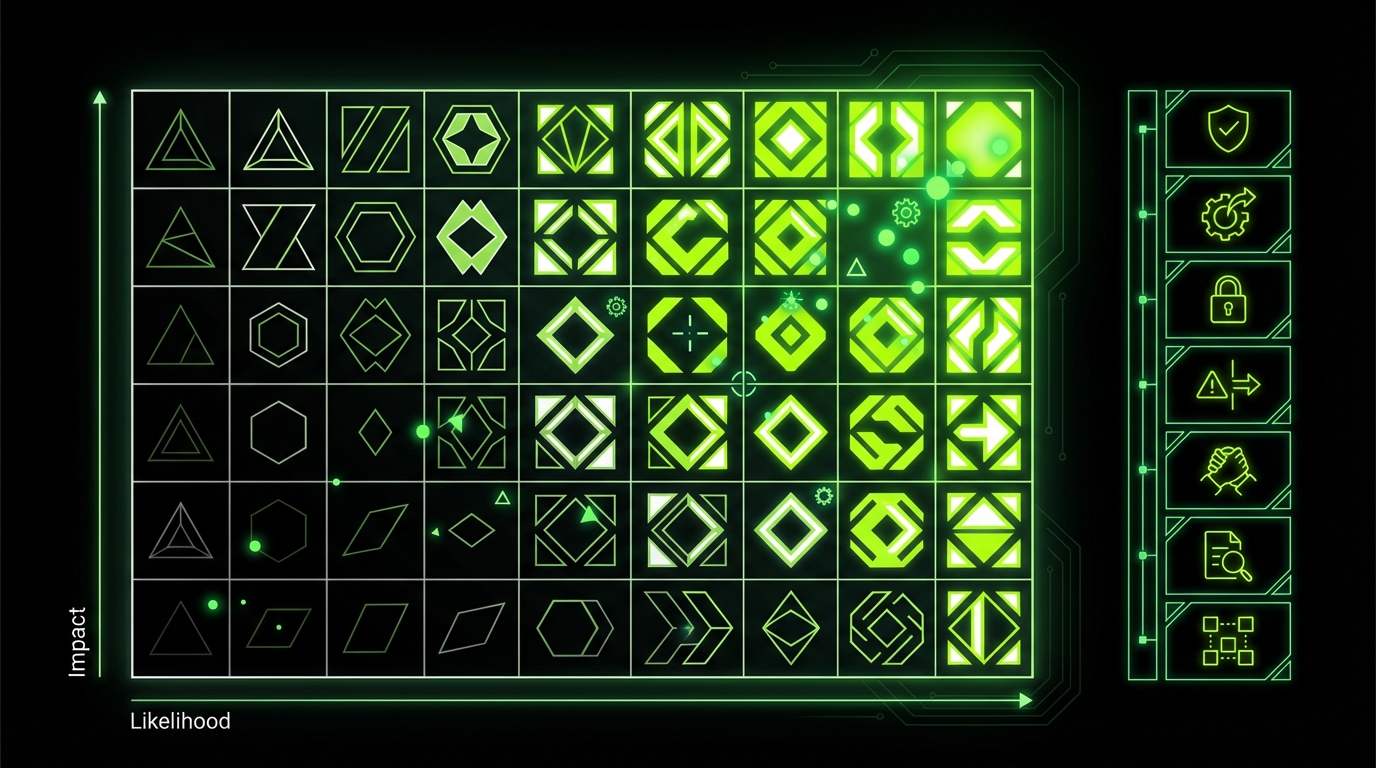

Risk model for external execution

Score each risk 1 to 5 for likelihood and impact. Multiply for a risk score.

Common risks:

- Domain misunderstanding: wrong assumptions, wrong abstractions

- Security posture mismatch: weak access controls, missing audit trails

- IP and licensing issues: unclear ownership, dependencies with restrictive licenses

- Hidden subcontracting: unknown engineers touching your code

- Delivery opacity: progress reports without working software

- Quality debt: shipping without tests, observability, or performance budgets

Mitigations you can enforce:

- Require a working increment every sprint

- Require code to land in your repos under your CI

- Enforce least privilege access and audit logging

- Make architecture decisions explicit and reviewed

Key stat: If you cannot see code, tests, and deployment history in your own systems, you are not managing risk. You are hoping.
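The 1 to 5 scoring above fits in a few lines. Below is a minimal Python sketch. The (likelihood, impact) pairs are placeholders, not assessments of any real vendor. The cut-off of 12 for "needs a named mitigation" is a hypothetical threshold, so pick your own.

```python
def risk_score(likelihood: int, impact: int) -> int:
    """Likelihood x impact, each scored 1 to 5."""
    for value in (likelihood, impact):
        if not 1 <= value <= 5:
            raise ValueError(f"scores must be 1 to 5, got {value}")
    return likelihood * impact


# Placeholder (likelihood, impact) pairs for the risks listed above.
RISKS = {
    "domain_misunderstanding": (3, 4),
    "security_posture_mismatch": (2, 5),
    "ip_and_licensing": (2, 4),
    "hidden_subcontracting": (2, 3),
    "delivery_opacity": (3, 3),
    "quality_debt": (4, 4),
}

# Highest scores first.
ranked = sorted(((name, risk_score(*pair)) for name, pair in RISKS.items()),
                key=lambda item: item[1], reverse=True)

# Hypothetical cut-off: anything at 12 or above needs a named mitigation before kickoff.
needs_mitigation = [name for name, score in ranked if score >= 12]
```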

Vendor red flags checklist

Treat these as stop signs or at least renegotiation triggers:

- They avoid naming who will do the work

- They cannot explain their QA approach beyond “we test it”

- They push for long fixed scope before discovery

- They refuse to work in your repos or under your CI

- They do not ask about compliance, data classification, or threat models

- They promise exact dates without showing assumptions

- They treat documentation as “nice to have”

Evaluation scorecard template

Use a simple weighted scorecard. Keep it visible.

| Criteria | Weight | Score 1 to 5 | Notes |
|---|---|---|---|
| Seniority and stability of team | 20 | | |
| Security and compliance by design | 20 | | |
| Delivery transparency and cadence | 15 | | |
| Quality gates and test strategy | 15 | | |
| Architecture and scalability chops | 15 | | |
| Communication and time zones | 10 | | |
| Exit plan and handover | 5 | | |

Add one rule: if security scores under 4, it is a no.
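The scorecard and its security rule can be evaluated together so the rule is never averaged away. Below is a minimal Python sketch. The weights come from the table above (they sum to 100). The key names and the two vendors' scores are hypothetical.

```python
WEIGHTS = {
    "seniority_and_stability": 20,
    "security_and_compliance": 20,
    "delivery_transparency": 15,
    "quality_gates_and_tests": 15,
    "architecture_and_scalability": 15,
    "communication_and_time_zones": 10,
    "exit_plan_and_handover": 5,
}
SECURITY_FLOOR = 4  # the rule above: a security score under 4 is a no


def evaluate(scores: dict[str, int]) -> tuple[float, bool]:
    """Return (weighted average on the 1 to 5 scale, passes the security rule)."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores: {sorted(missing)}")
    weighted = sum(WEIGHTS[k] * scores[k] for k in WEIGHTS) / sum(WEIGHTS.values())
    return weighted, scores["security_and_compliance"] >= SECURITY_FLOOR


# Hypothetical vendors, scored in the same order as WEIGHTS.
vendor_a = dict(zip(WEIGHTS, [4, 5, 4, 4, 3, 4, 3]))
vendor_b = dict(zip(WEIGHTS, [5, 3, 5, 5, 5, 5, 5]))
```

In this example, vendor B averages 4.6 against vendor A's 4.0 but still fails on security. Returning the rule as a separate flag keeps that visible.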

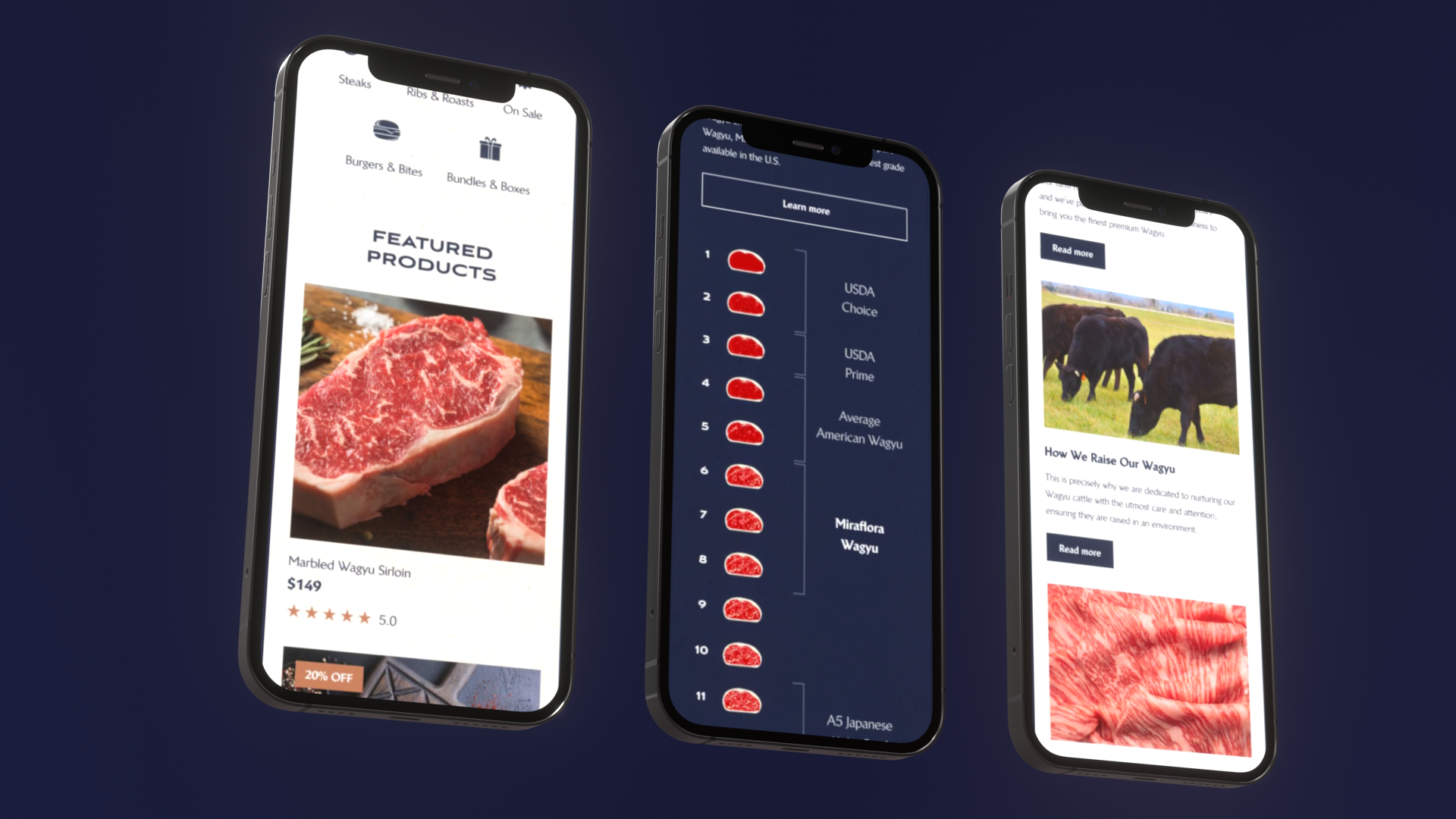

A real delivery constraint example

When we delivered a custom Shopify experience for Miraflora Wagyu in four weeks, the risk was not “can we build Shopify pages.” It was coordination across time zones and fast feedback.

The mitigation was process:

- async first communication

- short decision loops

- clear acceptance criteria for each weekly milestone

That is the kind of risk you should surface early. Not after the timeline slips.

Definition of done starter

Production ready, not demo ready

Minimum bar:

- Tests for critical paths

- Dependency scanning and secrets hygiene

- Observability in place (logs, metrics, alerts)

- Performance budgets agreed and measured

- Runbook written for top 5 failure modes

If any item is “later,” write down when and who owns it.

Quality gates that prevent rework

What to require regardless of delivery model

Security checks in CI

SAST and dependency scanning with fail conditions. Secrets never stored in code or tickets.

Performance budgets

Define p95 latency and error rate targets per critical flow. Alert on regressions.

Observability by default

Dashboards and alerts ship with features. On call has runbooks, not tribal knowledge.

AI specific evaluation

If you ship LLM features, maintain test datasets, regression checks, and drift monitoring.

Release hygiene

Feature flags, staged rollouts, and clear rollback steps. Every release has notes and owners.

Acceptance criteria

Ticket level criteria that product and engineering can both sign off. No “looks good to me” releases.

Quality gates and exit plans

Most delivery failures are not caused by bad intent. They come from vague definitions of “done” and no plan for what happens after launch.

Pick a path fast

Start with constraints

Decide on differentiation, compliance, performance, roadmap volatility, and internal capacity. If a capability wins deals or reduces churn, build (or partner with strict ownership boundaries). If it is commodity, buy, but budget for integration work. Failure mode: treating “buy” as zero engineering. You still pay for data migration, security review, and change management. Track these hours in the first 4 to 6 weeks to validate the assumption.

Quality gates and acceptance criteria

Make quality gates part of the contract and part of sprint acceptance.

Minimum acceptance criteria for production ready work:

- Functional: meets user story, edge cases covered

- Testing: unit and integration tests for critical paths

- Security: threat model for sensitive flows, secrets handling, access reviews

- Performance: latency and error budgets defined and measured

- Observability: logs, metrics, traces, dashboards, alerts

- Documentation: runbooks for on call and common incidents

A simple “definition of done” checklist:

- Code merged into main under your CI

- Tests green, coverage meets agreed floor

- Security checks passed (SAST, dependency scan)

- Deployed to staging with release notes

- Product sign off using acceptance criteria

- Deployed to production with monitoring

Insight: If QA is only manual at the end, you will pay for it twice. Once in delays. Once in incidents.

Exit plan and handover strategy

Assume you will change the delivery model later. Plan for it now.

Exit plan components:

- Repo ownership: code in your org, not theirs

- Access: remove vendor access within 24 hours when needed

- Documentation: architecture decision records, runbooks, onboarding docs

- Knowledge transfer: recorded walkthroughs, pairing sessions, Q and A backlog

- Operational handover: alert ownership, incident process, SLOs

A clean handover timeline:

- Week 1: documentation baseline and system map

- Week 2: shadow on call and incident drills

- Week 3: internal team runs releases, partner supports

- Week 4: partner steps back, only advisory

What to do with AI features

If your product includes LLM or ML behavior, add AI specific gates:

- evaluation datasets for correctness and safety

- prompt and retrieval regression tests

- drift monitoring and alerting

- latency budgets for model calls

This mirrors what we do in AI QA delivery: treat AI behavior as a product inside the product. Test it like one.

Example: For AI features, two runs can differ even with the same input. Your acceptance criteria must include variance, not just “it worked once.”
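One way to write variance into acceptance criteria is to run the same input several times and require a minimum pass rate. Below is a minimal Python sketch. `fake_model` is a deterministic stand-in for a real model call. The substring check, the 20 runs, and the 0.9 threshold are hypothetical choices, not recommended values.

```python
import itertools
from typing import Callable


def pass_rate(generate: Callable[[str], str], check: Callable[[str], bool],
              prompt: str, runs: int = 20) -> float:
    """Run the same input several times and return the fraction of runs that pass."""
    passes = sum(1 for _ in range(runs) if check(generate(prompt)))
    return passes / runs


def accept(rate: float, min_pass_rate: float) -> bool:
    return rate >= min_pass_rate


# Stand-in for a real model call: deterministic, fails 1 run in 10.
_outputs = itertools.cycle(["Refund approved."] * 9 + ["I am not sure."])


def fake_model(prompt: str) -> str:
    return next(_outputs)


rate = pass_rate(fake_model, lambda out: "Refund" in out, "Can I get a refund?", runs=20)
```

Against a real model, the pass rate itself varies between evaluation runs. A criterion such as "at least 18 of 20 runs pass" is therefore easier to sign off than "it worked once".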

Acceptance criteria you can paste into tickets

Use this format to reduce ambiguity:

- Given [context], when [action], then [expected outcome]

- Performance: p95 under [target] for [endpoint or flow]

- Security: data classified as [level], stored in [location], access limited to [roles]

- Observability: dashboard link, alert thresholds, runbook link

It looks strict. It saves you later.
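The performance line in this template can be checked automatically against measured samples. Below is a minimal Python sketch. It uses the nearest-rank percentile method, and the latency samples and budgets are hypothetical. Monitoring tools often compute percentiles differently (interpolation or histogram buckets), so name the method in the ticket.

```python
def p95(samples_ms: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def meets_performance_criteria(samples_ms: list[float], p95_target_ms: float,
                               errors: int, requests: int, max_error_rate: float) -> bool:
    """True when both the p95 latency budget and the error-rate budget hold."""
    error_rate = errors / requests if requests else 0.0
    return p95(samples_ms) <= p95_target_ms and error_rate <= max_error_rate


latencies = list(range(1, 101))  # hypothetical: 100 requests taking 1 to 100 ms
```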

Conclusion

Build vs buy software is a delivery strategy decision. Not a philosophy.

If you want one rule: choose the option that gets you to a monitored production release fastest, with a clear path to ownership.

A practical next step checklist:

- Run the TCO model with fully loaded costs and onboarding time

- Model delivery velocity with conservative productive hours

- Use a risk scorecard for external execution

- Write acceptance criteria and quality gates before sprint 1

- Put an exit plan in writing, including repo ownership and handover milestones

Final insight: The best CTOs do not avoid tradeoffs. They make them explicit, measure them, and revisit them when the data changes.