Introduction

Delivery speed usually dies from a thousand paper cuts. Every team solves the same problems. CI pipelines drift. Security reviews happen late. On call learns the system during an incident.

Platform engineering is the pragmatic response: build an internal developer platform that makes the right thing the easy thing. Not by forcing one stack. By offering platform engineering golden path workflows that teams can adopt in hours, then extend.

This article is written for engineering leaders who need faster delivery without trading away reliability, compliance, or hiring sanity.

You will get:

- A clear definition of golden paths and what they include

- How to do policy as code without turning into the platform police

- How to standardize paved road CI/CD while keeping self directed teams

- How to ship observability by default so MTTR actually drops

- A requirements matrix, rollout plan, scorecard, and anti patterns

Insight: A platform is not the goal. Measured outcomes are the goal. If lead time and MTTR do not move, you built an internal product that devs do not trust.

What we mean by golden paths

A golden path is a paved road for a common workflow. It is opinionated enough to be fast, but flexible enough to not trap teams.

A useful golden path typically includes:

- A service template or scaffolder

- A paved road CI/CD pipeline

- Policy as code checks with compliance ready defaults

- Standard telemetry: logs, traces, metrics

- A runbook baseline and on call hooks

The key is scope. Pick the workflows that happen every week, not the ones that happen once per quarter.

_> Proof points we use in planning

Benchmarks and delivery context that keep platform work grounded

Why delivery slows at scale

Most orgs do not slow down because engineers got worse. They slow down because the system around engineering becomes inconsistent.

Common failure modes we see when teams grow:

- Pipeline sprawl: every repo has a different CI, different caching, different secrets handling

- Security late in the cycle: findings arrive after the feature is “done”

- On call roulette: the first time you learn the service is during an incident

- Tool fatigue: five ways to deploy, three ways to add config, zero ways to know which is correct

- Hiring drag: onboarding takes weeks because tribal knowledge is the interface

Key Stat: DORA metrics are still the simplest executive language for delivery: lead time, deployment frequency, change failure rate, and MTTR. If you cannot measure them per team and per service, platform work becomes a vibes project.

The CTO constraint set

Platform engineering only sticks when it respects real constraints:

- Budget: platform headcount must pay for itself in reduced toil and fewer incidents

- Roadmap pressure: teams will route around anything that slows shipping

- Compliance: you need auditability without adding ticket queues

- Talent: senior engineers want autonomy, not a new gatekeeper

A good internal developer platform treats teams like customers. It earns adoption.

Internal developer platform requirements matrix

Use this to scope your first releaseScore each row 0 to 2. If your total is under 18, do not build a portal yet. Fix basics first.

| Area | Requirement | 0 | 1 | 2 |

|---|---|---|---|---|

| Golden paths | 1 to 2 paved workflows exist | None | Template only | Template plus deploy plus runbook |

| CI speed | PR feedback loop | >30 min | 15 to 30 min | <15 min |

| Policy as code | Automated baseline controls | Manual | Partial | CI plus deploy enforcement |

| Observability | Logs, traces, metrics | Ad hoc | Partial | Standard libraries and dashboards |

| Ownership | Clear service ownership | Unknown | Documented | On call and escalation wired |

| Self service | Day 1 needs | Tickets | Some self serve | Most actions self serve |

| Documentation | Getting started | Outdated | OK | Updated with examples |

| Platform SLO | Platform reliability | None | Informal | Defined and tracked |

Quick tip: keep the matrix visible. It prevents platform scope creep.

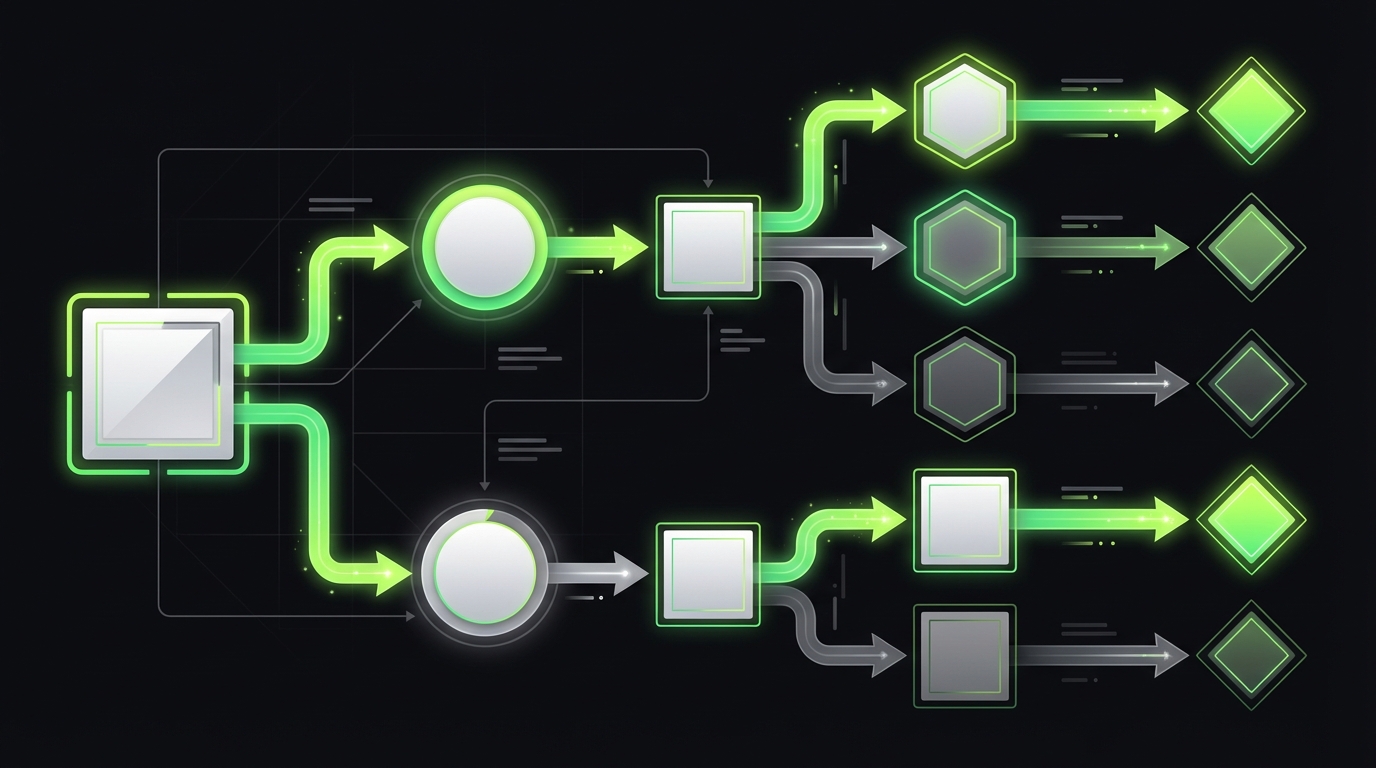

Golden path building blocks

_> What to standardize first

Service scaffolding

Generate a production ready repo with consistent structure, dependency rules, and local dev commands.

Paved road CI/CD templates

Shared pipelines with caching, security scans, preview deploys, and promotion rules.

Policy as code checks

Automated enforcement for security baselines, IaC rules, and software supply chain controls.

Observability by default

Standard libraries for logs, traces, and metrics plus dashboards and alert routing.

Ownership metadata

Service catalog entries, on call rotation, and escalation paths wired into incident tooling.

Escape hatches

Documented ways to diverge with explicit tradeoffs, exceptions, and support boundaries.

Golden paths that teams actually use

Golden paths work when they remove decisions that do not matter and make the important decisions explicit.

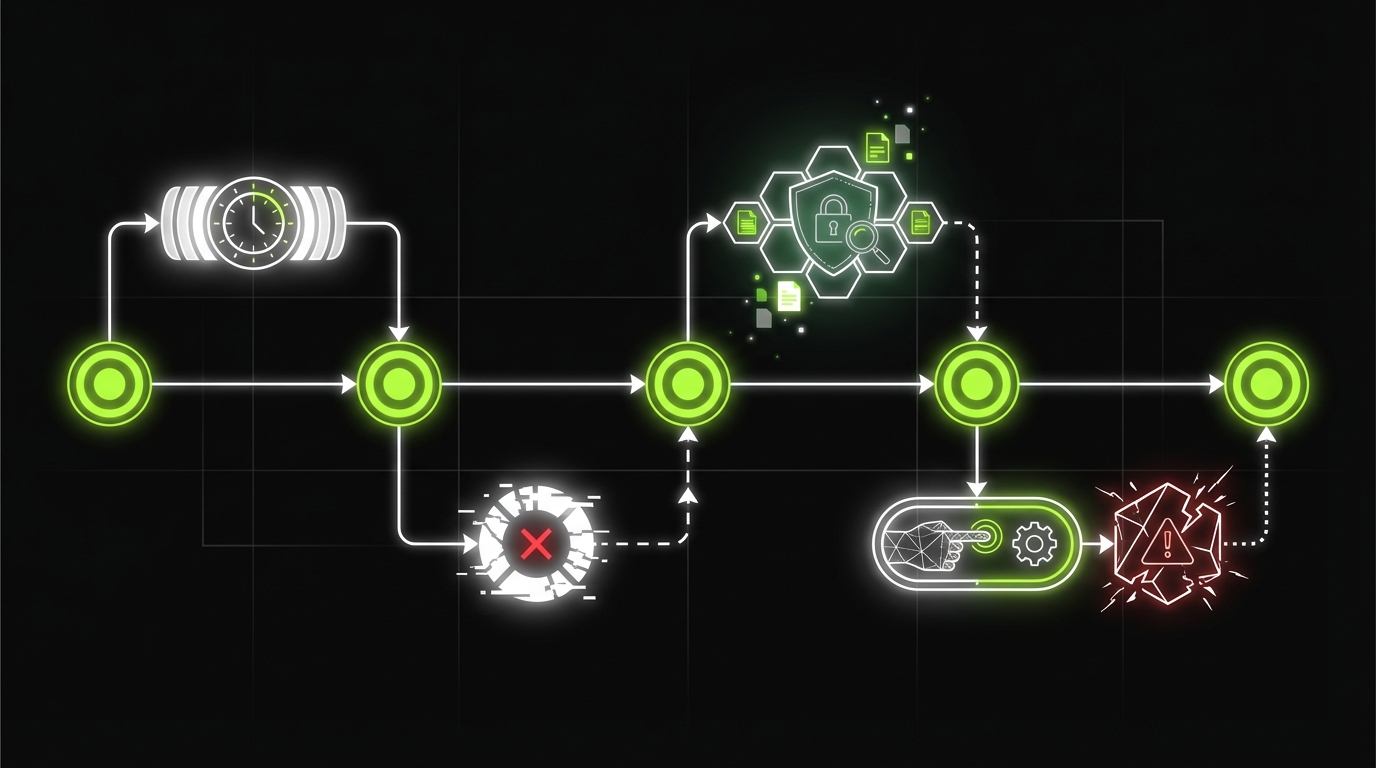

Paved road CI choices

Standardize without lock inStandardization is not centralization. Pick a CI approach based on drift risk and coupling, then measure whether it actually improves delivery. Decision guide:

- Copy paste per repo: fast now, high drift. Works for small orgs or short lived repos.

- Shared templates: default for most teams. Keeps flexibility while reducing snowflakes.

- Central pipeline service: lowest drift, highest coupling. Fits heavily regulated orgs.

Hard metric: if common CI runs exceed 10 to 15 minutes, engineers batch changes. Lead time goes up even if deployment frequency looks fine. Track p50 and p95 CI duration, cache hit rate, and change failure rate before and after standardizing.

A practical platform engineering golden path starts with 1 to 2 workflows:

- “Create a new backend service”

- “Add a new async worker”

- “Ship a frontend app”

Each path should be:

- Fast: first deploy in under a day for an existing team

- Safe: policy checks are automatic and explainable

- Observable: telemetry is there before the first incident

- Extensible: escape hatches exist, but they cost a little effort

Insight: If the golden path does not include deploy and on call basics, it is not a path. It is a starter repo.

What goes into a golden path

Use this checklist to define a path that can survive production:

- Scaffolding

- Repo structure, linting, formatting

- Dependency policy (pinned versions, allowed registries)

- Local dev: one command to run

- Paved road CI/CD

- Build, test, security scan, containerize

- Environment promotion rules

- Standard rollback strategy

- Policy as code

- Minimum TLS, secrets handling, SBOM, image signing

- IaC rules: public buckets, open security groups

- Observability by default

- Structured logs, tracing, metrics, dashboards

- SLOs and alert routing

- Operational baseline

- Runbook template

- Ownership metadata, pager rotation, escalation path

A small detail that matters: include a “why” link for every default. Engineers accept constraints faster when the reason is visible.

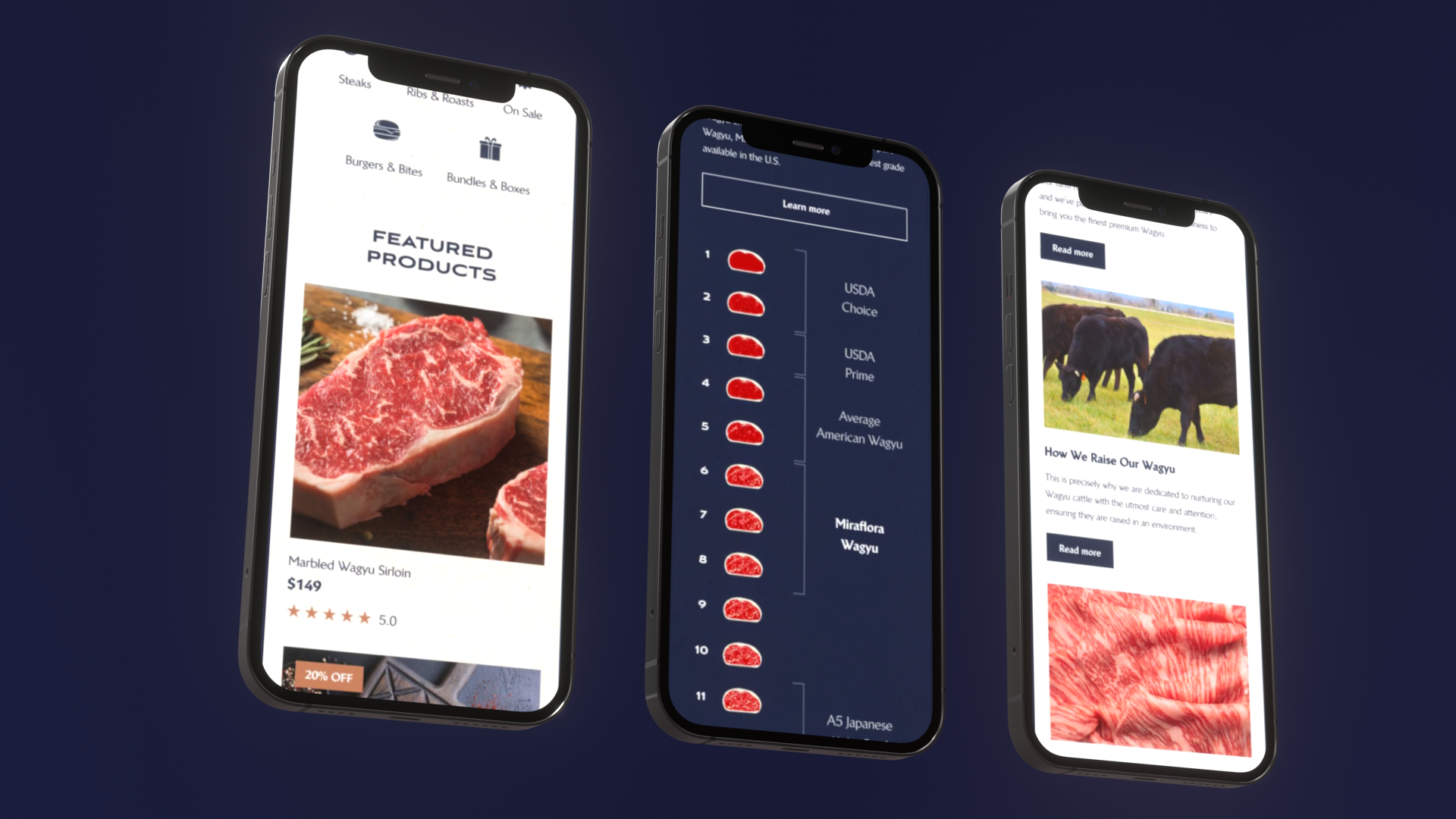

Example: shipping fast with a tight timeline

In delivery work, tight timelines expose whether your workflows are real.

For example, when we delivered a custom Shopify experience for Miraflora Wagyu in 4 weeks, the only way to move that quickly was to reduce coordination overhead. The team was spread across time zones from Hawaii to Germany, so asynchronous work had to be the default.

That is the same pressure you see inside a scaling org. Golden paths reduce the need for synchronous “how do we do X here?” conversations. The platform becomes the shared context.

Fast defaults

Golden paths

First deploy in hours, not weeks. Templates include CI, telemetry, and ownership metadata.

Compliance by design

Policy as code

Automated checks with clear failures and exception workflows. Auditability without ticket queues.

Team autonomy

Paved road CI/CD

Shared pipeline templates with safe customization points. Standard where it matters, flexible where it counts.

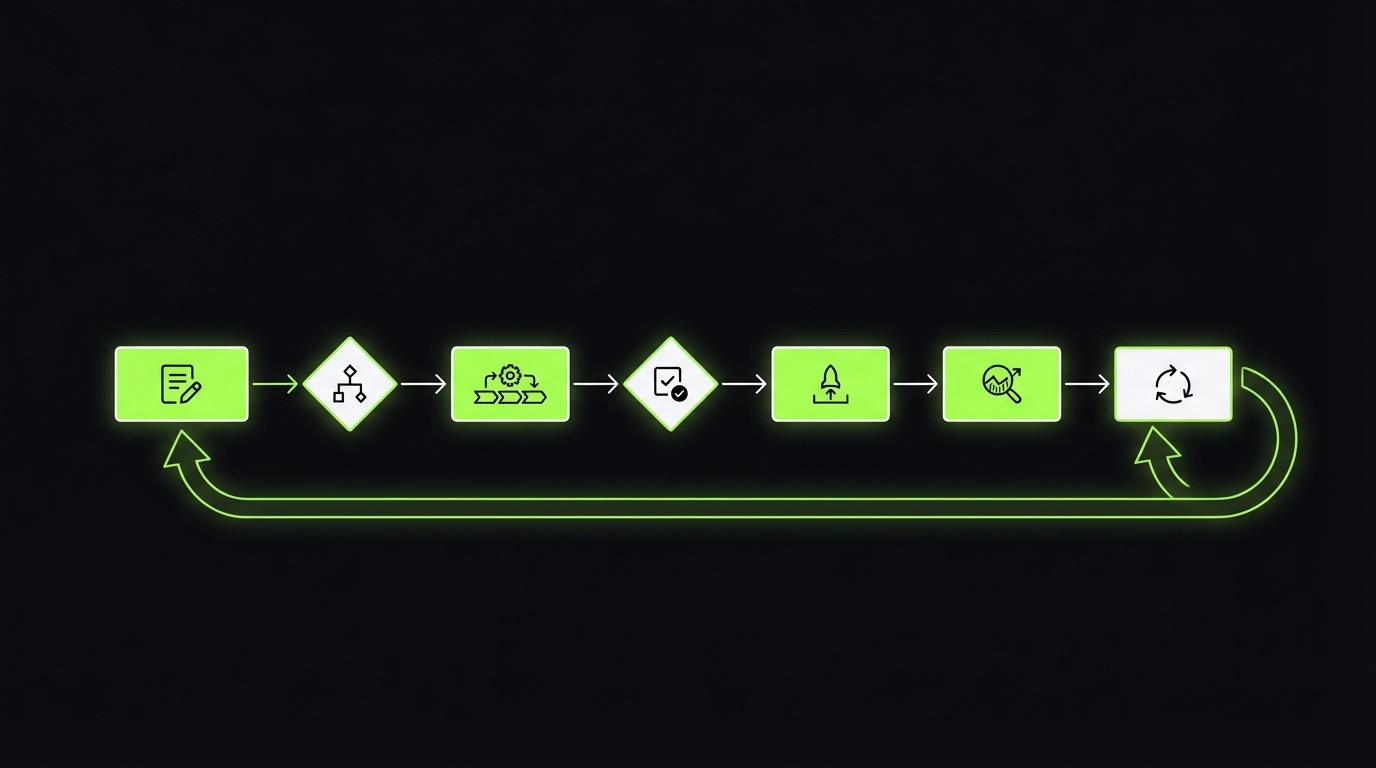

Platform rollout steps

_> A sequence that minimizes risk and politics

→ Scroll to see all steps

Compliance by design with policy as code

Security and compliance cannot be a separate lane. If you want faster delivery, you need compliance by design.

Policy as code basics

Default on, tiered rulesTreat compliance as part of the delivery path. Run checks in CI and at deploy time, keep policies versioned, and apply stricter rules to higher risk systems. Make failures actionable:

- Return a clear message (what to change, where).

- Prefer auto fixes when possible (scaffolder defaults or a bot PR). Hypothesis: if you can auto fix 80% of violations, review load drops and lead time improves.

What fails: policy becomes “platform police” when it only blocks. Mitigation: publish a baseline policy set, document exceptions, and track metrics like policy failure rate, time to remediate, and number of approved waivers.

Policy as code works when it is:

- Default on: checks run in CI and at deploy time

- Actionable: failures explain what to change

- Versioned: policies evolve with the platform

- Tiered: stricter rules for higher risk systems

Insight: The fastest compliance program is the one where engineers rarely talk to compliance because the defaults already satisfy the baseline.

Here is a minimal example using Open Policy Agent style rules for Kubernetes. Keep it boring and auditable.

package kubernetes.admission

deny[msg] {

input.request.kind.kind == "Deployment"

container := input.request.object.spec.template.spec.containers[_]

not container.resources.limits.cpu

msg := sprintf("container %s must set cpu limits", [container.name])

}

deny[msg] {

input.request.kind.kind == "Deployment"

container := input.request.object.spec.template.spec.containers[_]

container.securityContext.runAsNonRoot != true

msg := sprintf("container %s must run as non root", [container.name])

}

Subtle point: policy as code is not only about blocking. It is also about auto fixing. If 80% of violations can be fixed by the scaffolder or a bot PR, do that.

Compliance ready defaults that reduce work

Defaults that usually pay back quickly:

- Signed container images and provenance

- SBOM generation on every build

- Secret scanning and blocked commits

- Encrypted storage by default

- Network policies and least privilege service accounts

- Dependency allow list for regulated environments

If you operate in multiple regulatory contexts, define tiers:

- Tier 0: internal tools

- Tier 1: customer facing

- Tier 2: regulated or payments

Then map golden paths to tiers. Do not ask teams to guess.

What to do when policy blocks shipping

Blocking is sometimes necessary. But if it is your only tool, teams will route around it.

Mitigations that work:

- Provide an exception workflow with a time bound approval

- Log every exception and review monthly

- Add a platform backlog item for the top recurring exception

- Publish policy change notes like you would for an API

This keeps governance strict without creating a ticket queue culture.

Rollout plan

Start with 1 to 2 golden pathsA rollout that works in real orgs looks like this:

- Pick two pilot teams with different needs.

- Build one golden path end to end.

- Get first deploy done with both teams.

- Fix the top 5 papercuts before adding features.

- Add the second golden path.

- Publish adoption docs and office hours.

- Scale via templates and versioned modules.

Rules of thumb:

- If onboarding takes more than one hour, adoption will stall.

- If teams cannot extend the path, they will fork it.

- If you cannot measure outcomes, you cannot justify headcount.

Hypothesis you can validate: every 1 minute you cut from average CI time reduces lead time by more than 1 minute because it reduces batching and context switching.

What improves when the platform is working

Shorter lead time

Teams spend less time on setup, pipeline debugging, and manual release steps.

Lower MTTR

Incidents are diagnosable because logs, traces, and metrics exist from day one.

Reduced change failure rate

Policy checks and standardized deploy patterns catch risky changes earlier.

Faster onboarding

New hires ship sooner because the platform is the interface, not tribal knowledge.

More predictable compliance

Audit evidence is produced automatically through CI and deployment events.

Less platform politics

Golden paths earn adoption by saving time, not by forcing standards through mandates.

Paved road CI/CD without slowing teams

Standardization is not the same as centralization.

Golden path definition

Adopt in hours, not weeksStart with 1 to 2 workflows (for example: new backend service, async worker, frontend app). A usable golden path includes deploy + on call basics, not just a starter repo. Checklist:

- Fast: first deploy in under a day for an existing team (measure lead time from scaffold to prod).

- Safe: policy checks run automatically and explain failures.

- Observable: logs, metrics, traces, and alerts exist before the first incident.

- Extensible: allow escape hatches, but make them slightly more work so defaults stay the norm.

Failure mode to watch: teams copy the template once, then drift. Mitigation: keep the path versioned and make upgrades routine (bot PRs or scheduled updates).

A good paved road CI/CD setup gives teams:

- A default pipeline that is fast and reliable

- Safe customization points

- Shared build cache and artifact strategy

- Consistent release semantics across services

The goal is fewer unique snowflakes, not one pipeline to rule them all.

Here is a simple comparison you can use when deciding how hard to standardize:

| Approach | Speed to adopt | Flexibility | Risk | When it fits |

|---|---|---|---|---|

| Copy paste pipeline per repo | Fast now | High | High drift | Small org, short lived repos |

| Shared pipeline templates | Medium | Medium | Medium | Most teams, most stacks |

| Central pipeline service | Slow | Low to medium | Low drift, high coupling | Heavily regulated orgs |

Key Stat: If CI takes longer than 10 to 15 minutes for common changes, engineers start batching work. Lead time climbs even if deployment frequency looks fine.

Subsections below focus on keeping speed while adding consistency.

Standard pipeline stages that matter

Keep the default pipeline short and predictable:

- Lint and unit tests

- Build and package

- Security scans (SAST, dependency, container)

- Integration tests for changed modules

- Publish artifact

- Deploy to preview

- Promote to staging and production

Then add the “boring but important” parts:

- Deterministic builds

- Cache strategy

- Secret injection pattern

- Rollback and canary support

How to avoid blocking teams

Three patterns that work in practice:

- Golden path as the easiest path: teams can diverge, but it takes effort

- Guardrails, not gates: warn first, block only for high risk policies

- Platform as a library: publish pipeline and infra modules as versioned packages

If you run the platform as a product, you will also run it like a product:

- Release notes

- Deprecation windows

- Backward compatible changes by default

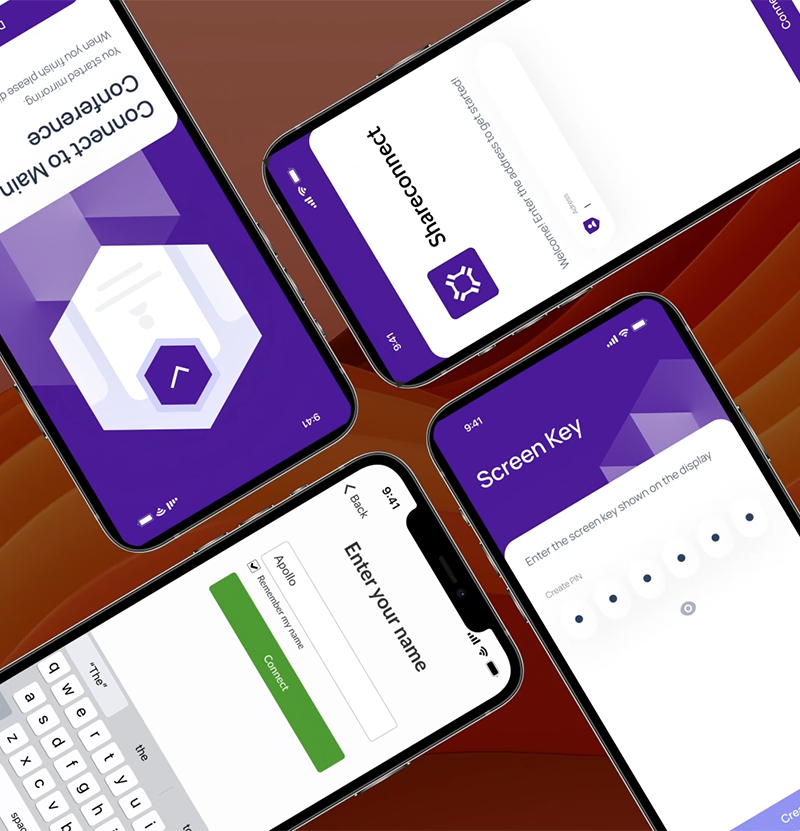

Example: moving fast across platforms

In the screen capturing mobile app revamp we delivered in 2 months, the tricky part was not UI polish. It was navigating strict OS permissions and store constraints across Android and iOS.

That kind of work benefits from standard CI steps and checks. You want repeatable signing, predictable build outputs, and clear release rules. Not because it is fancy, but because it reduces “it worked on my machine” failures that burn calendar time.

Executive scorecard

Platform impact metrics that finance acceptsAvoid vanity metrics like number of templates or portal logins. Use metrics that connect to risk and cost.

| Metric | Definition | Why execs care | How to measure |

|---|---|---|---|

| Lead time | Commit to production | Faster revenue and learning | Git plus deploy events |

| MTTR | Detect to restore | Lower outage cost | Incident timestamps |

| Change failure rate | Deploys causing incidents | Reliability and brand risk | Deploys linked to incidents |

| Deployment frequency | Prod deploys per service | Delivery capacity | CD logs |

| Onboarding time | New dev to first deploy | Hiring efficiency | Survey plus first deploy event |

| Toil hours | Manual ops per week | Opex and burnout | Time tracking sample or surveys |

Set targets per quarter. Keep them realistic. If you improve lead time but change failure rate spikes, you did not win.

Conclusion

Platform engineering is worth it when it reduces friction for self directed teams and makes production safer by default.

If you want a simple starting point, do this:

- Pick one golden path that covers a weekly workflow.

- Bake in policy as code and observability by default from day one.

- Standardize paved road CI/CD via templates, not mandates.

- Measure outcomes with a scorecard executives accept.

Insight: The platform team wins when feature teams stop talking about the platform. Not because they do not care, but because it quietly works.

Practical next steps you can assign this week:

- Inventory your top 10 sources of delivery toil (from retros and incident reviews)

- Define the minimum golden path: scaffold, pipeline, telemetry, runbook

- Set targets for lead time, MTTR, and change failure rate

- Publish your first requirements matrix and get two teams to validate it

If you do that, your internal developer platform stops being an initiative and becomes infrastructure your org can actually ship on.

Anti patterns to avoid

Platform work adds drag when it looks like this:

- A portal nobody needs, built before fixing CI and deploy basics

- Mandatory approvals for low risk changes

- Golden paths that only work for one team’s stack

- “Standardization” that breaks local dev and slows feedback loops

- Metrics that track output (tickets closed) instead of outcomes (lead time, MTTR)

Treat these as smoke alarms. If you see them, course correct fast.